You Don't Ship a CPU: Why the Harness Became the Product

The most consequential insight in coding agent development is counterintuitive: the model matters less than the infrastructure wrapping it. Phil Schmid's analogy, in which the model is the CPU and the harness is the operating system, captures a fundamental shift in how the industry thinks about AI coding tools. It also helps explain why Claude Code, Cursor, and Copilot can use overlapping foundation models yet deliver starkly different developer experiences and satisfaction ratings.

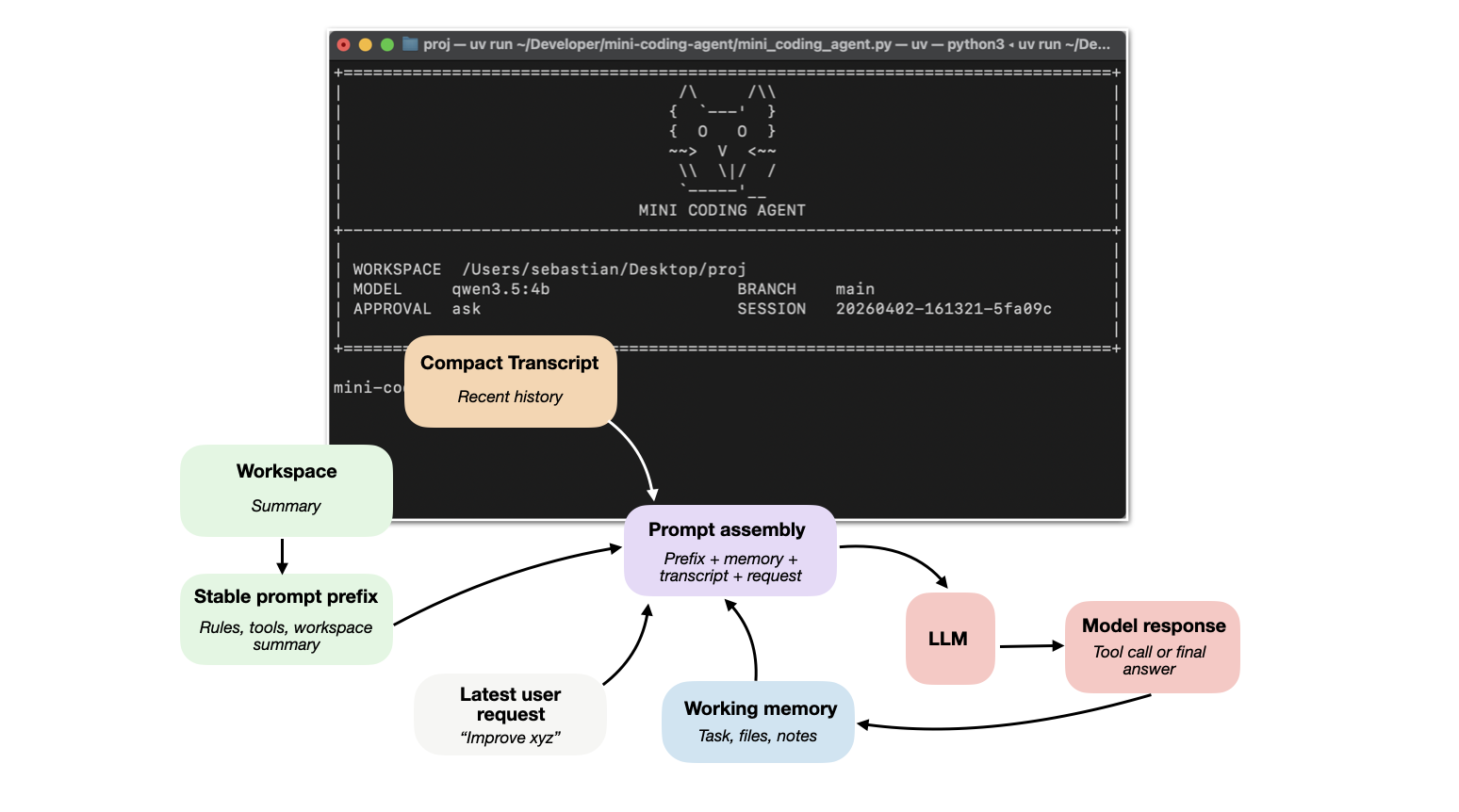

The harness encompasses everything the developer never sees but always feels: how repository context is gathered and compressed, how tools are validated and permissioned, how conversation history is compacted when context windows fill up, and how long-running sessions maintain continuity. Anthropic's published engineering guidance describes a two-part approach to session continuity: an Initializer Agent sets up the environment and a progress file in the first session, and a Coding Agent in each later session reads the progress notes and git history before continuing. The pattern addresses a common problem, agents forgetting what they were doing after a context reset.

Sebastian Raschka's six-component taxonomy formalizes these concerns into a reference architecture: Live Repo Context, Prompt Shape and Cache Reuse, Structured Tools with Validation and Permissions, Context Reduction and Output Management, Transcripts/Memory/Resumption, and Delegation with Bounded Subagents. The taxonomy signals that coding agent development has matured from ad-hoc experimentation into an engineering discipline with identifiable, repeatable components.
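To make the session-continuity idea concrete, here is a minimal sketch of how a harness might rebuild working context at the start of a session from progress notes and recent git history. It is an illustration under stated assumptions, not Anthropic's implementation: the file name `PROGRESS.md`, the function names, and the character budget are all hypothetical, and the only external call is the standard `git log --oneline -n <N>` command.

```python
import subprocess
from pathlib import Path


def read_git_log(repo: Path, limit: int = 10) -> list[str]:
    """Return recent commits as one-line summaries, newest first.

    Returns an empty list if git is unavailable or the directory is not a repo.
    """
    try:
        result = subprocess.run(
            ["git", "log", "--oneline", "-n", str(limit)],
            cwd=repo, capture_output=True, text=True, check=False,
        )
    except OSError:
        return []
    return result.stdout.splitlines() if result.returncode == 0 else []


def build_briefing(progress_notes: str, commits: list[str], max_chars: int = 2000) -> str:
    """Assemble a resumption briefing from notes and commit history.

    Assumption: new notes are appended at the end of the file, so when the
    notes exceed the budget we keep the tail and mark the truncation.
    """
    notes = progress_notes.strip()
    if len(notes) > max_chars:
        notes = "[earlier notes truncated]\n" + notes[-max_chars:]
    commit_block = "\n".join(f"- {line}" for line in commits) or "- (no commits found)"
    return (
        "## Progress notes\n" + (notes or "(none yet)") + "\n\n"
        "## Recent commits\n" + commit_block + "\n\n"
        "Resume from the first unfinished item above."
    )


def start_session(repo: Path, notes_file: str = "PROGRESS.md") -> str:
    """Read the (hypothetical) notes file and git history, then build the briefing."""
    path = repo / notes_file
    notes = path.read_text(encoding="utf-8") if path.exists() else ""
    return build_briefing(notes, read_git_log(repo))
```

Calling `start_session(Path("."))` in a repository returns a text briefing that could be placed at the top of a fresh context window. Keeping `build_briefing` a pure function separates the fallible I/O (file reads, the git subprocess) from the prompt-shaping logic, which makes the latter easy to test and to tune against a context budget.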