The Infrastructure Play: Why AWS Bets on Both Horses Instead of Picking One

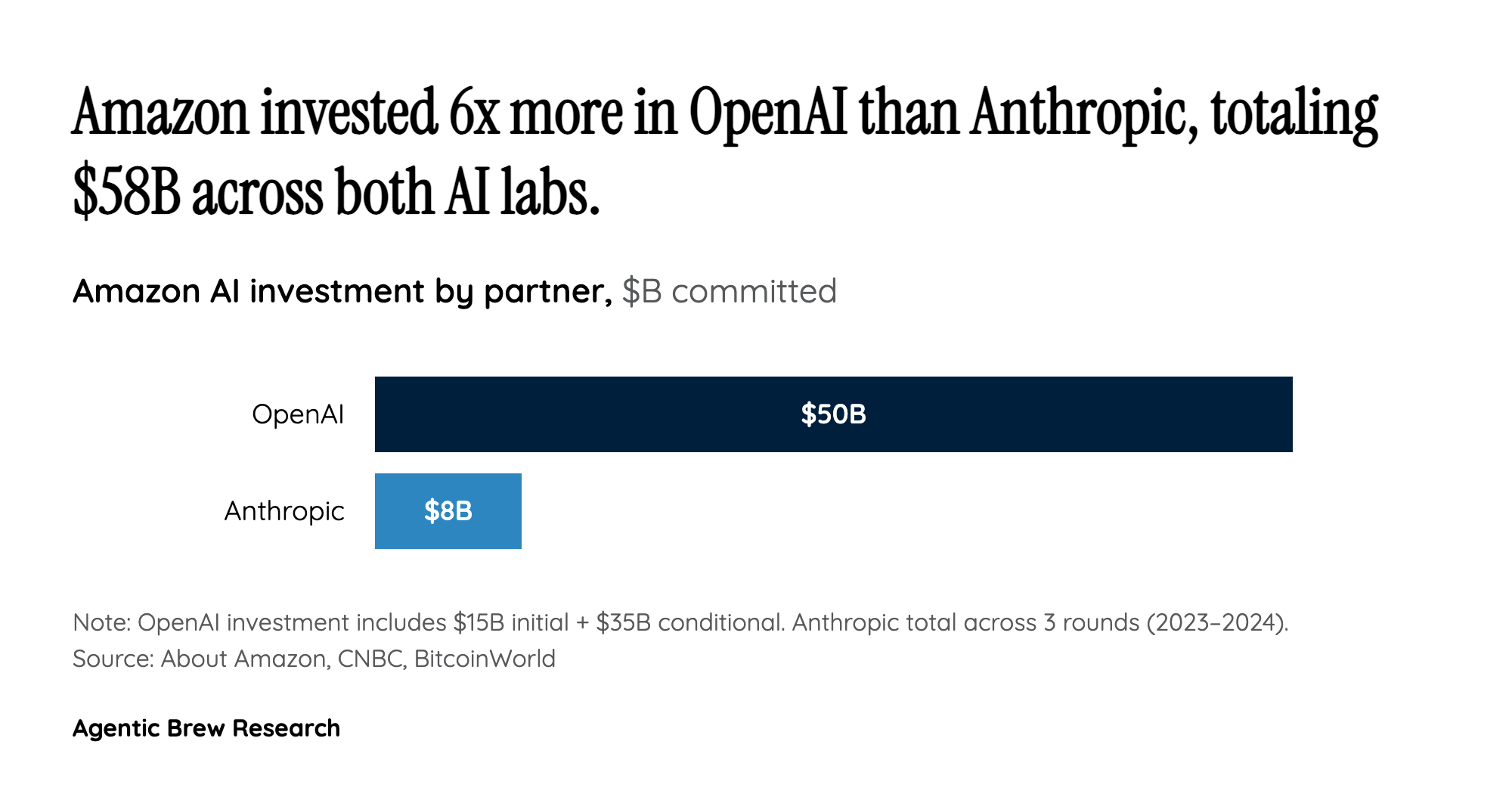

Amazon's decision to invest $8B in Anthropic and up to $50B in OpenAI appears contradictory only if you view AWS as an AI model company. It makes perfect sense if you view AWS as what it actually is: a cloud infrastructure utility that profits from compute consumption regardless of which model wins. Matt Garman's defense at HumanX 2026 boiled down to a single line: 'Last I checked, the internet still is pretty big.' The market for AI inference and training is expanding so rapidly (projected at $2.5 trillion globally in 2026 with 44% year-over-year growth, per Garman's own HumanX presentation) that AWS gains more from ensuring both leading labs run on its silicon than from picking a champion.

The mechanics reveal the strategy's elegance. OpenAI's $100B expanded cloud agreement over eight years commits 2 GW of Trainium capacity to AWS. Anthropic's dedicated 1,200-acre Indiana data center houses 500,000 Trainium2 chips. Both deals lock major AI workloads into Amazon's custom silicon pipeline, reducing dependence on Nvidia GPUs and creating a vertically integrated compute moat. Garman's pledge, 'We've promised them we won't give ourselves unfair competitive advantage,' is the necessary diplomacy. The real competitive advantage, though, lies not in model favoritism but in making Trainium the default training substrate for frontier AI.

Social media engagement suggests that the public grasps the infrastructure angle. A CNBC YouTube video titled 'No Nvidia Chips Needed! Amazon's New AI Data Center For Anthropic Is Truly Massive' pulled 1.26 million views and 13,000 likes, dwarfing the Bloomberg and CNBC coverage of the OpenAI financial deal (41,900 and 30,100 views respectively). On X, @AWSNewsroom's post about AWS's full-stack AI approach drew 364 engagements, while @moneymoverscnbc's clip quoting 'not just one winner' earned 219 likes.