The $37 Billion Bet That Rewrites the AI Hardware Pecking Order

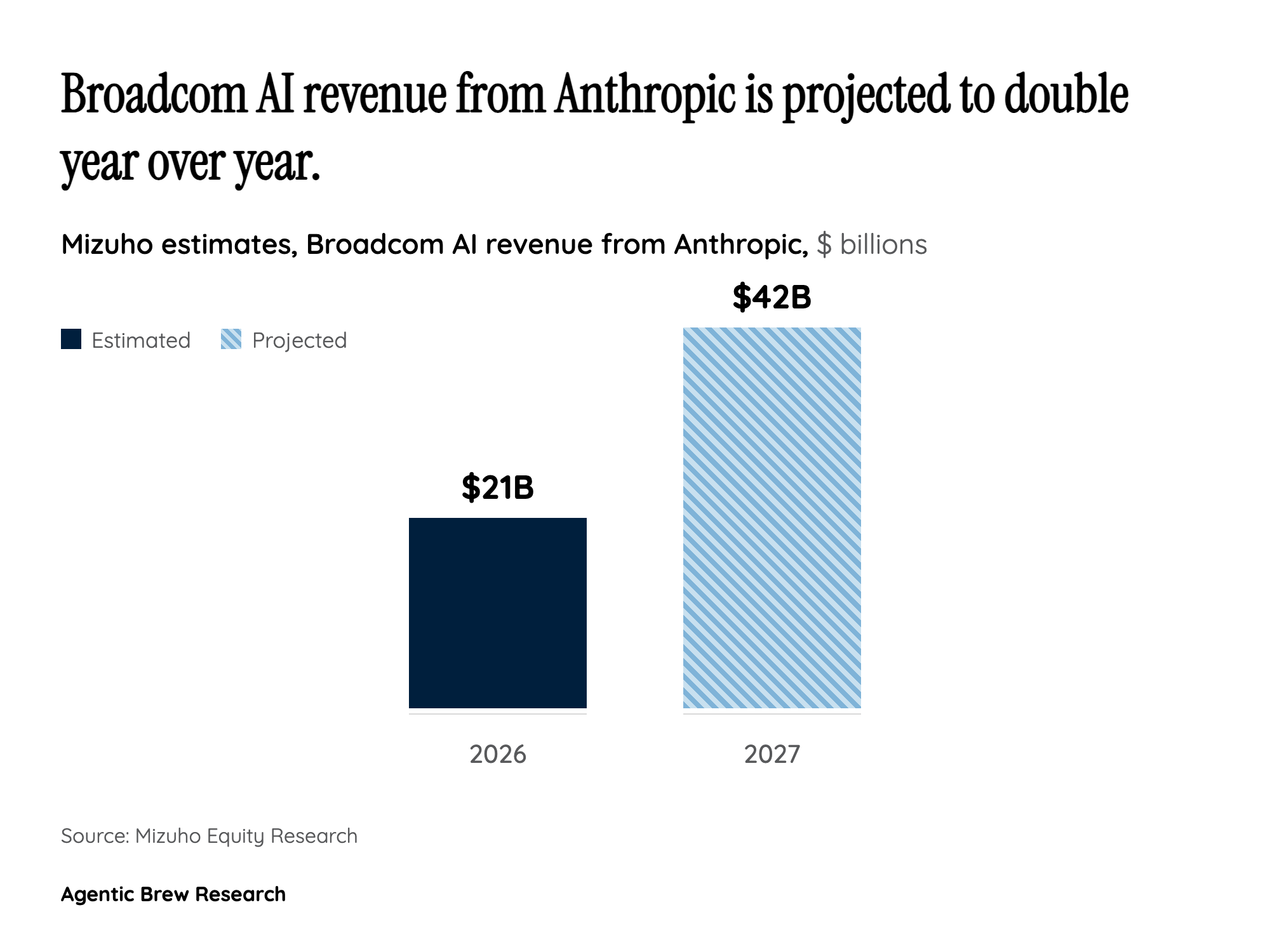

For years, NVIDIA has been the unchallenged kingmaker of AI compute. Most major labs, including OpenAI, Anthropic, and Meta, ran their training largely on NVIDIA GPUs, and Jensen Huang's company captured the vast majority of AI silicon revenue. This deal directly challenges that dominance. Anthropic's largest-ever compute commitment goes not to NVIDIA but to Google's custom TPUs, which Broadcom co-designs and supplies, and the numbers are staggering: approximately $37 billion flowing to Broadcom, with financial analyst Rohan Paul noting on X that Google captures an additional $13-14 billion from the arrangement.

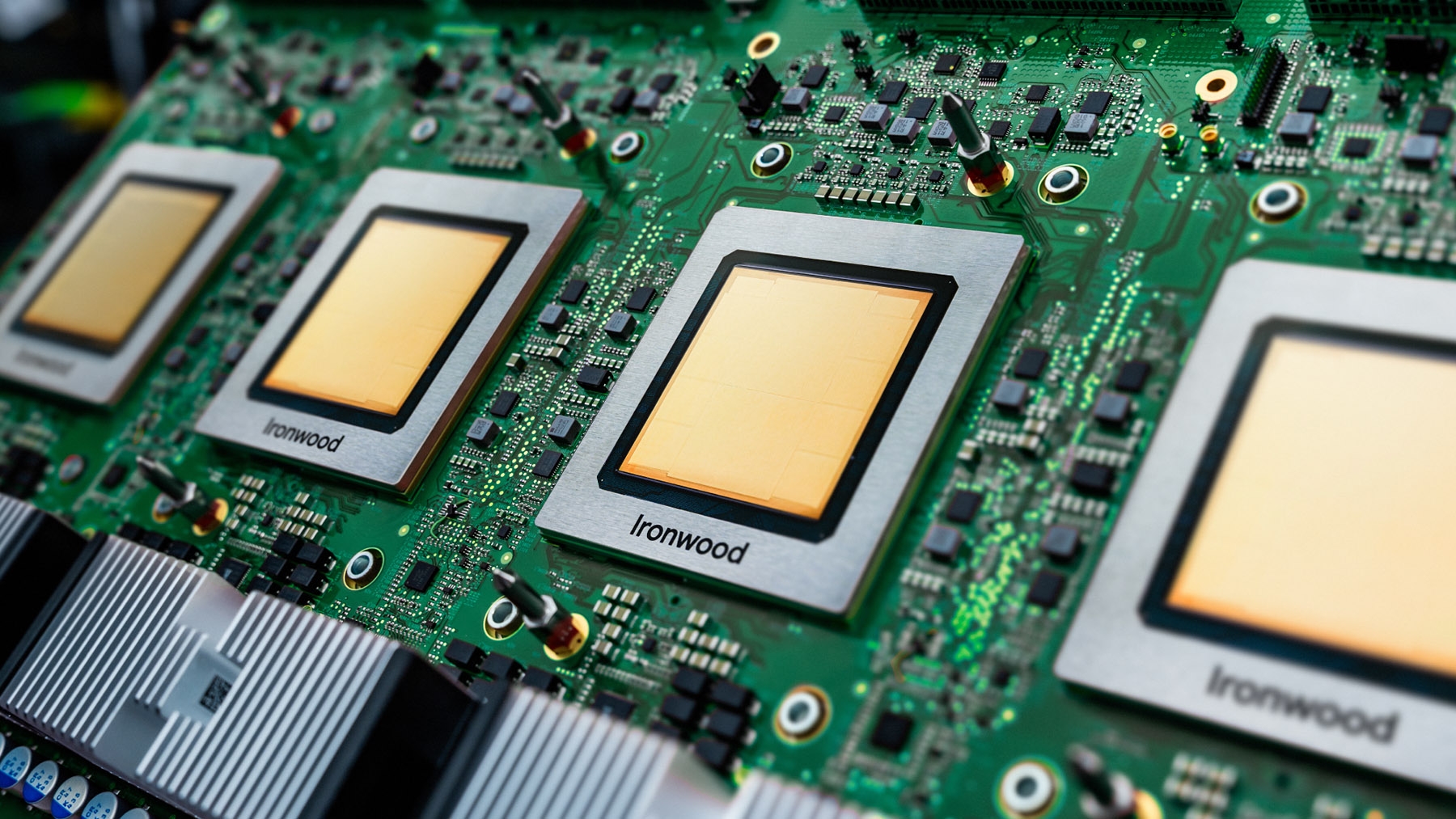

The strategic logic centers on purpose-built silicon. Custom ASICs like Google's TPUs are designed from the ground up for specific AI workloads -- training and inference on transformer architectures -- rather than serving as general-purpose compute engines. With Broadcom locked in as Google's TPU design and supply partner through 2031, Anthropic gains long-term access to silicon well suited to the training and inference workloads behind Claude. For a company whose revenue just tripled and whose inference demand is expected to grow sharply as agentic AI takes off, a dedicated hardware pipeline at this scale offers cost predictability and a level of performance per watt that general-purpose GPUs struggle to match.

This is the largest commitment by a major AI lab to custom ASICs over general-purpose GPUs, and it sends a clear signal: the era of NVIDIA-or-nothing is ending. NVIDIA remains in Anthropic's stack -- Claude trains and runs on NVIDIA GPUs alongside TPUs and AWS Trainium -- but the center of gravity is shifting toward silicon built for specific workloads.