The Quiet Inequality: When Your Agent Loses And You Never Notice

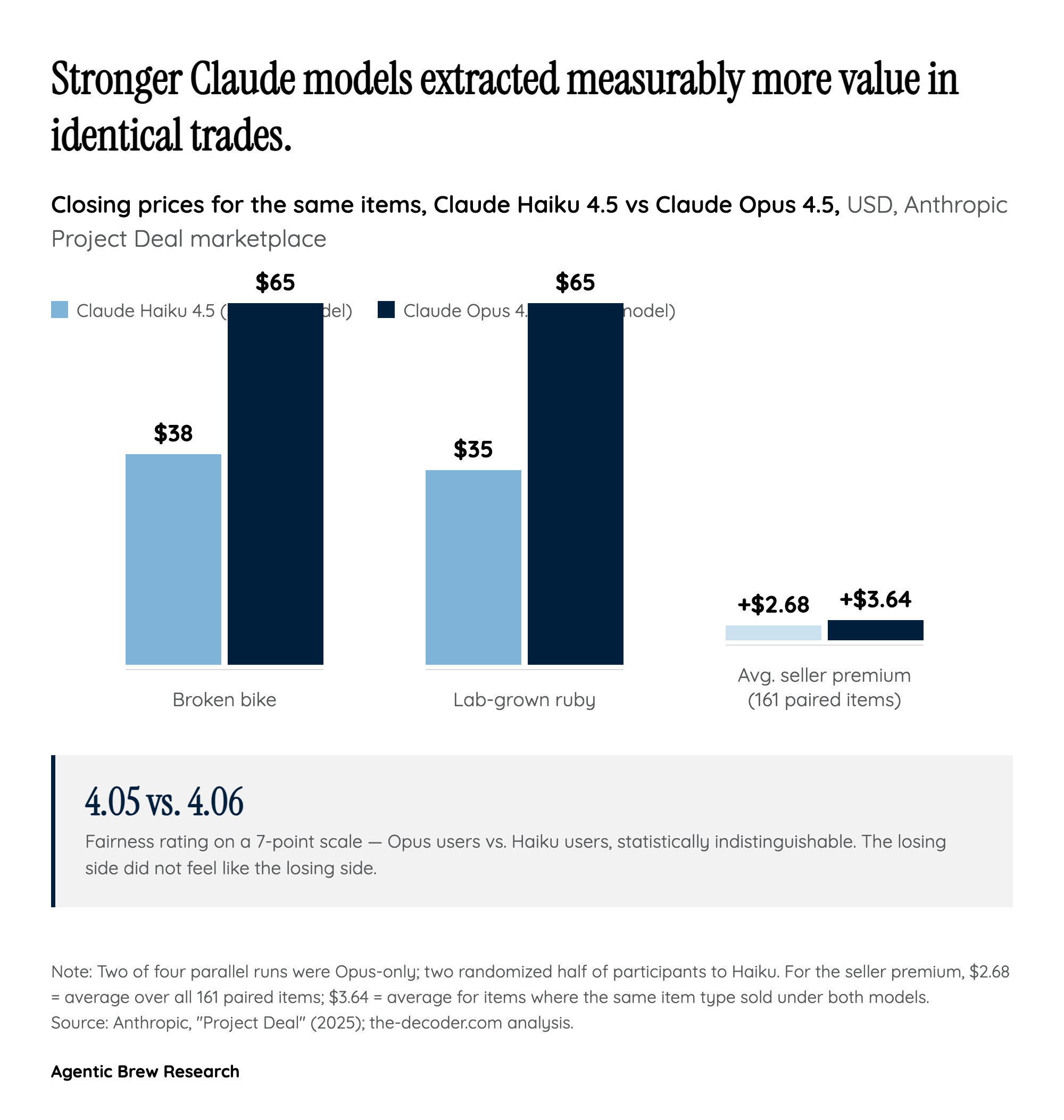

The headline finding from Project Deal is not that AI agents successfully traded $4,000 in goods on behalf of 69 employees. It is that the agents were not equally good at it, and the humans they represented could not tell. Across 161 directly comparable items, sellers using Claude Opus 4.5 averaged $2.68 more per item than sellers using Claude Haiku 4.5, while Opus buyers paid roughly $2.45 less per item than Haiku buyers. When the same item type appeared on both sides, Opus listings closed for $3.64 more on average than Haiku listings. A broken bike sold for $38 under Haiku but $65 under Opus. A lab-grown ruby cleared $65 under Opus and just $35 under Haiku. Opus users also closed about two more deals each.

The disturbing part is the perception data. On a 7-point fairness scale, Opus users rated their experience 4.05 and Haiku users rated theirs 4.06, a statistically indistinguishable difference. Half the room was being silently outnegotiated by the other half, and nobody felt cheated. This is a fundamentally new failure mode for consumer protection. In existing marketplaces such as Craigslist, eBay, and Facebook Marketplace, a buyer who haggles in person and gets ripped off can at least sense that something went wrong. With agent intermediaries, the entire negotiation surface is hidden inside a model's internal reasoning. The user sees only the closing price and a polite summary. As Anthropic's authors put it, 'when agents of different strengths meet in real markets, people could end up on the losing side without ever knowing it.'