The Bottleneck Quietly Moved From Capital to Memory

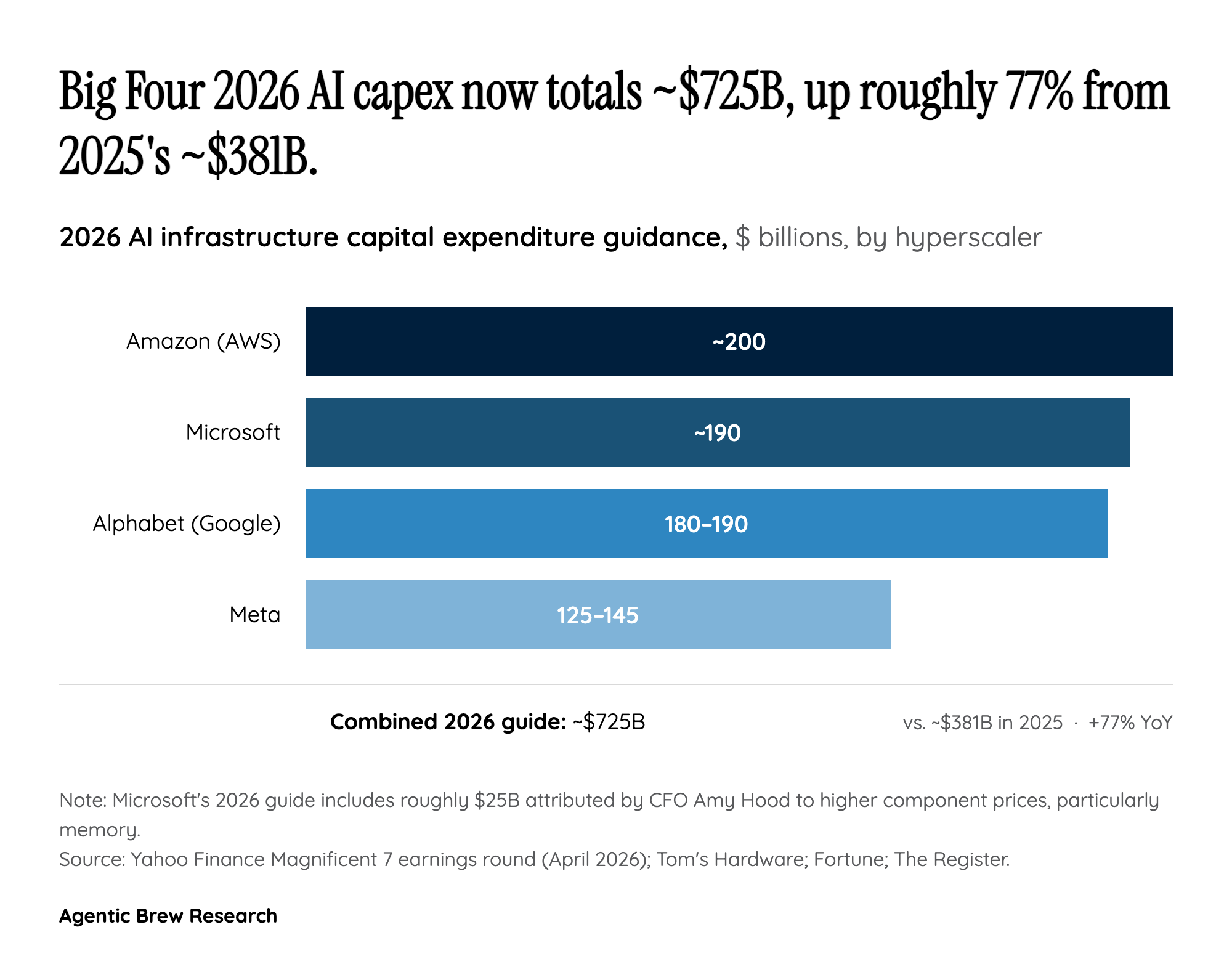

The most revealing line in Microsoft's Q3 FY2026 disclosure is not the headline $190 billion. It is the $25 billion. CFO Amy Hood told investors that roughly that much of the upward revision is not extra capacity, just higher prices for the same components. Set against a $152-$154 billion consensus, that is about two-thirds of the $36-$38 billion surprise. Memory and storage have, in The Register's phrasing, 'skyrocketed' since late 2025, with some grades more than tripling in price as AI infrastructure demand outstrips supply.
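As a quick sanity check on those figures, the arithmetic can be sketched in a few lines of Python. This is a minimal illustration, not a financial model: the function name `price_share_of_surprise` is hypothetical, the inputs are the numbers quoted above, and it assumes the $190 billion figure and the consensus range are measured on the same basis.

```python
def price_share_of_surprise(guidance_bn, consensus_low_bn, consensus_high_bn, price_effect_bn):
    """Return the (low, high) fraction of the guidance-vs-consensus gap
    that is explained by component price increases alone.

    All inputs are in billions of dollars. Hypothetical helper for illustration.
    """
    largest_gap = guidance_bn - consensus_low_bn    # surprise vs. the low end of consensus
    smallest_gap = guidance_bn - consensus_high_bn  # surprise vs. the high end of consensus
    return price_effect_bn / largest_gap, price_effect_bn / smallest_gap


# Figures as quoted in the article (assumed to be on a comparable basis).
low, high = price_share_of_surprise(190, 152, 154, 25)
print(f"Price effect explains {low:.0%} to {high:.0%} of the surprise")

# Spending implied if component prices had stayed flat.
ex_price_guidance_bn = 190 - 25
print(f"Guidance excluding price effect: ${ex_price_guidance_bn}B")
```

On these inputs the price effect accounts for roughly two-thirds of the gap, and the figure excluding price inflation would be $165 billion, still above the top of the consensus range.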

That reframes the story. For two years the popular narrative around hyperscaler capex was a capital arms race: who could write the biggest check to GPU vendors. The new bottleneck is upstream of the GPU. High-bandwidth memory (HBM) — the stacked DRAM that sits next to an accelerator and feeds it data — is now the binding constraint on how fast Microsoft can stand up AI capacity. Microsoft is paying more, getting the same boxes, and still telling Wall Street it expects to remain capacity-constrained at least through 2026.

For builders, that has a concrete consequence: expect Azure AI capacity to stay tight, expect quota gates on the most popular GPU SKUs to persist, and expect the next round of pricing power in the AI stack to flow not to model labs or cloud providers but to the handful of vendors who can fab HBM3 stacks at scale. The shift in margin from buyer to component supplier is the single most underappreciated structural change in this earnings cycle.