The circularity question: $25B out, $100B+ back in

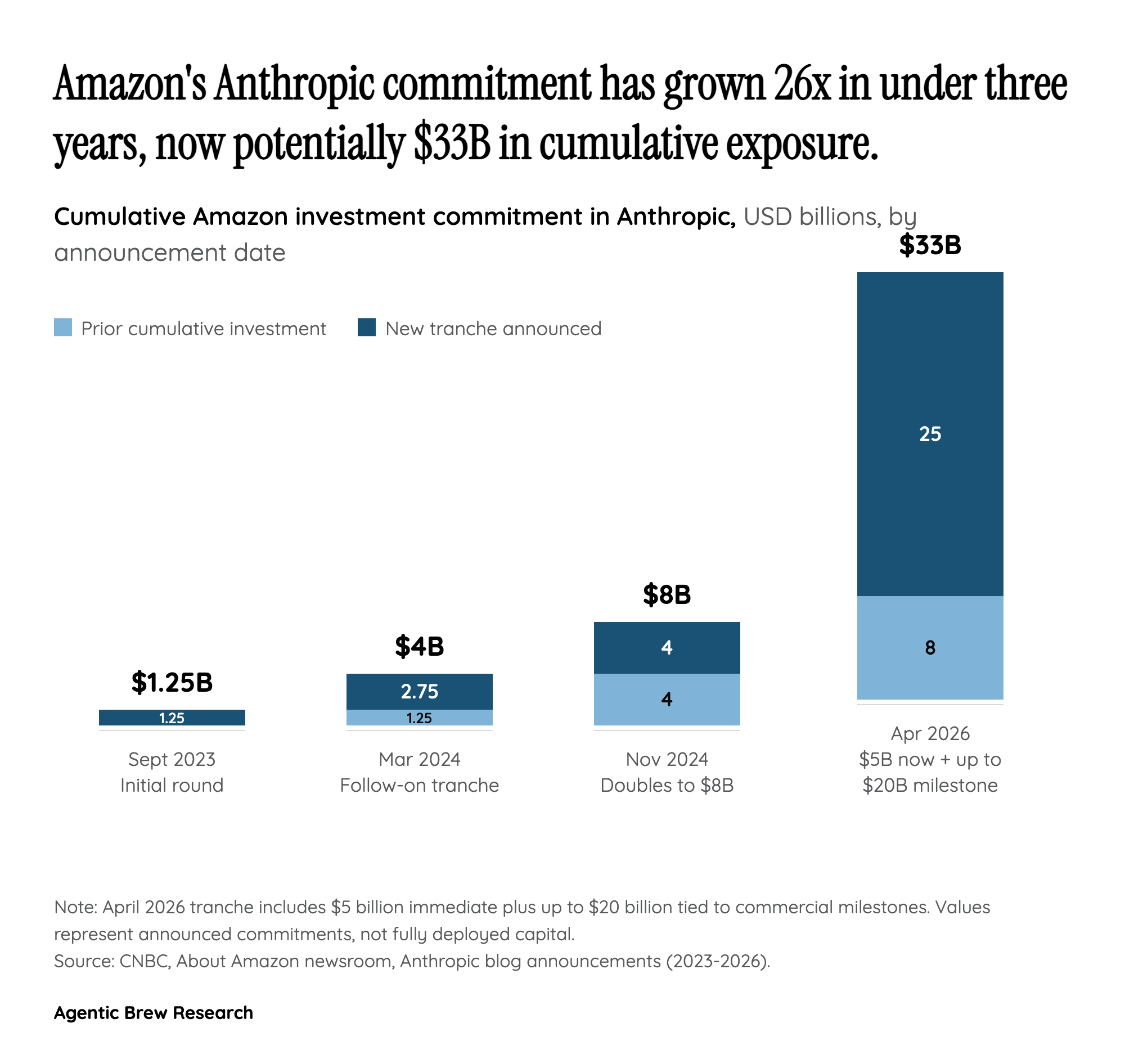

On paper, Amazon is writing Anthropic a check for up to $25 billion: $5 billion immediately and up to $20 billion more tied to commercial milestones, on top of the $8 billion already deployed. In the same announcement, Anthropic commits more than $100 billion over the next decade to AWS technologies and secures up to 5 gigawatts of Trainium capacity. On headline figures, the net direction of cash is unmistakable: Amazon sends up to $25 billion out through equity, and Anthropic commits more than four times that amount back, spread over ten years, through a cloud contract pegged to Amazon's own silicon roadmap.
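To make the headline arithmetic concrete, here is a minimal sketch that uses only the figures quoted above. The function name `headline_flows` and the `milestone_fraction` parameter are hypothetical, and the average annual figure assumes the cloud commitment is spread evenly over the decade, which the announcement does not state.

```python
# Headline figures quoted in the announcement, in billions of USD.
PRIOR_EQUITY = 8.0            # already deployed before this deal
UPFRONT_EQUITY = 5.0          # paid immediately
MILESTONE_EQUITY_MAX = 20.0   # ceiling on the milestone-linked tranche
CLOUD_COMMITMENT_MIN = 100.0  # "more than $100 billion", so a floor
CONTRACT_YEARS = 10           # "over the next decade"


def headline_flows(milestone_fraction: float = 1.0) -> dict:
    """Compare equity out with contracted cloud spend back in.

    milestone_fraction is a hypothetical knob: the share of the
    milestone tranche that actually disburses (0.0 to 1.0).
    """
    if not 0.0 <= milestone_fraction <= 1.0:
        raise ValueError("milestone_fraction must be between 0 and 1")
    new_equity = UPFRONT_EQUITY + MILESTONE_EQUITY_MAX * milestone_fraction
    return {
        "new_equity": new_equity,
        "cumulative_equity": PRIOR_EQUITY + new_equity,
        # Floor on the ratio, since the commitment is "more than" $100B.
        "spend_to_new_equity": CLOUD_COMMITMENT_MIN / new_equity,
        # Assumption: even spread across the contract term.
        "avg_annual_spend": CLOUD_COMMITMENT_MIN / CONTRACT_YEARS,
    }


if __name__ == "__main__":
    for name, value in headline_flows().items():
        print(f"{name}: {value:.1f}")
```

If none of the milestone tranche disbursed (`headline_flows(0.0)`), the floor on the spend-to-equity ratio would rise from 4 to 20, which is why the terms of that tranche matter so much to the circularity debate.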

Retail communities caught the structural oddity almost immediately. On Reddit, investors frame the arrangement as "vendor financing" and a "circular financing loop," arguing that both parties get to book favorable numbers on money that never fully leaves the building. TechCrunch flags the same concern at an institutional level: a $25B investment paired with $100B+ of contracted cloud spend raises the circular-revenue question analysts have already been asking about the Nvidia-OpenAI-Microsoft flows. A contrarian camp counters that Anthropic is genuinely driving incremental AWS consumption, making the equity stake a real option on a real business. The deal's legitimacy will ultimately hinge on two things: whether Anthropic's workloads scale independently of Amazon's capital, and whether the milestone-linked $20B tranche disburses on organic commercial triggers or on terms that look suspiciously like a rebate.