The Latency Wall That Just Came Down

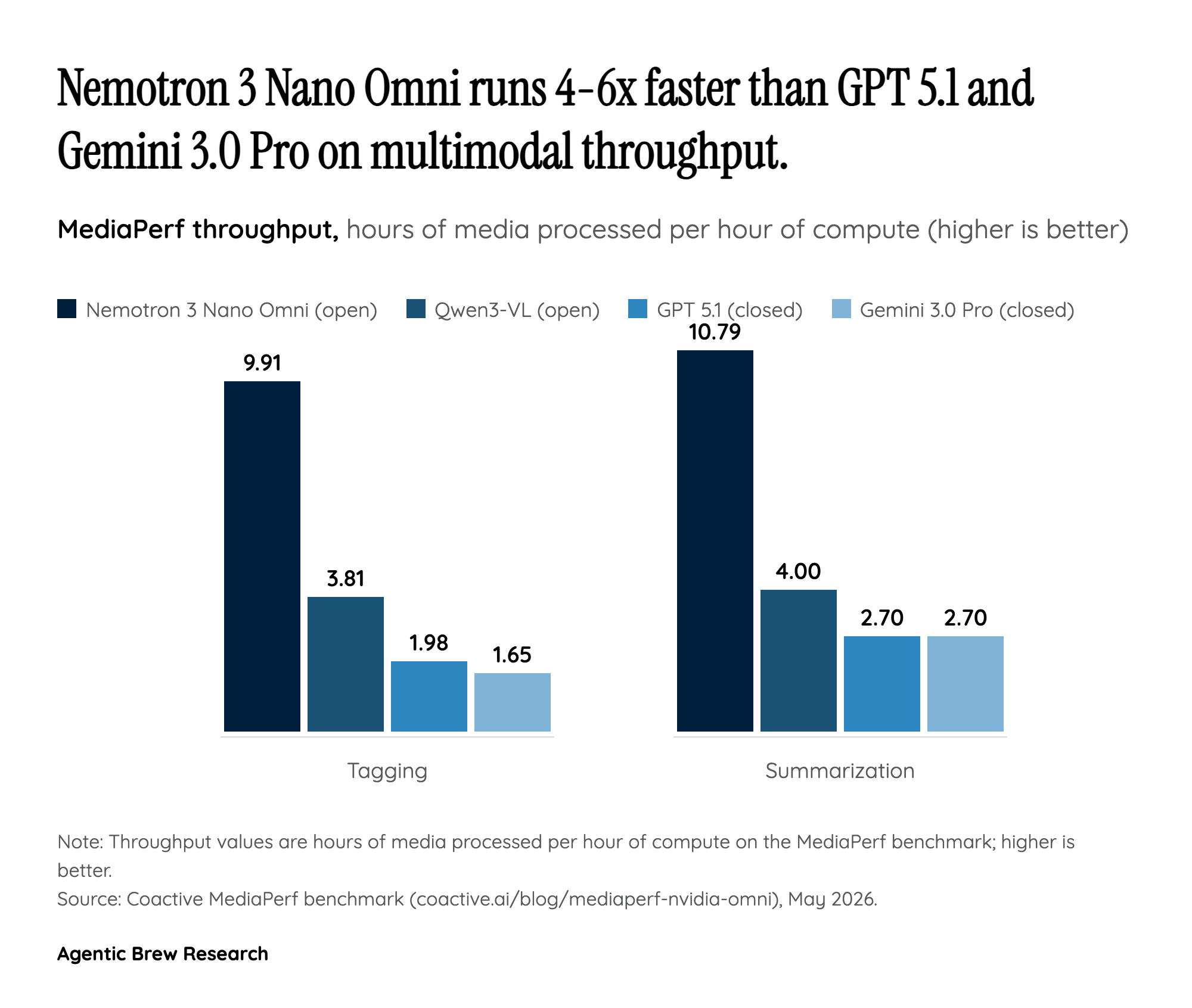

The most important number in the Nemotron 3 Nano Omni release is not a parameter count. It is the jump from 11.1 to 47.4 on OSWorld, the computer-use (GUI-agent) benchmark: a 4.3x improvement that reframes what computer-use agents can credibly attempt. H Company CEO Gautier Cloix frames it as a categorical change, not an incremental one, and ties it directly to speed: 'To build useful agents, you can’t wait seconds for a model to interpret a screen.' Until now, screen-aware agents have been bottlenecked by the round-trip cost of a vision-language model parsing each frame. Product teams had to choose between downsampling to thumbnails, which loses button-level precision, and accepting multi-second latency that breaks the interaction loop.
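To make the thumbnail-versus-latency trade-off concrete, here is a minimal sketch. It assumes a ViT-style vision encoder that cuts each frame into square 16-pixel patches and a hypothetical 24-pixel icon button. The patch size is an illustrative value, not Nemotron 3 Nano Omni's documented configuration, and real encoders often tile or merge patches, so treat the counts as rough. The sketch counts patches for a full-HD frame versus a 4x-downsampled thumbnail and shows how small the button becomes.

```python
import math

# Hypothetical encoder setting: square patches of 16 px.
# Illustrative only; not Omni's documented patch size.
PATCH_PX = 16


def patch_count(width: int, height: int, patch_px: int = PATCH_PX) -> int:
    """Patches per frame (roughly, visual tokens before any token merging)."""
    return math.ceil(width / patch_px) * math.ceil(height / patch_px)


def size_after_downsample(size_px: float, src_width: int, dst_width: int) -> float:
    """On-screen size of a UI element after resizing the frame to dst_width."""
    return size_px * dst_width / src_width


full_hd = patch_count(1920, 1080)   # 120 x 68 patches
thumbnail = patch_count(480, 270)   # 30 x 17 patches

# A hypothetical 24 px button in the full-HD frame, after a 4x downsample.
button_in_thumb = size_after_downsample(24, 1920, 480)

print(f"full HD: {full_hd} patches, thumbnail: {thumbnail} patches")
print(f"24 px button shrinks to {button_in_thumb:.1f} px, vs a {PATCH_PX} px patch")
```

Under these assumptions the full-HD frame costs about 16x more visual tokens (8160 vs 510), while the thumbnail shrinks the button to 6 px, well under the width of a single patch. That is the precision loss the paragraph describes.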

Omni's claim is that interpreting full-HD screen recordings in real time is finally tractable on a single open model with 3B active parameters. That economic argument matters more than any single benchmark. The per-token compute of a perception loop scales roughly linearly with active parameters, not total parameters, so a 30B-A3B MoE can approach the throughput of a 3B dense model and serve far more concurrent agent sessions per GPU than a dense model of the same total size. Memory is the caveat: all 30B weights must still stay resident. If H Company's deployment generalizes, the gating constraint on browser and computer-use agents shifts from raw model capability to scaffolding, memory, and error recovery: engineering problems, not research ones.
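The active-versus-total distinction can be checked with back-of-the-envelope arithmetic. The sketch below uses the common rule of thumb of about 2 FLOPs per active parameter per token for a forward pass (ignoring attention over long contexts) and counts weight memory at 2 bytes per parameter (BF16). The round 30B/3B figures follow the "30B-A3B" label above. `ModelConfig` and its methods are hypothetical helpers, not part of any library.

```python
from dataclasses import dataclass

B = 1e9  # one billion parameters


@dataclass
class ModelConfig:
    name: str
    total_params: float   # parameters that must be resident in GPU memory
    active_params: float  # parameters actually used for each token

    def forward_flops_per_token(self) -> float:
        # Rule of thumb: ~2 FLOPs per active parameter per token,
        # ignoring attention-over-context and routing overhead.
        return 2 * self.active_params

    def weight_memory_gb(self, bytes_per_param: float = 2.0) -> float:
        # Weights only (BF16 by default); excludes KV cache and activations.
        return self.total_params * bytes_per_param / 1e9


models = [
    ModelConfig("30B dense", 30 * B, 30 * B),
    ModelConfig("30B-A3B MoE", 30 * B, 3 * B),
    ModelConfig("3B dense", 3 * B, 3 * B),
]

for m in models:
    print(f"{m.name:12s} {m.forward_flops_per_token() / 1e9:6.1f} GFLOPs/token "
          f"{m.weight_memory_gb():6.1f} GB weights (BF16)")
```

The MoE matches the 3B dense model on per-token compute (6 vs 60 GFLOPs for the 30B dense model) but needs the same 60 GB of weights as the 30B dense model. So "throughput like a 3B model" is best read as an upper bound: memory bandwidth, expert routing, and KV-cache growth all eat into it in practice.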