A Roblox Cheat, a Stealer, and an OAuth Token: The Improbable Kill Chain

The most striking part of this incident isn't the $2 million ransom listing on BreachForums — it's how cheaply the attack started. In February 2026, according to Hudson Rock's forensic write-up surfaced by Infostealers.com, a Context.ai employee with sensitive access downloaded Roblox 'auto-farm' scripts onto a corporate laptop. The bundled Lumma infostealer did exactly what Lumma is built to do: it waited for real human mouse movement to evade sandbox detection, called Windows APIs directly to dodge EDR hooks, and then exfiltrated a carefully curated bundle of corporate secrets — Google Workspace cookies, Supabase keys, Datadog credentials, and Authkit tokens — in one pass.

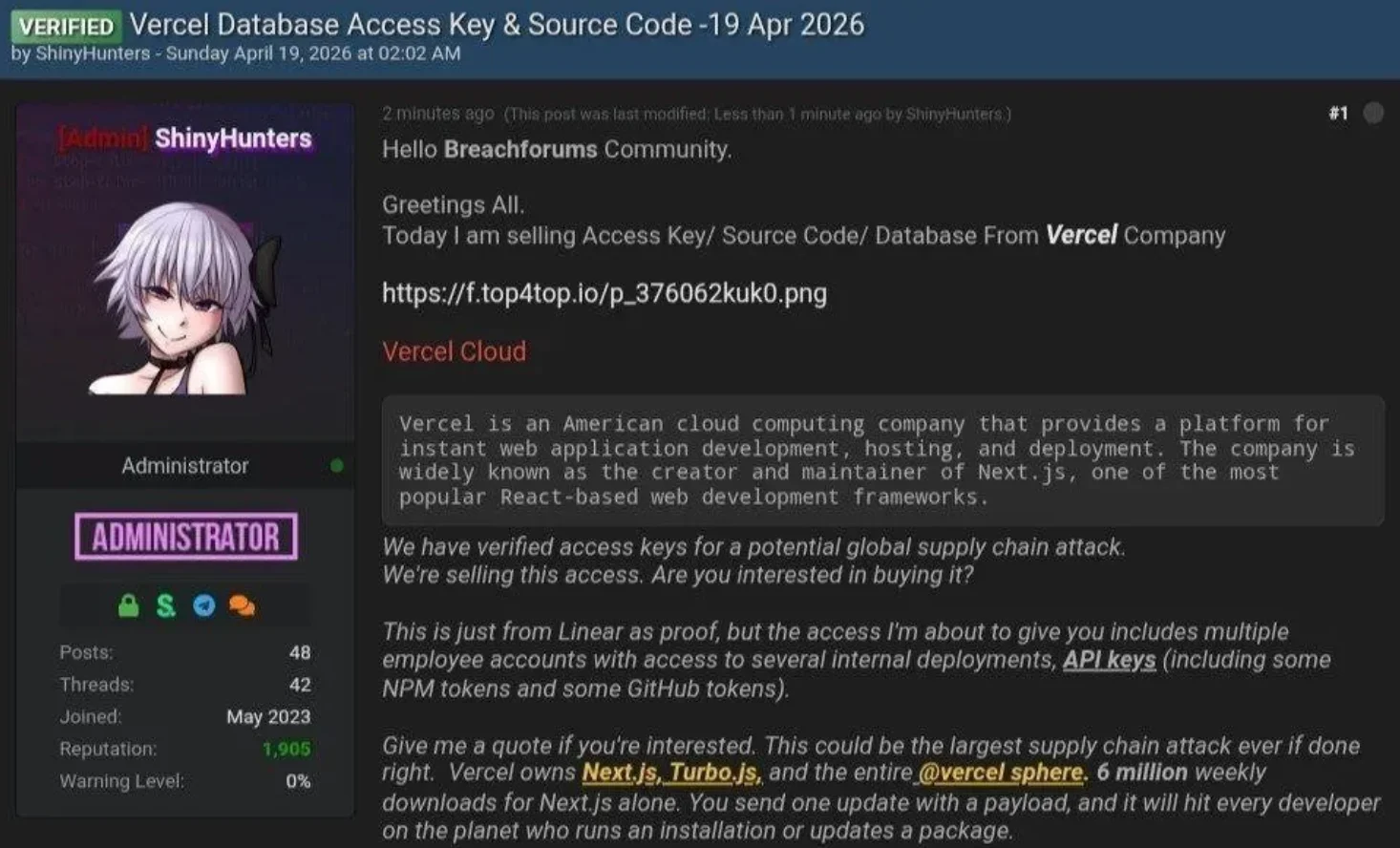

From there, the pivot is a two-hop story of the kind SaaS security practitioners have been warning about for three years. In March, the attackers used stolen Context.ai credentials to reach Context.ai's AWS environment. They then leaned on the fact that Context.ai's AI Office Suite had been connected to a Vercel employee's Google Workspace via OAuth with broad consent. That OAuth trust relationship, explicitly granted by an employee and involving no malware on any Vercel machine, became the bridge into Vercel's internal environments, including Linear, GitHub, and the NPM/env-var surface. This is why Guillermo Rauch's public line that the attackers were 'significantly accelerated by AI' lands awkwardly in developer threads: the decisive step wasn't an AI exploit, it was a game cheat on a vendor laptop that let attackers cash in a standing OAuth grant.
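
To make the "standing OAuth grant" risk concrete, here is a minimal sketch of the kind of audit that would surface such a grant before an attacker does. It is an illustration under stated assumptions, not a description of anything Vercel or Context.ai ran: the `OAuthGrant` record, the `broad_grants` helper, the sample users, and the choice of which Google scopes count as "broad" are all hypothetical. In practice the grant records would come from whatever token inventory your identity provider exposes.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Tuple

# Assumption: these Google scopes are treated as "broad" for illustration only.
# A real policy would be tuned to the organization's own risk tolerance.
BROAD_SCOPES: FrozenSet[str] = frozenset({
    "https://mail.google.com/",                # full Gmail access
    "https://www.googleapis.com/auth/drive",   # full Drive access
})


@dataclass(frozen=True)
class OAuthGrant:
    """Hypothetical record of one third-party app authorized by one user."""
    user: str
    app_name: str
    scopes: Tuple[str, ...]


def broad_grants(
    grants: Iterable[OAuthGrant],
    allowlist: FrozenSet[str] = frozenset(),
) -> List[Tuple[OAuthGrant, List[str]]]:
    """Return (grant, risky_scopes) for every non-allowlisted app holding a broad scope."""
    findings = []
    for grant in grants:
        if grant.app_name in allowlist:
            continue  # app has been explicitly reviewed and approved
        risky = sorted(set(grant.scopes) & BROAD_SCOPES)
        if risky:
            findings.append((grant, risky))
    return findings


if __name__ == "__main__":
    # Made-up sample data; the names and scopes are placeholders.
    sample = [
        OAuthGrant("alice@example.com", "Vendor AI Suite",
                   ("https://www.googleapis.com/auth/drive", "openid")),
        OAuthGrant("bob@example.com", "Calendar Helper",
                   ("https://www.googleapis.com/auth/calendar.readonly",)),
    ]
    for grant, risky in broad_grants(sample):
        print(f"{grant.user} -> {grant.app_name}: {', '.join(risky)}")
```

The point of the sketch is the shape of the check rather than the specific scope list: every grant flagged this way stays usable by whoever holds the vendor's credentials, whether or not anyone at the granting company is compromised.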