The Error Rate Chasm: Why AI May Be Quantum Computing's Only Viable Bridge

The most underappreciated number in the Ising announcement is the gap between where quantum hardware stands and where it needs to be. Today’s best quantum processors make roughly one error every 1,000 operations. Useful quantum applications, such as drug discovery, cryptography, and complex optimization, require error rates of approximately one in a trillion. That is a gap of nine orders of magnitude (from 10⁻³ to 10⁻¹²), and closing it through hardware improvements alone would likely take decades of iterative engineering on qubit coherence, gate fidelity, and environmental isolation.
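Computing the ratio directly shows how large the gap is. The Python below is a minimal sketch that uses only the two error rates quoted in this section. The helper name `orders_of_magnitude_gap` is illustrative.

```python
import math

# Error rates quoted in the text: about 1 error per 1,000 operations today,
# versus about 1 per trillion needed for useful applications.
current_error_rate = 1e-3
target_error_rate = 1e-12


def orders_of_magnitude_gap(current: float, target: float) -> float:
    """Return how many powers of ten separate two error rates."""
    return math.log10(current / target)


gap = orders_of_magnitude_gap(current_error_rate, target_error_rate)
print(f"Gap: {gap:.0f} orders of magnitude")
```

Each order of magnitude is a tenfold reduction in errors, so the target is about a billion times more reliable than today’s hardware.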

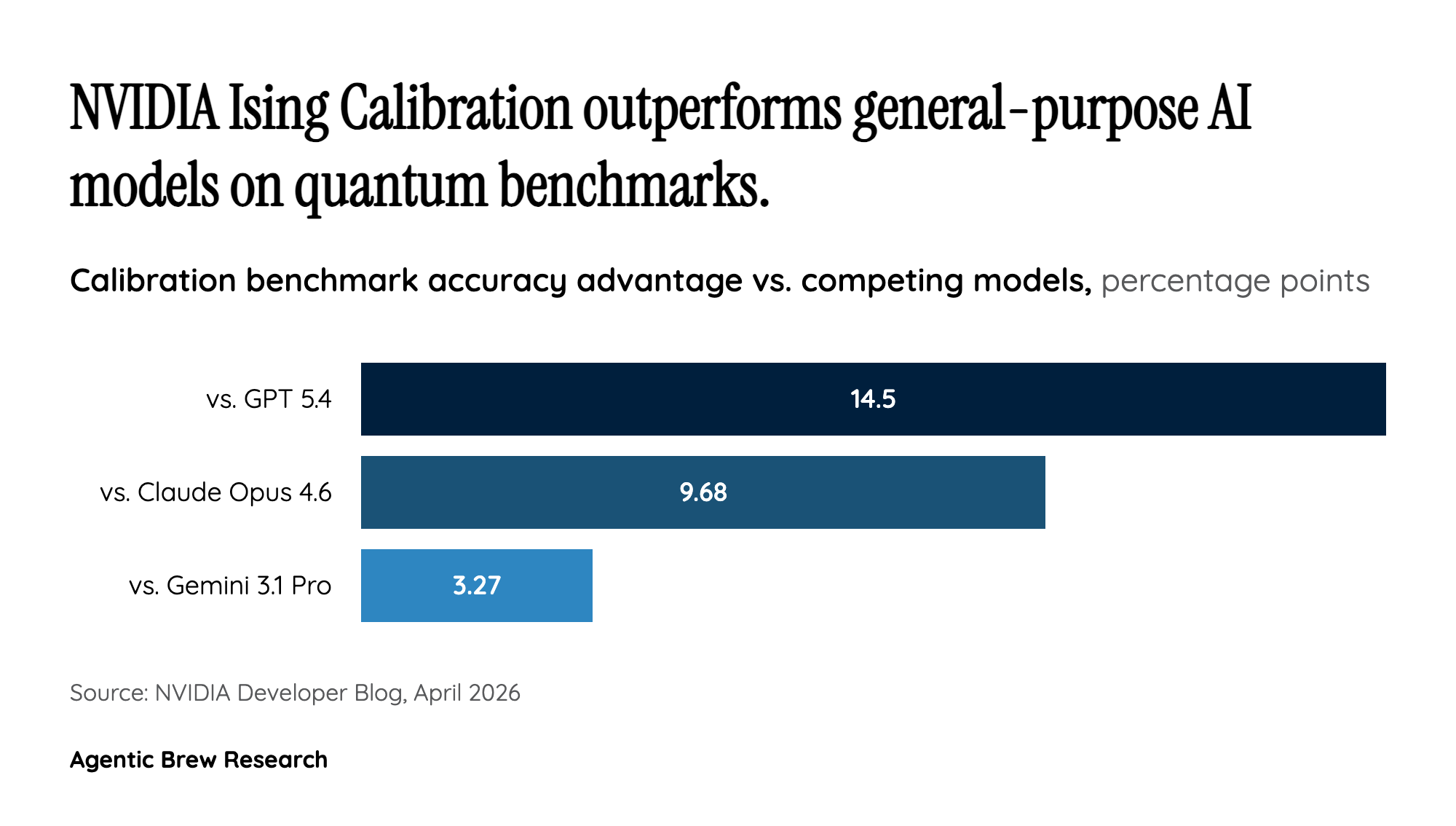

This is where Ising’s approach becomes significant. Rather than waiting for perfect qubits, NVIDIA is betting that AI-driven error correction can bridge the gap computationally. The Ising Decoding models are remarkably small (912K and 1.79M parameters), yet they achieve a 2.5x speedup and a 3x accuracy improvement over PyMatching, the existing standard decoder. Their projected latency of 2.33 microseconds per round, potentially dropping to 0.11 microseconds with 13 GB300 GPUs running FP8, approaches the real-time requirement for continuous error correction. That requirement is simple: the decoder must process each round of syndrome measurements at least as fast as the hardware produces them, or a backlog builds up and the computation stalls. If these numbers hold in production, AI-powered decoding could buy the quantum industry years of runway while hardware catches up.
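A simple backlog model shows why per-round latency is the number to watch. The Python below is a minimal illustration under stated assumptions, not NVIDIA’s benchmark methodology. It assumes a hypothetical syndrome round time of 1 µs (roughly the range reported for superconducting surface-code experiments, not a figure from the Ising announcement) and a single decoder that handles rounds strictly one after another. The names `SYNDROME_ROUND_US`, `keeps_up`, and `backlog_after` are illustrative.

```python
# Minimal backlog model for real-time QEC decoding (illustrative assumptions only).

# Hypothetical syndrome round time in microseconds; an assumption, not an Ising figure.
SYNDROME_ROUND_US = 1.0


def keeps_up(decode_us_per_round: float, round_us: float = SYNDROME_ROUND_US) -> bool:
    """True if a sequential decoder finishes each round before the next one arrives."""
    return decode_us_per_round <= round_us


def backlog_after(rounds: int, decode_us_per_round: float,
                  round_us: float = SYNDROME_ROUND_US) -> float:
    """Rounds still waiting to be decoded once `rounds` rounds have been measured.

    Assumes one decoder processing rounds strictly in order at a fixed rate.
    """
    elapsed_us = rounds * round_us
    decoded = min(rounds, elapsed_us / decode_us_per_round)
    return rounds - decoded


if __name__ == "__main__":
    # Latencies quoted in the text, in microseconds per round.
    for label, latency_us in [("projected", 2.33), ("13x GB300, FP8", 0.11)]:
        print(f"{label}: keeps up={keeps_up(latency_us)}, "
              f"backlog after 1M rounds={backlog_after(1_000_000, latency_us):,.0f}")
```

Under these assumptions the 2.33 µs figure falls steadily behind, while the 0.11 µs figure keeps pace with room to spare. Real decoders can pipeline rounds or split work across GPUs, as the 13-GPU configuration suggests, so this sequential model is only an illustration of the constraint, not a prediction of production behavior.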