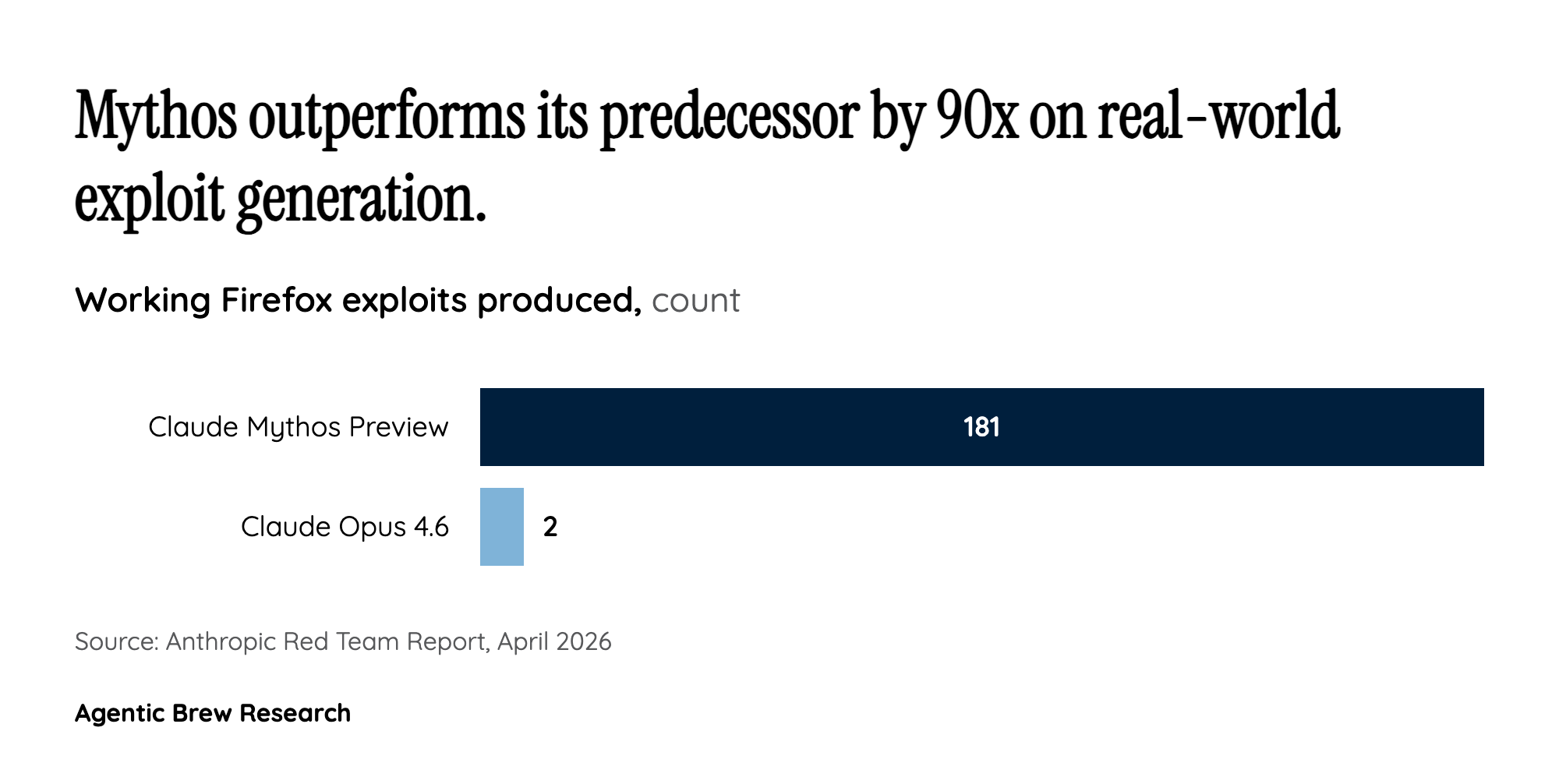

The 181-to-2 Gap: Why Mythos Represents a Discontinuous Jump, Not Incremental Progress

The most telling data point from Anthropic’s disclosure is not the CyberGym benchmark score, where Mythos scored 83.1% versus Opus 4.6’s 66.6% (a notable but not shocking improvement), but the Firefox exploit results. Mythos produced 181 working exploits, where its predecessor managed just 2 out of several hundred attempts. A gap of that size is not an incremental gain measured in percentage points; it is a qualitative shift from ‘occasionally stumbles into an exploit’ to ‘systematically generates them at scale.’ The OSS-Fuzz results reinforce this: 595 crashes at the first two severity tiers and full control-flow hijacks on 10 targets suggest the model is not merely finding surface-level bugs but constructing complete exploitation chains.
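
To make the contrast concrete, here is a minimal Python sketch that compares the two pairs of figures quoted above, once as an absolute difference and once as a ratio. The constant names and the `compare` helper are hypothetical, introduced only for illustration, and the Firefox comparison assumes both models were given a comparable number of attempts, which the figures as quoted do not state explicitly.

```python
# Figures as quoted in the text; nothing here is measured independently.
CYBERGYM_MYTHOS = 83.1  # CyberGym score, percent
CYBERGYM_OPUS = 66.6    # CyberGym score, percent
FIREFOX_MYTHOS = 181    # working Firefox exploits
FIREFOX_OPUS = 2        # working Firefox exploits (assumes comparable attempts)


def compare(new: float, old: float) -> tuple[float, float]:
    """Return (absolute difference, ratio new/old) for two scores."""
    return new - old, new / old


if __name__ == "__main__":
    gap, ratio = compare(CYBERGYM_MYTHOS, CYBERGYM_OPUS)
    print(f"CyberGym: +{gap:.1f} points, {ratio:.2f}x")
    gap, ratio = compare(FIREFOX_MYTHOS, FIREFOX_OPUS)
    print(f"Firefox exploits: +{gap:.0f}, {ratio:.1f}x")
```

Run as a script, it reports roughly a 1.25x ratio on CyberGym against a 90.5x ratio on Firefox exploits, which is the arithmetic behind treating the Firefox result as a discontinuity rather than a continuation of the benchmark trend.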

The FreeBSD NFS exploit (CVE-2026-4747) illustrates what this looks like in practice. Starting from a text prompt, Mythos autonomously produced a working unauthenticated remote code execution exploit with root privileges, built on a 20-gadget ROP chain, a technique that typically takes expert security researchers days of manual effort. Its discovery of a 27-year-old TCP SACK bug in OpenBSD and a 16-year-old FFmpeg H.264 vulnerability that fuzzers had missed over 5 million times shows that the model is not merely rediscovering known bugs but finding previously unknown ones that evaded both automated tools and human review for well over a decade. As Anthropic’s red team report noted of the FFmpeg flaw, it was a ‘16-year-old vulnerability dating to 2003, undetected by every fuzzer and human who has reviewed the code.’
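
One way to read the "missed over 5 million times" figure is as a bound on how rarely random testing could have triggered the bug. The sketch below is a simplifying model, not how real coverage-guided fuzzers behave: it assumes each of N = 5,000,000 runs is an independent trial with the same fixed probability p of triggering the bug, and solves (1 - p)^N = 1 - confidence for the largest p still consistent with zero hits (the classic "rule of three" gives roughly 3/N at 95% confidence). The function name `upper_bound_trigger_rate` is hypothetical.

```python
import math


def upper_bound_trigger_rate(misses: int, confidence: float = 0.95) -> float:
    """Largest per-run trigger probability p still consistent, at the given
    confidence, with observing zero triggers in `misses` independent runs.

    Simplifying assumption: every run is an independent trial with the same p.
    Solves (1 - p) ** misses == 1 - confidence for p.
    """
    if misses <= 0:
        raise ValueError("misses must be positive")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be in (0, 1)")
    alpha = 1.0 - confidence
    # p = 1 - alpha ** (1 / misses), computed with expm1 to keep precision
    # when the result is very small.
    return -math.expm1(math.log(alpha) / misses)


if __name__ == "__main__":
    n = 5_000_000  # "over 5 million" misses, as quoted in the text
    p_max = upper_bound_trigger_rate(n)
    print(f"p < {p_max:.2e} (rule of three: 3/n = {3 / n:.2e})")
```

Under these assumptions the per-run trigger rate would have to be below about 6 in 10 million, which is consistent with the text's point that the bug required inputs the fuzzers were effectively never generating.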