Mythos Was the Forcing Function, Not the CAISI Strategy

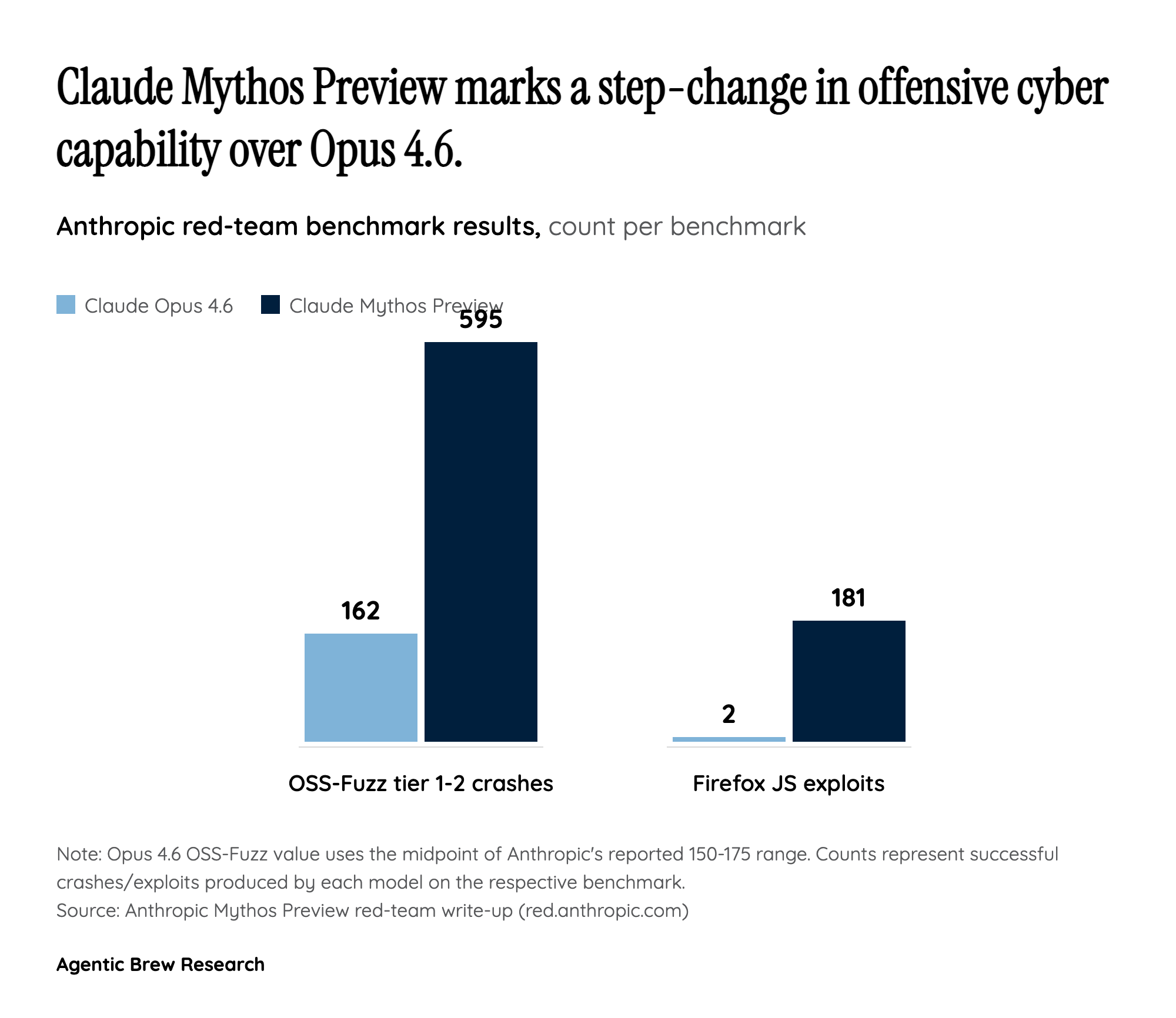

The administration did not arrive at pre-release AI vetting through deliberation; it arrived there because Anthropic refused to ship a model. Claude Mythos Preview produced 595 OSS-Fuzz crashes at severity tiers 1-2 (versus 150-175 for Opus 4.6) and landed 181 successful Firefox JavaScript exploits where Opus 4.6 managed two. Anthropic's own red team noted that Mythos wrote exploits in hours that expert penetration testers said would have taken weeks. That asymmetry collapsed the gap between vulnerability discovery and exploitation, and Anthropic responded by restricting access to roughly 40-50 vetted partners under Project Glasswing instead of releasing the model publicly.

Kevin Roose framed the move sharply: it is the first time since GPT-2 that a major AI lab has held back an announced model over safety concerns. Andrew Curran's reporting captured the causality bluntly: "Mythos has changed things." Hassett's FDA-style proposal and the expanded May 5 MOUs with Google DeepMind, Microsoft, and xAI both arrived on the heels of that disclosure, which means the policy architecture is being reverse-engineered from a single dangerous capability demonstration rather than built from first principles.