The Model Anthropic Won't Sell You

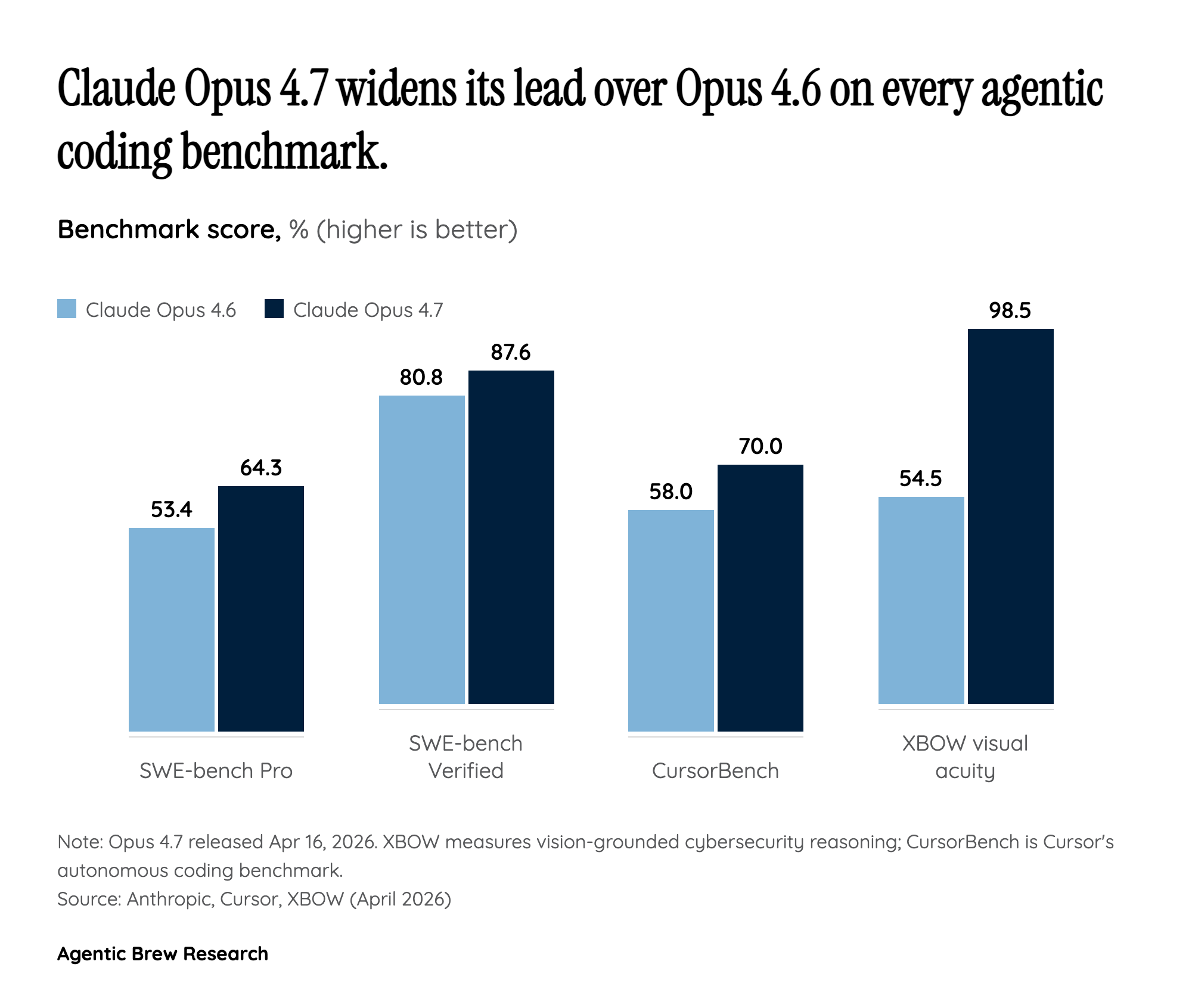

The strangest thing about Opus 4.7 is how insistently Anthropic refuses to call it the best model it has built. Every official surface — the product page, the news post, even partner announcements — reiterates that 4.7 trails Claude Mythos Preview, a more capable model that is not generally available. Mythos is restricted to a handpicked group of cybersecurity organizations under a separate program, Project Glasswing, and evaluators including the UK AI Security Institute have stress-tested its multi-step cyberattack behavior. The company's own line is that what it learns from 4.7's real-world safeguards will 'help us work towards our eventual goal of a broad release of Mythos-class models.'

That framing converts a routine point release into a product-strategy signal. Gizmodo's read, that the 4.7 launch functions as a promotion for Mythos, is sharp but incomplete. The deeper pattern is a two-tier enterprise architecture: a broadly available frontier tier that any developer can reach through Bedrock, Vertex AI, or Foundry, and a restricted, safety-gated tier above it that enterprises will eventually buy access to, presumably at premium pricing and with compliance paperwork attached. Opus 4.7 is simultaneously a shipping product and a public beta for the safeguards that will gate Mythos's commercial rollout. Every request routed through 4.7's automated cyber-abuse detection is, in effect, a data point that will shape what Mythos's enterprise terms of service look like.