The Rebrand Is a Tell: The AI Buyer Just Changed

Vertex AI was a 2021-vintage MLOps platform — a tool ML engineers chose to train, deploy, and monitor models. Gemini Enterprise Agent Platform is a 2026 control plane — a tool a CISO and a CFO must approve before a single agent reaches production. The pillar names give the game away: Build is one of four, and the other three (Scale, Govern, Optimize) are entirely about runtime risk and unit economics. Google is no longer selling to the data science org; it is selling to the people who say no to the data science org. Forrester's Devin Dickerson reads the change as roughly six parts taxonomy reorganization and four parts a chance to bolt on enterprise features, which is a polite way of saying the product surface had to be re-skinned to fit the new buyer.

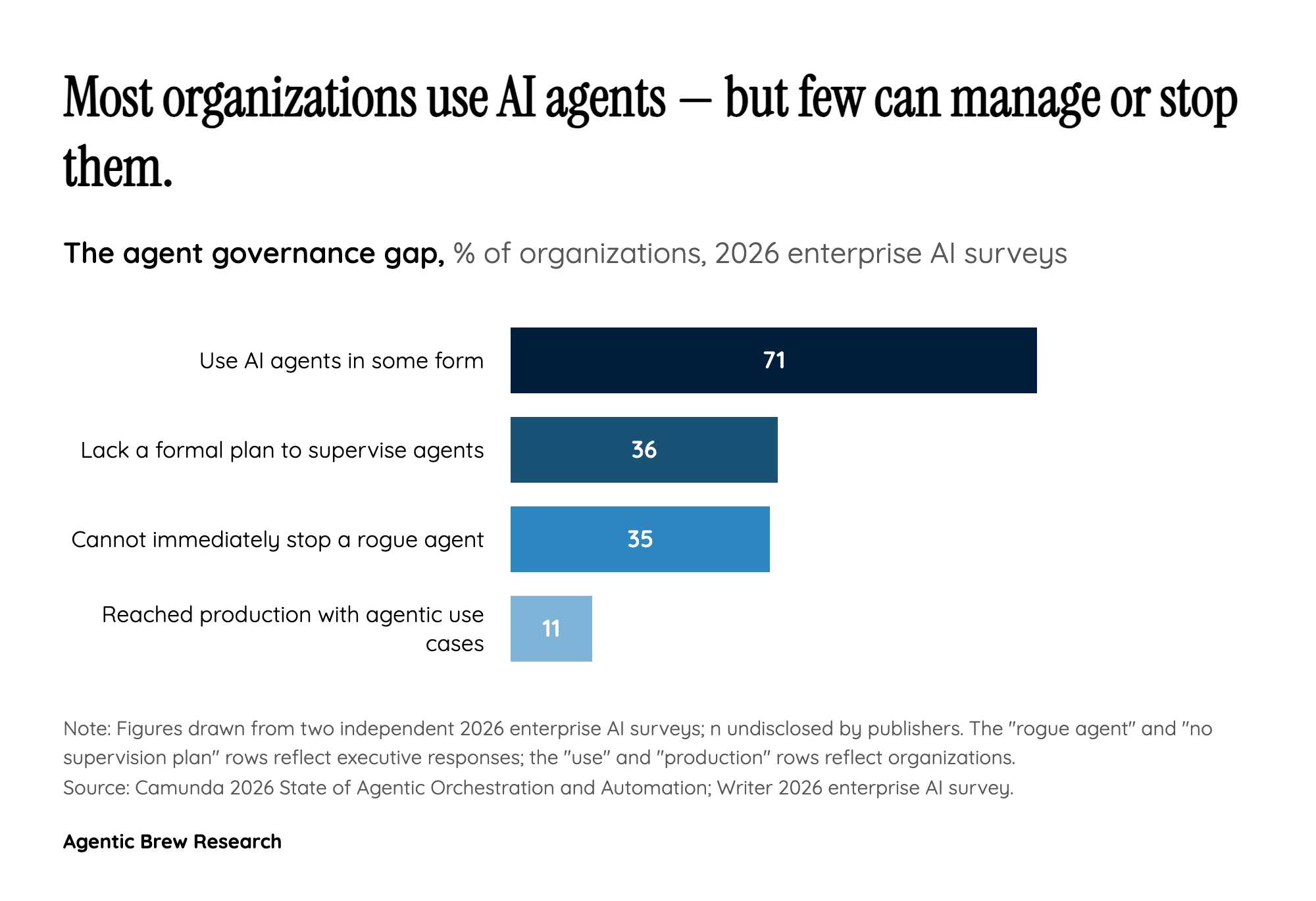

That reframing is also a quiet admission about what is blocking enterprise rollout. Independent surveys consistently show the same gap: 71% of organizations are using AI agents, but only 11% have reached production in the past year; 36% of executives have no formal plan for supervising agents; and 35% cannot immediately stop a rogue agent. Gartner now expects more than 40% of agentic AI projects to be canceled by the end of 2027. The platform's shape (Agent Identity issuing cryptographic IDs, Agent Gateway acting as 'air traffic control' with Model Armor protections, Agent Anomaly Detection flagging reverse shells and connections to known-malicious IP addresses) is precisely the checklist a security review team writes when an experiment tries to ship. Google has effectively turned the governance objection list into a product.
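To make that checklist concrete, here is a minimal Python sketch of three of those controls meeting at a single chokepoint. It combines a verifiable agent identity, a kill switch (the capability 35% of organizations say they lack), and a denylist check on outbound connections. Everything here is hypothetical. `AgentGateway`, `issue_id`, `verify_id`, `halt` and `authorize` are illustrative names, not Agent Identity or Agent Gateway APIs. The HMAC token is also a deliberately simplified stand-in for real cryptographic workload identity.

```python
import hashlib
import hmac
import ipaddress
from dataclasses import dataclass, field
from typing import List, Optional, Set, Tuple

# Hypothetical sketch: none of these names correspond to Google Cloud APIs.


@dataclass
class AgentGateway:
    # Stand-in for an identity issuer's key material (assumption: shared-secret HMAC).
    signing_key: bytes
    # Stand-in for a threat-intel denylist of networks agents must never reach.
    blocked_networks: List[ipaddress.IPv4Network] = field(default_factory=list)
    # Kill-switch state: agents in this set are refused regardless of identity.
    halted_agents: Set[str] = field(default_factory=set)

    def _sign(self, agent_name: str) -> str:
        return hmac.new(self.signing_key, agent_name.encode(), hashlib.sha256).hexdigest()

    def issue_id(self, agent_name: str) -> str:
        """Issue a signed identity token of the form '<name>.<hex signature>'."""
        return f"{agent_name}.{self._sign(agent_name)}"

    def verify_id(self, token: str) -> Optional[str]:
        """Return the agent name if the signature checks out, else None."""
        name, _, sig = token.rpartition(".")
        if not name:
            return None
        # Constant-time comparison on bytes avoids timing leaks and non-ASCII errors.
        if hmac.compare_digest(sig.encode(), self._sign(name).encode()):
            return name
        return None

    def halt(self, agent_name: str) -> None:
        """Kill switch: block every future request from this agent."""
        self.halted_agents.add(agent_name)

    def authorize(self, token: str, dest_ip: str) -> Tuple[bool, str]:
        """Decide whether an agent may open a connection to dest_ip."""
        name = self.verify_id(token)
        if name is None:
            return False, "unknown identity"
        if name in self.halted_agents:
            return False, "agent halted"
        addr = ipaddress.ip_address(dest_ip)
        if any(addr in net for net in self.blocked_networks):
            return False, "anomaly: connection to blocked address"
        return True, "allowed"


if __name__ == "__main__":
    # Documentation-only address ranges (RFC 5737) used as example data.
    gw = AgentGateway(
        signing_key=b"demo-key",
        blocked_networks=[ipaddress.ip_network("203.0.113.0/24")],
    )
    token = gw.issue_id("invoice-agent")
    print(gw.authorize(token, "198.51.100.7"))
    print(gw.authorize(token, "203.0.113.9"))
    gw.halt("invoice-agent")
    print(gw.authorize(token, "198.51.100.7"))
```

The design point the sketch illustrates is the one the platform's shape implies. Identity, stop controls and anomaly checks only help if every agent call passes through one place that enforces all three. A production system would use short-lived certificates and behavioral baselines rather than a shared key and a static list.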