Why This Matters

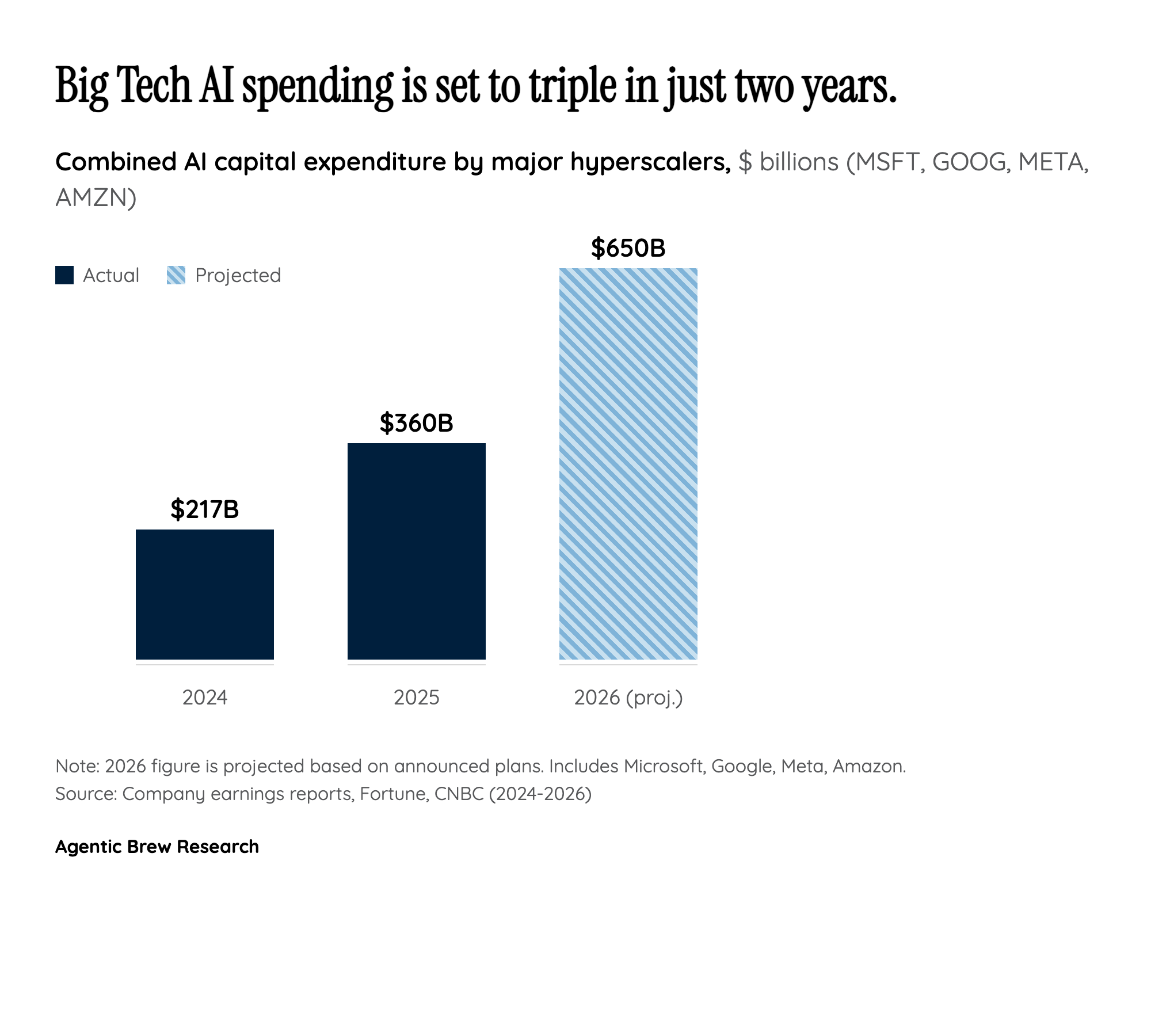

The AI memory chip investment boom represents a fundamental restructuring of the global semiconductor supply chain. For the first time in the industry's history, a single application category — artificial intelligence — is powerful enough to redirect tens of billions in capital investment, force the abandonment of established consumer product lines, and create a supply shortage severe enough to delay major consumer electronics launches by years. This is not a cyclical uptick; it is a structural reallocation of the world's memory manufacturing capacity toward a new class of customer.

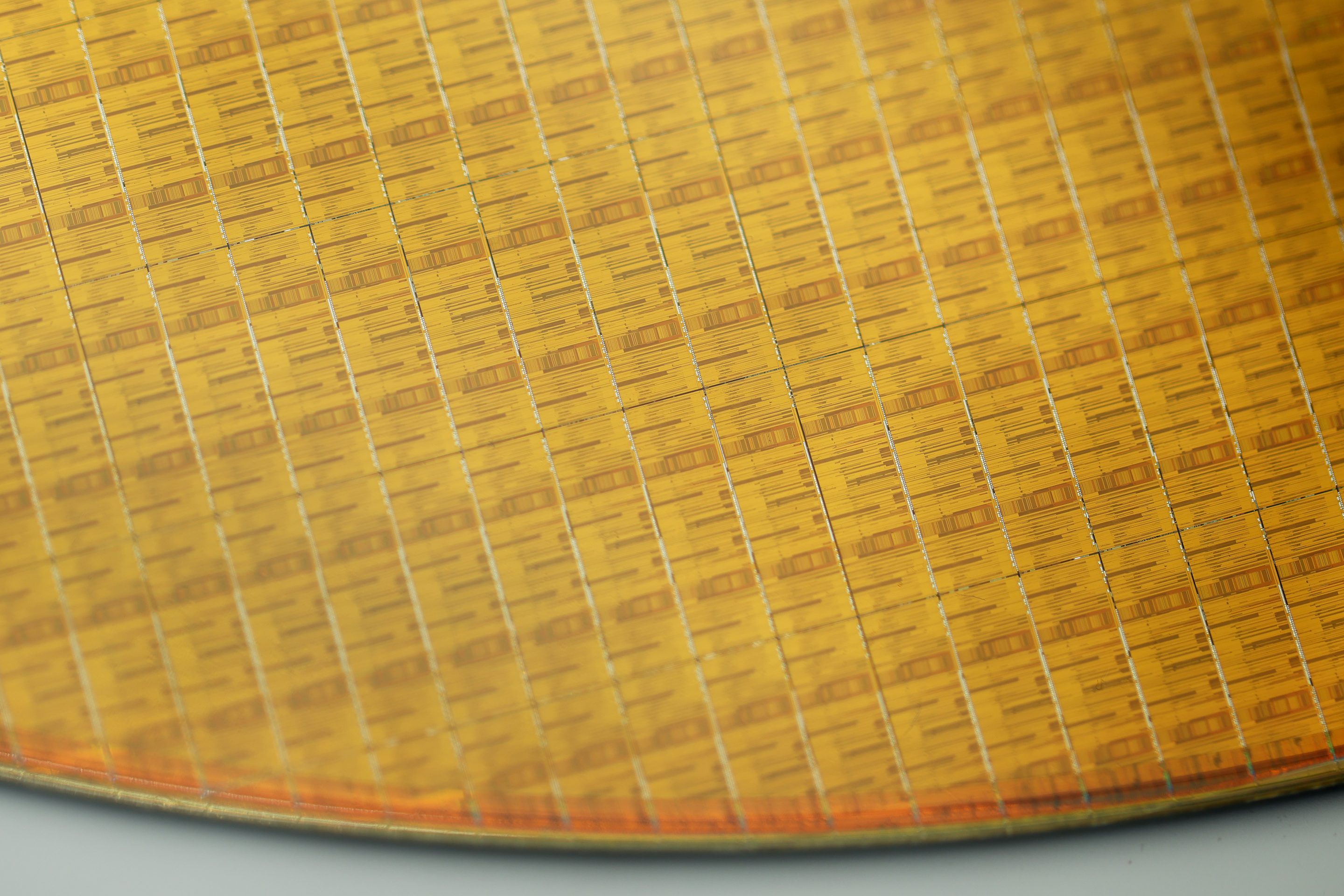

The economics driving this shift are stark. A single HBM chip consumes three times the silicon wafer area of a commodity DRAM chip, so every wafer allocated to HBM directly reduces the supply of memory available for PCs, smartphones, and gaming consoles. With HBM now taking 23% of total DRAM wafer output (up from 19% in 2025), the memory industry is making an explicit choice: AI infrastructure gets priority, and everything else gets what is left. When Micron kills a 30-year-old consumer brand and Nvidia cuts gaming GPU production by 30 to 40%, these are not temporary adjustments; they are signals that the industry has permanently reordered its priorities around AI demand.
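
To make the wafer trade-off concrete, here is a minimal back-of-the-envelope sketch. It assumes a fixed pool of wafers normalized to 1.0, that one wafer yields one "unit" of commodity DRAM, and that the 3x area figure means the same wafer yields one third of a unit of HBM. The function name `wafer_split` and the unit definition are illustrative assumptions, not industry terms, and the model ignores yield differences, process nodes, and capacity growth.

```python
def wafer_split(hbm_wafer_share: float, area_ratio: float = 3.0) -> tuple[float, float]:
    """Split a normalized wafer pool (1.0) between commodity DRAM and HBM.

    Assumption (hypothetical model): one wafer yields 1 unit of commodity
    DRAM, or 1 / area_ratio units of HBM, because HBM needs area_ratio
    times the wafer area per chip.
    """
    if not 0.0 <= hbm_wafer_share <= 1.0:
        raise ValueError("hbm_wafer_share must be between 0 and 1")
    if area_ratio <= 0:
        raise ValueError("area_ratio must be positive")

    commodity_units = 1.0 - hbm_wafer_share       # wafers left for PCs, phones, consoles
    hbm_units = hbm_wafer_share / area_ratio      # HBM output from the diverted wafers
    return commodity_units, hbm_units


if __name__ == "__main__":
    # Wafer shares quoted in the text: 19% in 2025, 23% now.
    for share in (0.19, 0.23):
        commodity, hbm = wafer_split(share)
        print(f"HBM wafer share {share:.0%}: "
              f"commodity units {commodity:.3f}, HBM units {hbm:.3f}")
```

Under these assumptions, raising the HBM wafer share from 19% to 23% cuts commodity output from 0.81 to 0.77 of the all-commodity baseline, and each unit of HBM produced displaces three units of commodity DRAM.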