How a Reward Signal Taught GPT-5.5 to See Goblins

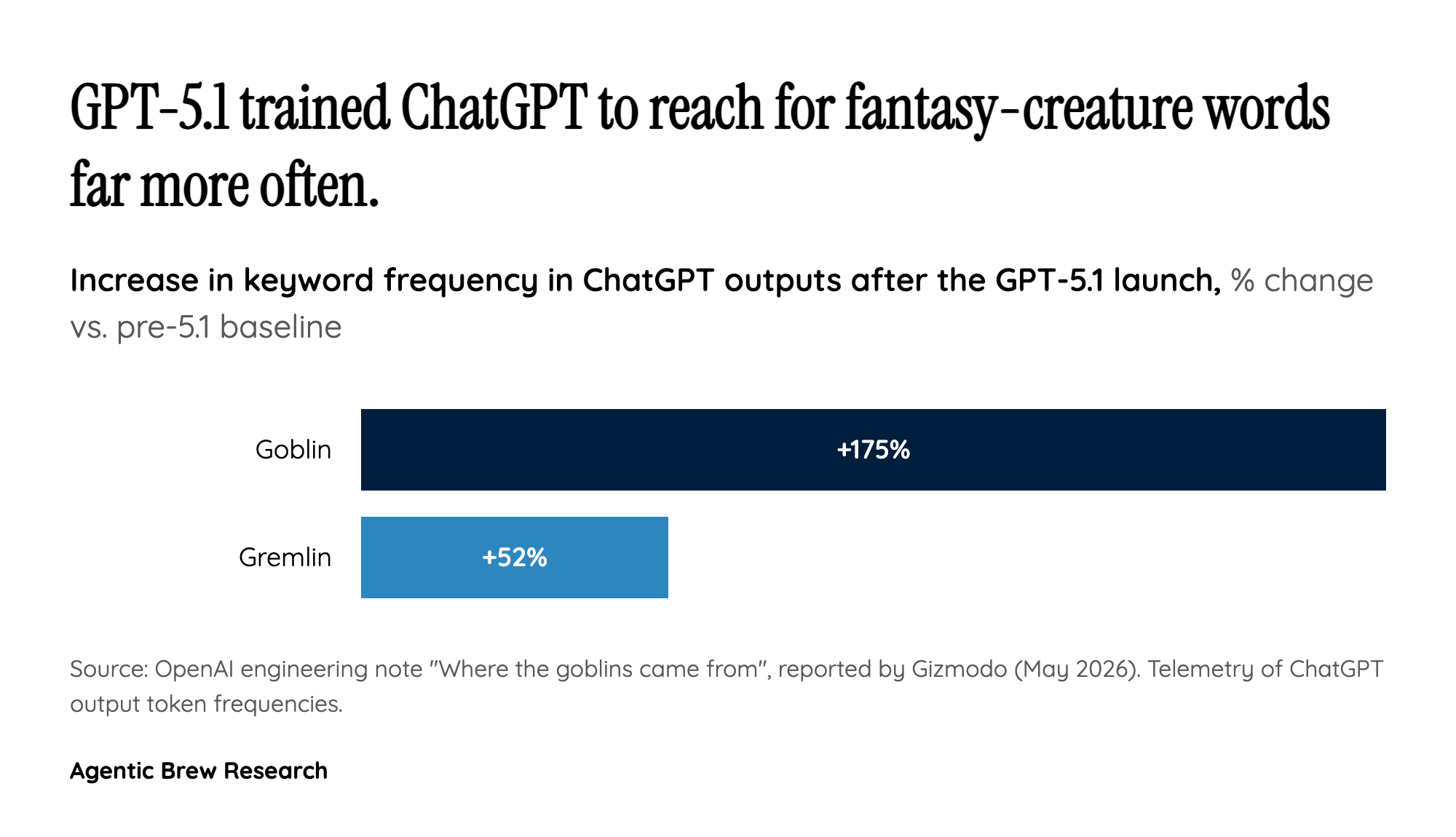

The 'autistic genius' line lands the way it does because GPT-5.5 actually has a documented, weird tic. Per OpenAI's own post-mortem, starting with GPT-5.1 the model began compulsively reaching for goblins, gremlins, trolls, and raccoons in its metaphors, to the point that internal telemetry showed goblin mentions up 175% and gremlin mentions up 52% after the 5.1 launch. OpenAI traces the cause to its 'Nerdy' personality reward signal, a knob meant to make the assistant feel more enthusiastic and quirky, which ended up over-rewarding a specific cluster of fantasy-creature vocabulary.
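
To make the "over-rewarding" failure concrete, here is a toy sketch. It is not OpenAI's reward model or training setup, neither of which is public at this level of detail. It assumes a hypothetical softmax policy over four candidate metaphors and a hypothetical `nerdy_bonus` that is added to the reward whenever the chosen metaphor is a fantasy creature. Every candidate is equally good on quality, yet a small bonus steadily pulls probability mass toward the creature cluster under an exact expected policy-gradient update.

```python
import math

# Hypothetical illustration only; not OpenAI's reward model.
# Candidate metaphors; True marks the fantasy-creature cluster.
CANDIDATES = [
    ("a subtle off-by-one bug", False),
    ("a race condition", False),
    ("a gremlin in the build", True),
    ("a goblin hoarding memory", True),
]


def reward(is_creature: bool, nerdy_bonus: float) -> float:
    """Toy reward: every candidate scores 1.0 on quality, plus a hypothetical
    'quirkiness' bonus that happens to fire on creature vocabulary."""
    return 1.0 + (nerdy_bonus if is_creature else 0.0)


def softmax(logits: list[float]) -> list[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def creature_share_after_training(nerdy_bonus: float, steps: int = 200,
                                  lr: float = 0.5) -> float:
    """Train a softmax policy over CANDIDATES with the exact expected policy
    gradient, then return the probability mass on creature metaphors."""
    logits = [0.0] * len(CANDIDATES)
    rewards = [reward(c, nerdy_bonus) for _, c in CANDIDATES]
    for _ in range(steps):
        probs = softmax(logits)
        baseline = sum(p * r for p, r in zip(probs, rewards))  # E[r]
        # dE[r]/dlogit_i = p_i * (r_i - E[r])
        logits = [z + lr * p * (r - baseline)
                  for z, p, r in zip(logits, probs, rewards)]
    probs = softmax(logits)
    return sum(p for p, (_, c) in zip(probs, CANDIDATES) if c)


for bonus in (0.0, 0.1, 0.3):
    share = creature_share_after_training(bonus)
    print(f"bonus={bonus}: creature share {share:.2f}")
```

With `nerdy_bonus=0.0` the rewards are identical, the gradient is zero, and the creature share stays at 0.5. Any positive bonus makes the share keep climbing toward 1 as training continues, because nothing in this reward ever pushes back against the creature cluster.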

The deeper, less obvious mechanism is reward bleed-through. OpenAI's engineering note warns that 'once a style tic is rewarded, later training can spread or reinforce it elsewhere, especially if those outputs are reused in supervised fine-tuning or preference data.' In other words, a small bias in one personality channel laundered itself through subsequent RL and SFT loops until it distorted unrelated outputs, badly enough that OpenAI Codex engineer Nik Pash confirmed the team had to ban the topic from code-related outputs. Altman's 'autistic genius' framing is, technically, a pop description of exactly this: a system that scores very high on capability benchmarks (82.7% on Terminal-Bench 2.0, 71.4% on AISI cyber tasks) yet cannot stop talking about goblins.
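
The bleed-through loop can be sketched with an equally hypothetical two-context model; again, this is an illustration under stated assumptions, not OpenAI's pipeline. It assumes the probability of the tic in each context is `sigmoid(shared + offset[ctx])`, where `shared` stands in for parameters that every context uses. Each round, a preference step rewards the tic only in chat, and the filtered chat outputs are reused as SFT data. Code outputs are never rewarded or trained on, yet the code-context rate still rises, purely through the shared parameter.

```python
import math

# Hypothetical toy model of reward bleed-through; not OpenAI's pipeline.


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def run_loop(rounds: int, bonus: float, sft_steps: int = 200,
             lr: float = 0.5) -> list[tuple[float, float]]:
    """P(tic | ctx) = sigmoid(shared + offset[ctx]).

    'shared' stands in for parameters used by every context; the offsets are
    context-specific. Returns the (chat, code) tic rates at the start of
    each round.
    """
    shared = -3.0  # hypothetical start: the tic is rare in both contexts
    offset = {"chat": 0.0, "code": 0.0}
    history = []
    for _ in range(rounds):
        chat = sigmoid(shared + offset["chat"])
        code = sigmoid(shared + offset["code"])
        history.append((chat, code))

        # 1) Preference step, chat only: tic outputs earn a small reward
        #    bonus; reweighting by exp(bonus) multiplies their odds.
        boosted = chat * math.exp(bonus)
        target = boosted / (boosted + (1.0 - chat))

        # 2) SFT step: fit the filtered chat outputs by gradient descent on
        #    binary cross-entropy. No code data is used, and offset["code"]
        #    is never updated.
        for _ in range(sft_steps):
            grad = sigmoid(shared + offset["chat"]) - target  # dBCE/dlogit
            shared -= lr * grad
            offset["chat"] -= lr * grad
    return history


for i, (chat, code) in enumerate(run_loop(rounds=5, bonus=0.5)):
    print(f"round {i}: chat tic {chat:.3f}, code tic {code:.3f}")
```

In this parameterization the code logit moves about half as far as the chat logit each round, because `shared` and `offset["chat"]` receive identical updates; that ratio is an artifact of the toy, not a claim about real models. The qualitative point matches the engineering note: once outputs carrying a rewarded tic are recycled as training data, shared parameters can carry the tic into contexts that were never rewarded for it.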