The agent-as-OS thesis: why OpenAI thinks it has to own the silicon

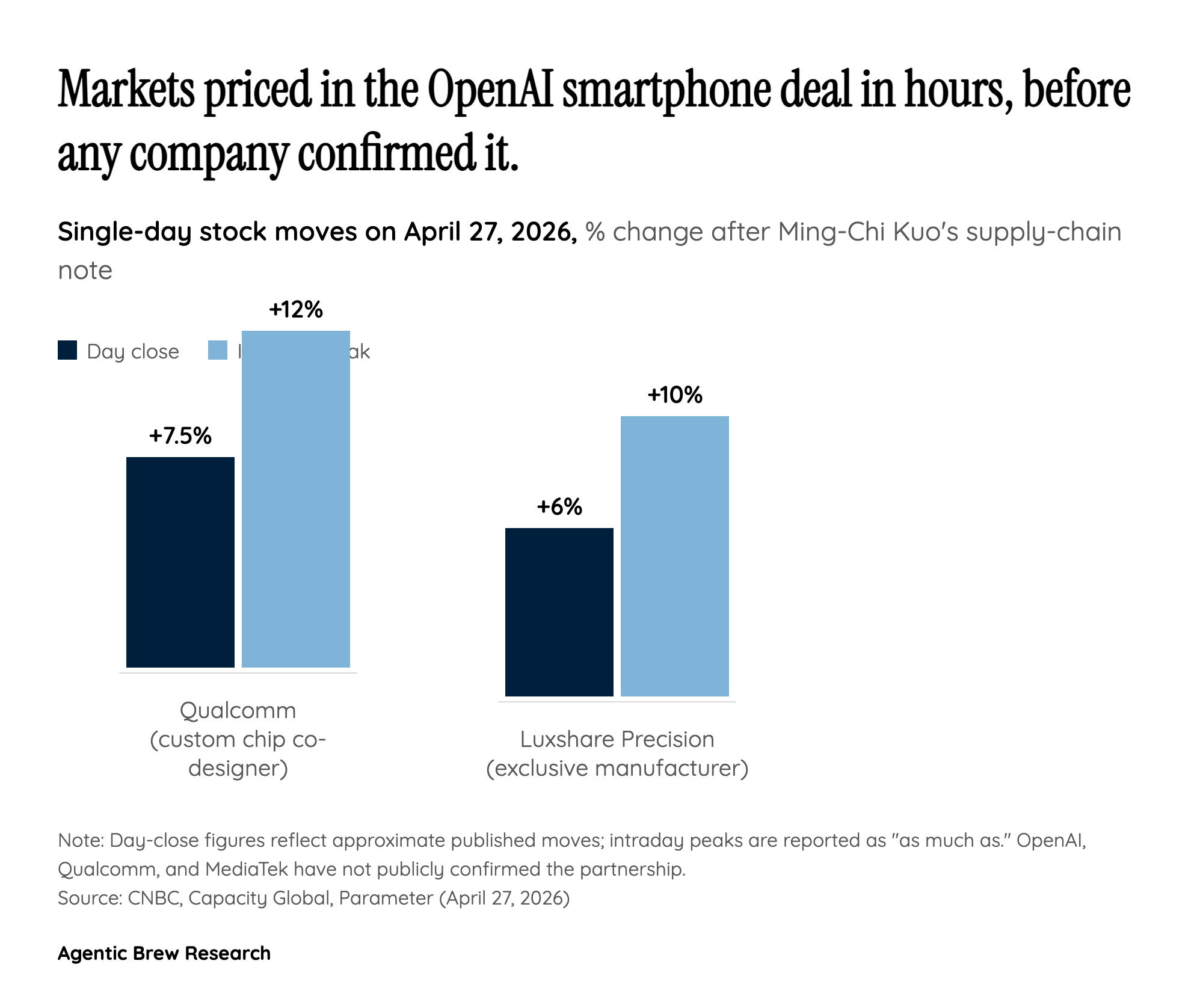

The core mechanical claim in Kuo's note is that an agent-first device cannot be built on top of iOS or Android. Per Kuo, the smartphone is 'the only device that captures a user's full real-time state, including location, activity, communication, and context', and capturing that state continuously requires permissions, background execution, and on-device inference that Apple's and Google's permission sandboxes deliberately constrain. Sam Altman makes the same argument from the other direction, calling this 'a good time to seriously rethink how operating systems and user interfaces are designed.' The architecture Kuo describes, a custom Qualcomm/MediaTek processor running 'a mix of on-device and cloud inference', is the physical embodiment of that thesis: small on-chip models handle ambient context capture, larger cloud models handle reasoning, and an OpenAI-controlled OS removes the gatekeeper that today decides which signals an agent can see.

This is also why Luxshare matters more than its assembly role suggests. It is the 'exclusive system co-design' partner, not just a manufacturer, which means silicon, sensors, and OS are being designed together as a single stack. If the agent is the interface and apps are obsolete, as TechCrunch and TheNextWeb both frame it, then the unit of optimization shifts from a screen full of icons to a continuous inference loop, and power, memory bandwidth, and sensor access all have to be tuned around that loop. That is a hardware problem rather than a software one, and it is the strategic justification for OpenAI accepting the execution risk of being a first-time OEM.