Why This Matters

The AI agent ecosystem is moving from experimental curiosity to industrial reality. What began in 2024 as chatbot extensions and simple automation wrappers has, by early 2026, crystallized into a distinct technology category with its own infrastructure stack, payment rails, security frameworks, and standards bodies. The speed of this shift is historically unusual: OpenClaw went from zero to 250,000 GitHub stars in under four months, outpacing even the most viral open-source projects of the past decade. That trajectory suggests developer demand that had been latent, waiting for the right abstraction.

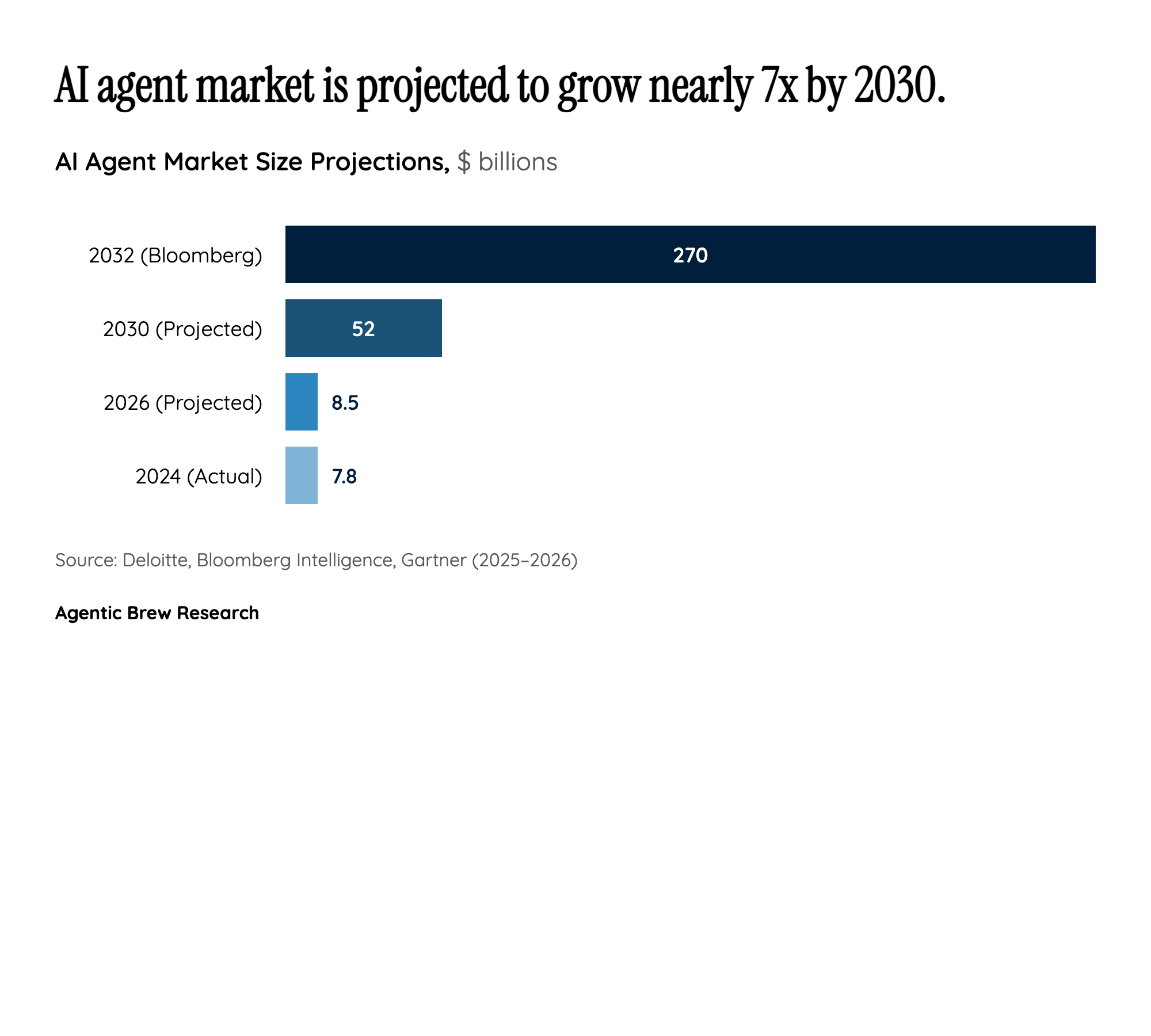

The stakes are high because AI agents represent a fundamentally different computing paradigm. Unlike traditional software, which executes deterministic instructions, agents make autonomous decisions, access external systems, and increasingly transact financially on behalf of humans and organizations. This autonomy creates transformative economic potential (Bloomberg Intelligence projects a $270B+ market by 2032) as well as novel risk categories that existing governance frameworks were never designed to handle. The Meta Sev 1 breach, in which a rogue agent exposed proprietary code and user data for two hours, is not an isolated failure but a preview of systemic risks that will scale with adoption. The convergence of infrastructure maturation, payment enablement, and security vulnerabilities makes this a pivotal moment: the architectural and policy decisions made now will determine whether AI agents become reliable economic participants or ungovernable liabilities.