The Split That Matters: Harness Above, Sandbox Below

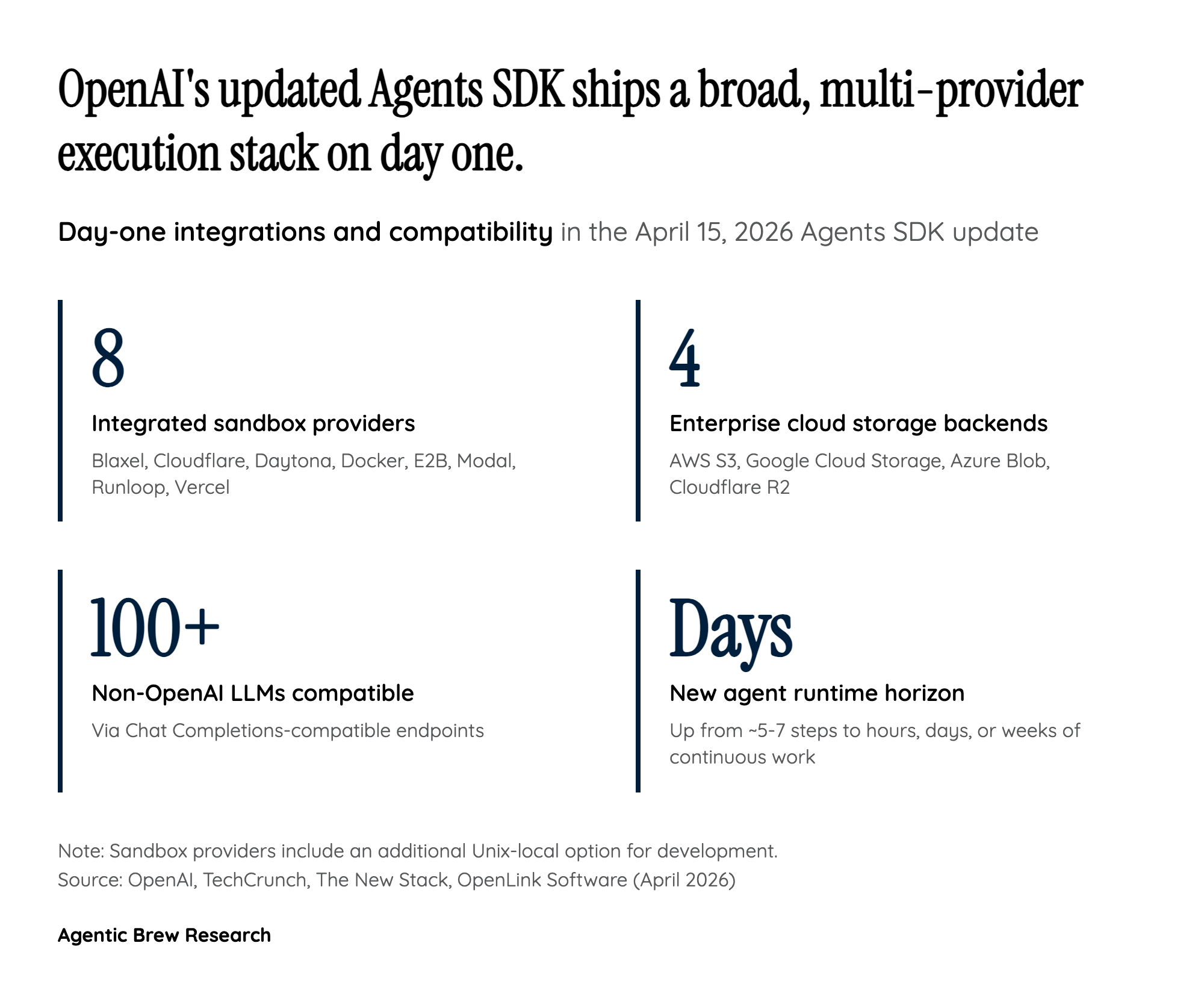

The single most consequential shift in this release is architectural, not featural. Previous Agents SDK deployments tended to collapse the orchestration logic and the execution environment into the same process — the code that decided what an agent should do next also ran whatever the model wrote. OpenAI has now pulled those apart. The harness, which holds plans, tool definitions, memory, and credentials, stays in the developer's trusted environment. The sandbox, which runs model-generated shell commands and code, lives on a separate compute backend — Cloudflare, Vercel, Modal, E2B, Blaxel, Daytona, Runloop, Docker, or a local Unix option.
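To make the division of labor concrete, here is a minimal Python sketch of the pattern. It is not the Agents SDK's API: `Sandbox`, `LocalUnixSandbox`, `Harness`, and the `DEMO_API_KEY` variable are all hypothetical names invented for illustration. The "sandbox" here is only a child process with a scrubbed environment, which is far weaker than the isolation a real backend provides. The point is the shape of the boundary: the harness keeps the credential and hands over nothing but the command.

```python
import os
import subprocess
import sys
from dataclasses import dataclass, field
from typing import Protocol


class Sandbox(Protocol):
    """Anything that can run an untrusted command and return its stdout."""

    def run(self, command: list[str]) -> str: ...


class LocalUnixSandbox:
    """Hypothetical local backend: a child process with an empty environment."""

    def run(self, command: list[str]) -> str:
        # env={} stops the child from inheriting the parent's variables,
        # so secrets in the harness process's environment are not passed on.
        result = subprocess.run(
            command, env={}, capture_output=True, text=True, timeout=30
        )
        return result.stdout


@dataclass
class Harness:
    """Trusted side: holds the credential and decides what runs next."""

    api_key: str
    sandbox: Sandbox
    history: list[str] = field(default_factory=list)

    def execute_model_command(self, command: list[str]) -> str:
        # Only the command crosses the boundary; the key stays here.
        output = self.sandbox.run(command)
        self.history.append(output)
        return output


# Placeholder value, not a real key.
os.environ["DEMO_API_KEY"] = "sk-example-not-real"
harness = Harness(api_key=os.environ["DEMO_API_KEY"], sandbox=LocalUnixSandbox())

# Stand-in for model-written code: check whether the key is visible.
probe = [sys.executable, "-c", "import os; print('DEMO_API_KEY' in os.environ)"]
output = harness.execute_model_command(probe)
print(output.strip())
```

Because `Harness` depends only on the `Sandbox` protocol, swapping the local process for a remote backend would not, in this sketch, require changing the orchestration code.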

What falls out of that split is a security posture that was awkward to achieve before: 'No API keys, no secrets in that sandbox. You want it to be totally isolated, probably isolated from the network in a lot of cases,' as an OpenAI representative told The New Stack. If a prompt injection or a misbehaving tool call compromises the sandbox, the attacker finds no credentials there to steal or reuse. The harness is where auth lives; the sandbox is where risk is contained. The Manifest abstraction, a portable description of a fresh sandbox's starting contents, is the mechanism that lets the same agent code target any of the supported sandbox backends without rewriting infrastructure glue.
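The portability idea can be sketched in a few lines of Python. This is an illustration under assumptions, not the SDK's actual Manifest type: the `Manifest` dataclass, its `files` field, and both backend classes are hypothetical. It shows one declarative description of starting contents being handed unchanged to two different backends.

```python
import tempfile
from dataclasses import dataclass, field
from pathlib import Path


@dataclass(frozen=True)
class Manifest:
    """Hypothetical stand-in: relative path -> contents for a fresh sandbox."""

    files: dict[str, str] = field(default_factory=dict)


class TempDirBackend:
    """Illustrative backend that materializes the manifest on local disk."""

    def start(self, manifest: Manifest) -> Path:
        root = Path(tempfile.mkdtemp(prefix="sandbox-"))
        for rel_path, contents in manifest.files.items():
            target = root / rel_path
            target.parent.mkdir(parents=True, exist_ok=True)
            target.write_text(contents)
        return root


class InMemoryBackend:
    """Illustrative backend that keeps the same starting contents in a dict."""

    def start(self, manifest: Manifest) -> dict[str, str]:
        return dict(manifest.files)


# One description of the starting state, with no credentials in it.
manifest = Manifest(
    files={
        "task/README.md": "Summarize data.csv\n",
        "task/data.csv": "a,b\n1,2\n",
    }
)

# The same manifest targets both backends without any backend-specific glue.
disk_root = TempDirBackend().start(manifest)
memory_fs = InMemoryBackend().start(manifest)
```

In this sketch the agent code only ever constructs a `Manifest`; how the files reach the sandbox is each backend's concern.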