AI Stops Asking Permission

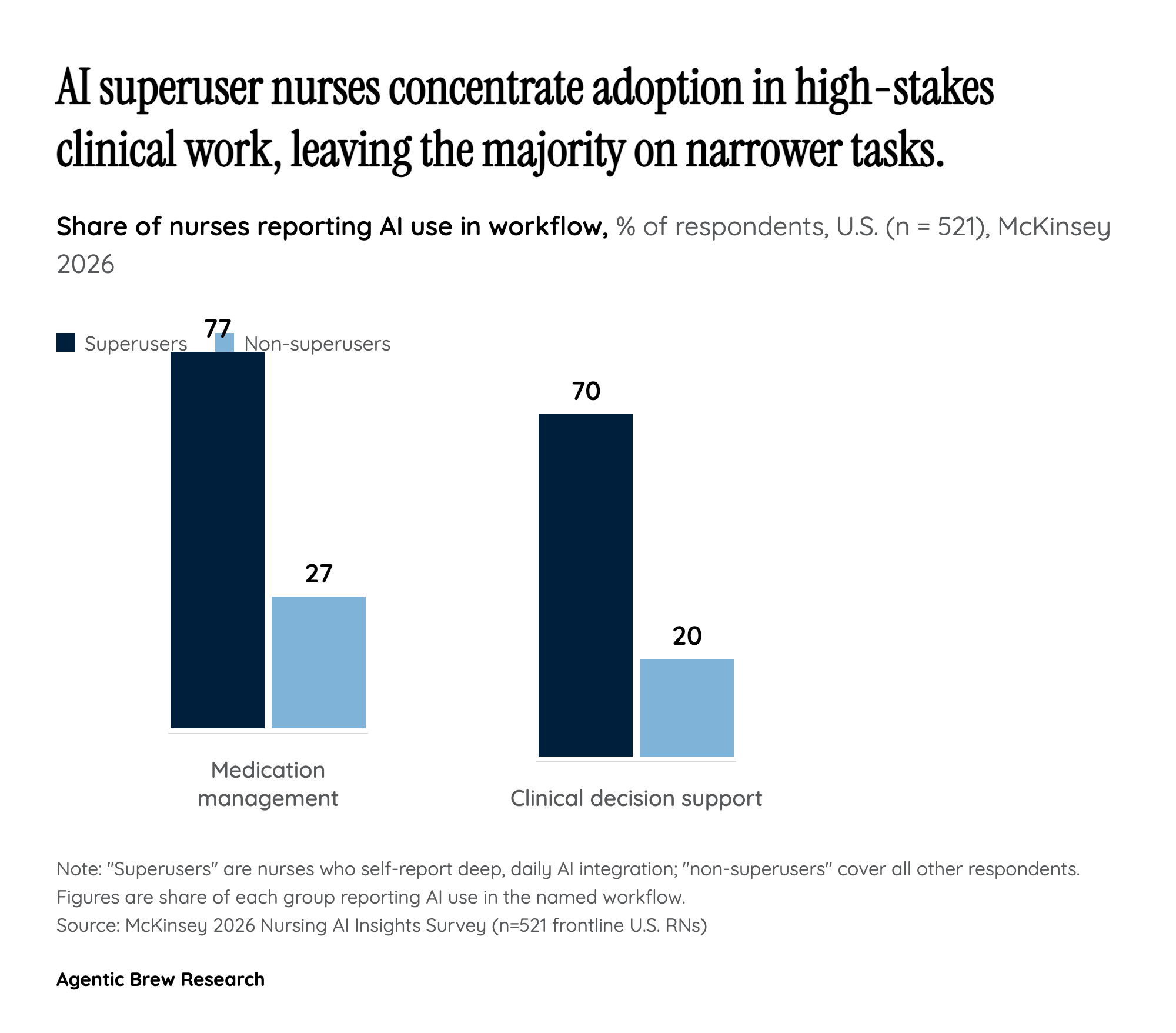

The three stories in this cluster look unrelated until you line them up by who initiated the install. A 4GB Gemini Nano model lands in Chrome user profiles without an install prompt. AI-integrated sentry towers go up along the U.S.-Mexico border because Congress earmarked the money, not because end users requested them. Roughly 65% of frontline nurses report using more AI tools than a year ago, often inside electronic health record systems they have no power to swap out. In each case, the human who interacts with the AI is no longer the one making the adoption decision. The browser vendor, the procurement officer, and the hospital IT department are.

That is what makes the trend more than the sum of its headlines. The conventional framing of an 'AI rollout' assumes a product launch, a download page, a new SKU. The 2026 pattern is different: AI is being shipped through pre-existing channels (a browser auto-update, a DHS contract vehicle, a Cerner or Epic module) to populations that never negotiated for it. The branding of these features as user-empowering (faster scam detection, better border safety, less nurse burnout) is downstream of a more banal fact. Once AI is cheap enough, and cloud-optional enough, to ride on infrastructure people already use, infrastructure owners will install it by default and leave opting out, rather than opting in, to the user.