The One-Day Gap Was Not a Coincidence — It Was Compute Physics

The most revealing detail in this whole reshuffle is the calendar. Microsoft and OpenAI announced their restructured partnership on April 27, 2026. Less than 24 hours later, OpenAI's models were live on Amazon Bedrock. That is not negotiation theater; that is a deal that had been engineered for months and was waiting for a clock to expire.

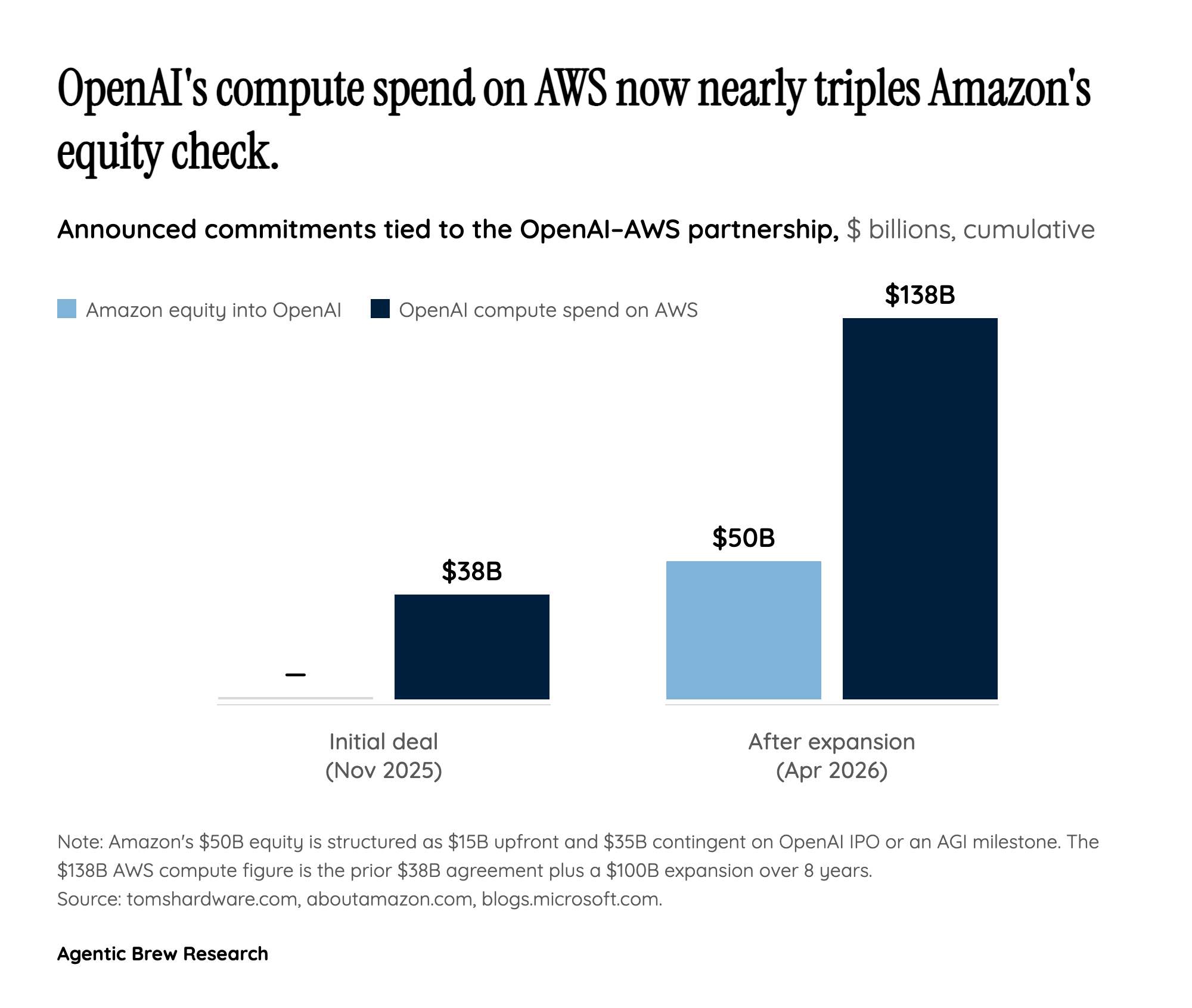

Why did the clock have to expire? Because the underlying constraint was never legal; it was physical. OpenAI has been signaling unrelenting demand for compute, with Sam Altman saying customers are asking for more capacity 'no matter what the price is.' Against that backdrop, OpenAI signed a $38B compute deal with AWS in November 2025, then in 2026 expanded it by another $100B over eight years. Add Amazon's separate $50B equity investment and a 2-gigawatt Trainium commitment, and the math becomes unambiguous: Azure could not, on its own, rack GPUs fast enough to absorb OpenAI's demand curve.

On Reddit, the dominant framing in r/singularity and r/OpenAI was exactly this. Cloud exclusivity flipped because OpenAI's compute commitments outran what any single hyperscaler could ship. One r/OpenAI commenter went further, claiming OpenAI was already routing AWS work as a stateful service to skirt the old contract.

On that reading, the April 27 Microsoft restructure was not Microsoft losing leverage. It was both parties acknowledging that the old contract had become unenforceable in practice and replacing it with terms that matched physical reality. The April 28 Bedrock launch is what that reality looks like once the paperwork catches up.