The Quiet Coup: How Prompt Engineering Lost Its Throne

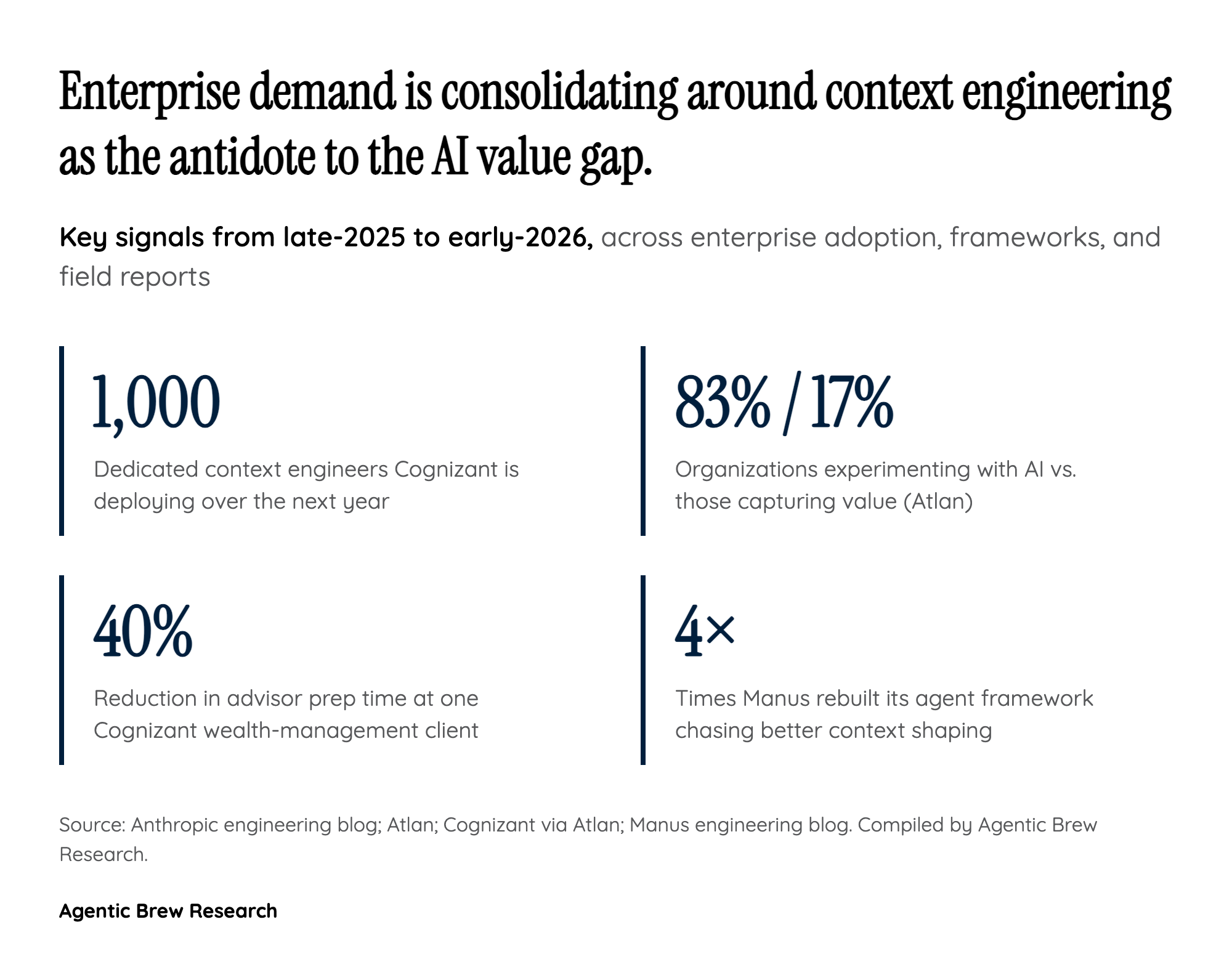

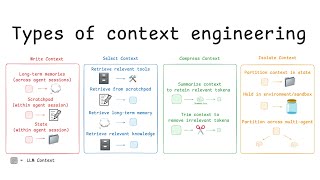

For three years after ChatGPT's launch, prompt engineering was the named profession of the LLM era: a discipline of phrasing, role-play, and clever instructions aimed at a single inference call. The shift announced by Anthropic's Applied AI team in late September 2025 reframes that work as a special case of something larger, which the team calls context engineering. Their definition is deliberately mechanical: context is 'the set of tokens included when sampling from a large-language model,' and the engineer's job is to find 'the smallest possible set of high-signal tokens that maximize the likelihood of some desired outcome.' Prompts are still in there. They are just no longer the unit of work.
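To make the "smallest possible set of high-signal tokens" idea concrete, here is a minimal sketch of one way to approach it: a greedy selection under a token budget. Everything in it is an illustrative assumption rather than Anthropic's method. The `Snippet` type, the `signal` score, and the word-count tokenizer are hypothetical stand-ins, and a real system would use an actual tokenizer and a real relevance measure.

```python
from dataclasses import dataclass


@dataclass
class Snippet:
    text: str
    signal: float  # hypothetical relevance score in [0, 1]; how it is computed is out of scope


def rough_token_count(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def select_context(snippets: list[Snippet], budget: int) -> list[Snippet]:
    """Greedily keep the snippets with the most signal per token that fit the budget."""
    ranked = sorted(
        snippets,
        key=lambda s: s.signal / max(rough_token_count(s.text), 1),
        reverse=True,
    )
    chosen: list[Snippet] = []
    used = 0
    for s in ranked:
        cost = rough_token_count(s.text)
        if used + cost <= budget:  # skip anything that would overflow the budget
            chosen.append(s)
            used += cost
    return chosen


candidates = [
    Snippet("error: disk full on /var", signal=0.9),
    Snippet("the quick brown fox jumps over the lazy dog again and again", signal=0.3),
    Snippet("retry after cleanup", signal=0.6),
]
picked = select_context(candidates, budget=8)
print([s.text for s in picked])
```

With a budget of 8 rough tokens, the long low-signal snippet is left out and the two short high-signal ones are kept. Greedy selection is not guaranteed to be optimal; it is used here only because it is easy to read.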

The mechanism behind the displacement is threefold: the context window is finite, the cost of attention grows quadratically with the number of tokens, and accuracy decays as contexts get longer. Anthropic notes that LLMs 'like humans, lose focus or experience confusion at a certain point,' and Elastic's measurements show accuracy degrading around 32,000 tokens for some models, a phenomenon now widely called context rot. Once you accept that more context can hurt, prompt engineering's instinct to stuff the window with examples and instructions becomes actively harmful. Philipp Schmid's widely quoted line, 'most agent failures are not model failures anymore, they are context failures,' is the field's clearest statement of why the throne changed hands. The model is no longer the bottleneck; what you put in front of it is.
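The "attention is quadratic" point can be checked with simple arithmetic. The sketch below assumes plain full self-attention, where every token is compared with every token, and ignores causal masking and the sparse or windowed variants that real models may use. The function name is illustrative.

```python
def attention_pairs(n_tokens: int) -> int:
    # Full self-attention compares every token with every token: n * n pairs.
    return n_tokens * n_tokens


for n in (1_000, 8_000, 32_000):
    print(f"{n:>6} tokens -> {attention_pairs(n):>13,} pairwise relationships")
```

Under that assumption, growing the context 32-fold, from 1,000 to 32,000 tokens, multiplies the number of pairwise relationships by 1,024. That is one reason every extra token in the window has to earn its place.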