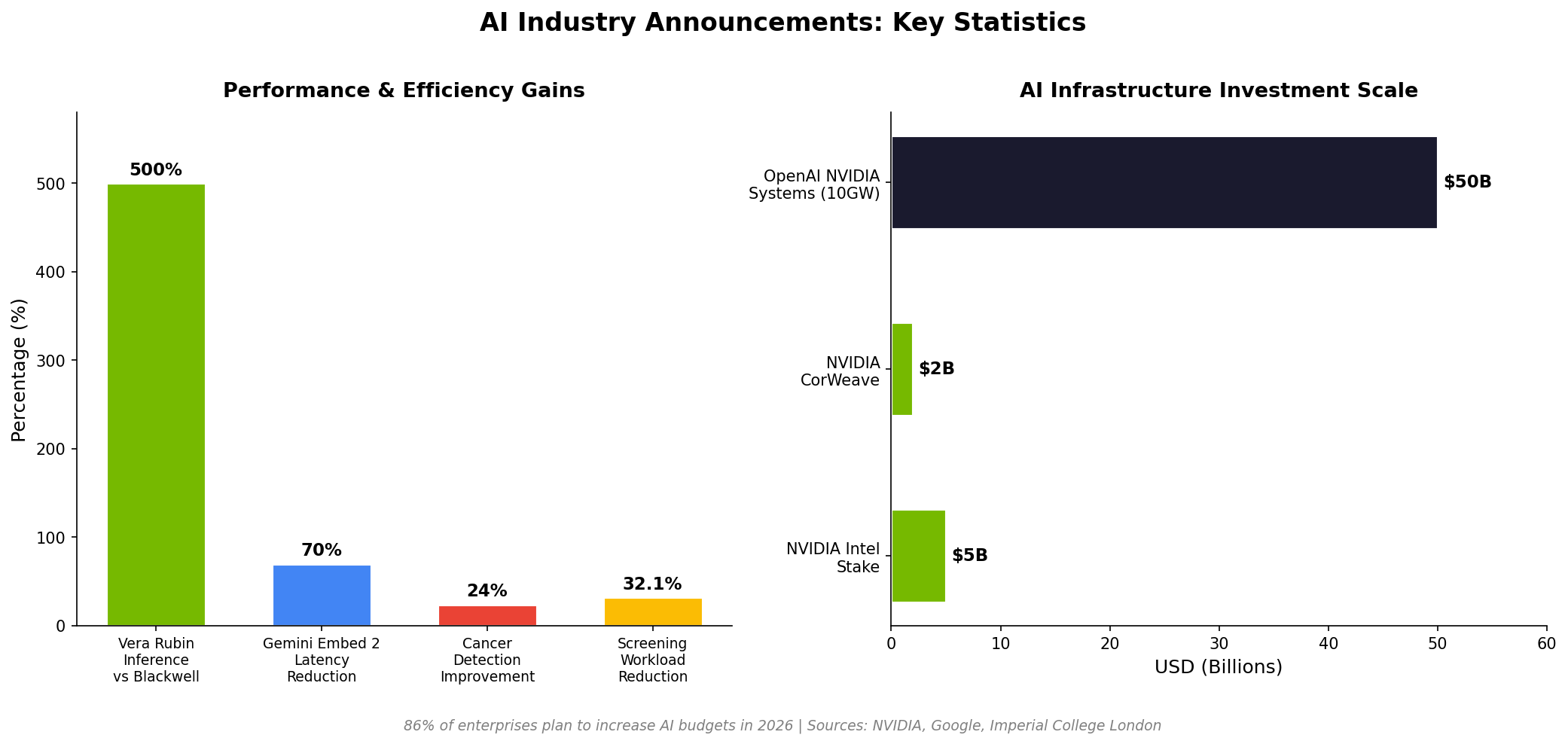

Why This Matters

The convergence of announcements from NVIDIA and Google in early 2026 marks an inflection point for the AI industry. These are not incremental updates but structural shifts in how AI infrastructure is built, deployed, and applied. Jensen Huang's five-layer cake framework, presented at the World Economic Forum in Davos, signals that AI has moved beyond a software-only paradigm into a full-stack industrial challenge spanning energy production, semiconductor manufacturing, data center infrastructure, foundation model training, and application deployment.

This matters because it changes who participates in the AI economy and how value is distributed. The 86% of enterprises that report plans to increase AI budgets in 2026 are not simply buying software licenses; they are investing across every layer of this stack. The OpenAI-NVIDIA partnership for 10 gigawatts of compute systems illustrates the scale of the energy demand. For context, 10 gigawatts is a measure of power rather than energy: drawn continuously for a year, it amounts to roughly 88 terawatt-hours (10 GW × 8,760 hours), which is comparable to or greater than the annual electricity consumption of many mid-sized countries. Meanwhile, Google's healthcare AI breakthrough demonstrates that the downstream applications of this infrastructure investment can deliver measurable, life-saving outcomes at population scale.