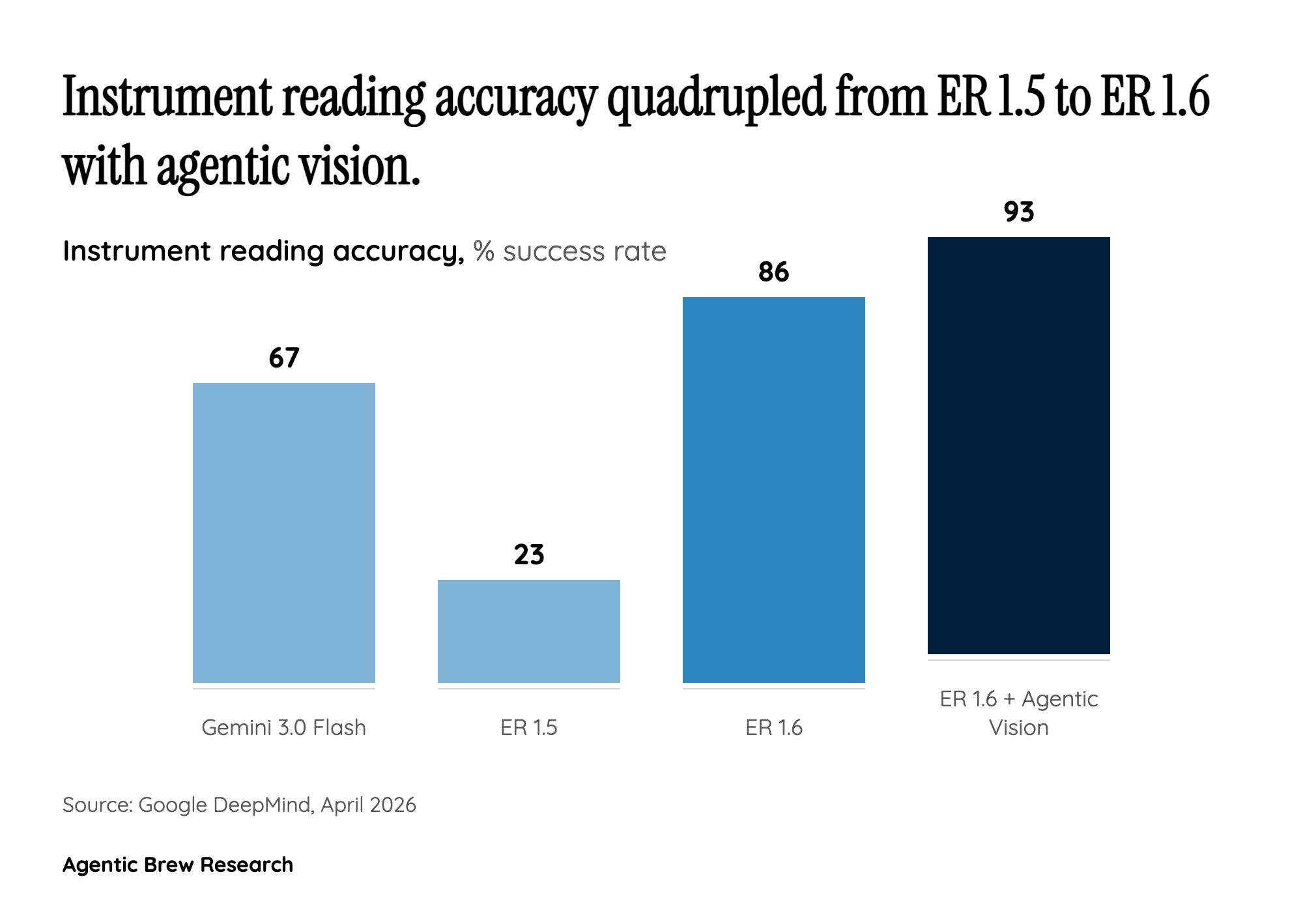

From 23% to 93%: Why the Instrument Reading Leap Changes the Industrial Calculus

The headline number in ER 1.6 is not an incremental improvement; it is a categorical shift. Moving from 23% to 93% accuracy in reading analog gauges cuts misreads from 77 in every 100 readings to 7. That is the difference between a system that fails far more often than it succeeds and one that is right more than nine times out of ten. In industrial settings such as oil refineries, chemical plants, and power stations, analog gauges remain ubiquitous precisely because they are mechanically reliable and need no power. The irony is that these simplest of instruments have been the hardest for AI to read.

Marco da Silva's candid admission that "somewhere north of 80 percent is the threshold where it's not annoying" offers a rare quantitative insight into deployment psychology. Below that threshold, human operators spend more time correcting the robot than they save by deploying it. At 93%, ER 1.6 clears the bar by roughly 13 percentage points. That margin matters because real-world conditions (dust, glare, vibration, partial occlusion) are likely to push performance below lab benchmarks. The gap between 86% accuracy without agentic vision and 93% with it is also telling: the model's self-directed visual search strategy, meaning its choice of where and how to look, adds 7 points and halves the remaining error rate from 14% to 7%. A meaningful part of the capability therefore comes from that strategy, not from raw vision quality alone.
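
The arithmetic behind these comparisons is easier to see in error terms than in accuracy terms. The sketch below is illustrative only: it uses the four figures quoted above, treats "somewhere north of 80 percent" as a flat 80, and assumes all accuracy numbers come from the same benchmark. The constant and function names are hypothetical, chosen for this example.

```python
# Figures quoted in the text, as whole percentages.
BASELINE_ACC = 23         # accuracy before ER 1.6
NO_AGENTIC_ACC = 86       # ER 1.6 without agentic vision
AGENTIC_ACC = 93          # ER 1.6 with agentic vision
ANNOYANCE_THRESHOLD = 80  # assumption: "somewhere north of 80" taken as 80


def misreads_per_100(accuracy_pct: int) -> int:
    """Number of wrong readings expected per 100 gauges read."""
    return 100 - accuracy_pct


def summarize() -> dict:
    """Restate the accuracy figures as error counts, margins, and ratios."""
    before = misreads_per_100(BASELINE_ACC)
    after = misreads_per_100(AGENTIC_ACC)
    without_agentic = misreads_per_100(NO_AGENTIC_ACC)
    return {
        "misreads_before": before,
        "misreads_after": after,
        # How many times fewer misreads after the jump.
        "error_reduction_factor": before / after,
        # Headroom left before accuracy drops to the threshold.
        "margin_over_threshold": AGENTIC_ACC - ANNOYANCE_THRESHOLD,
        # Points of accuracy attributable to agentic vision.
        "agentic_gain_points": AGENTIC_ACC - NO_AGENTIC_ACC,
        # Ratio of misreads without agentic vision to misreads with it.
        "agentic_error_ratio": without_agentic / after,
    }


if __name__ == "__main__":
    for name, value in summarize().items():
        print(f"{name}: {value}")
```

Read this way, the 13-point margin is the budget available for field degradation: if dust, glare, or vibration cost more than that, the system would fall back under the threshold da Silva describes.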