The Dual-Use Paradox: Why OpenAI Is Loosening Guardrails on Its Most Dangerous Model

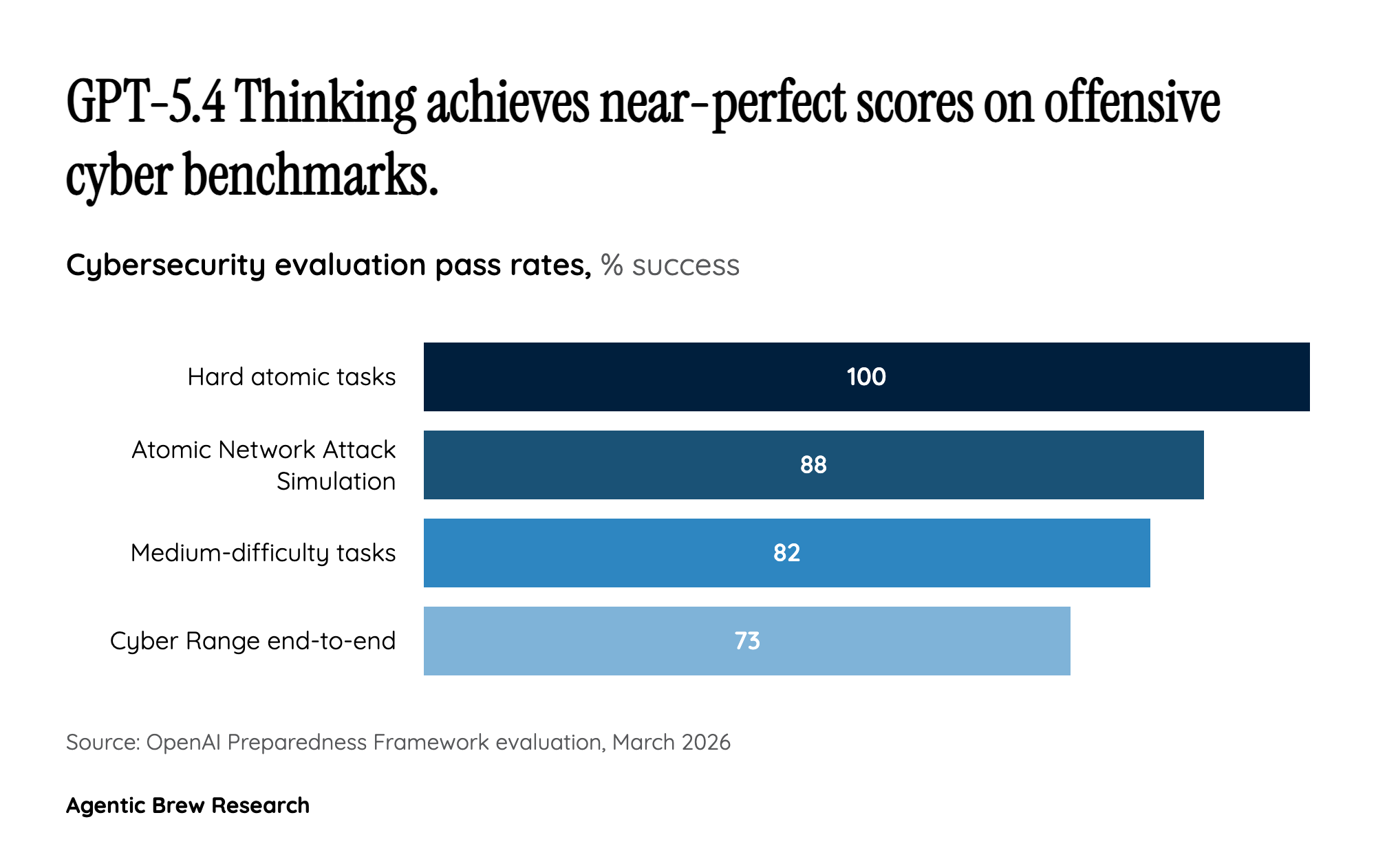

GPT-5.4 Thinking holds a distinction no other general-purpose AI model has earned: a 'high' cybersecurity risk classification, meaning it can automate attacks against hardened targets and discover operationally relevant vulnerabilities. The model achieved an 88% success rate in atomic Network Attack Simulation challenges, solved all five hard atomic tasks, and posted a 73.33% pass rate in end-to-end Cyber Range scenarios. By these measures, it is the most cyber-capable AI system that has been publicly acknowledged.

Yet OpenAI's response to this classification was not to tighten restrictions but to create a derivative, GPT-5.4-Cyber, that is 'purposely fine-tuned for additional cyber capabilities and with fewer capability restrictions.' This is a calculated philosophical bet: that the asymmetry between attackers and defenders is so severe that withholding powerful tools from defenders does more harm than the risk of misuse. OpenAI's three-pillar safeguard strategy (know-your-customer validation, iterative jailbreak-resistant deployment, and investment in software security) amounts to an argument that procedural controls can substitute for capability restrictions. Whether that bet pays off will likely define the regulatory conversation around AI cybersecurity for years to come.