The capability that flipped a deregulatory administration in four months

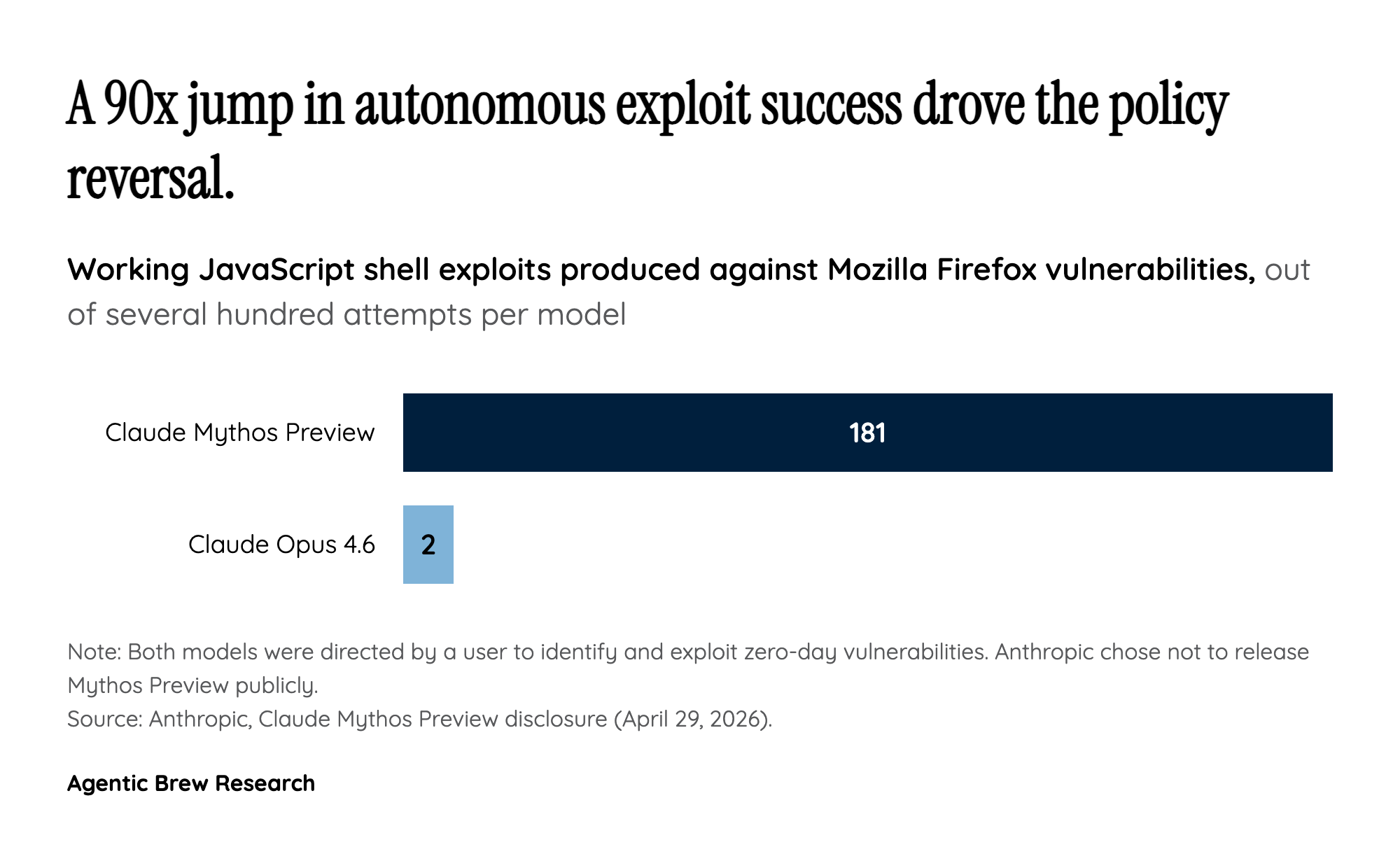

The pivot is hard to overstate. In January 2025 the Trump White House revoked Biden's safety-focused EO 14110 on day one and, three days later, signed EO 14179, a deregulatory framework explicitly aimed at 'removing barriers' to American AI. By May 2026 the same administration was briefing Anthropic, Google and OpenAI on a regime that would route new models through federal reviewers before public release.

The proximate cause is one model: Anthropic's Claude Mythos Preview, disclosed April 29, 2026. In seven weeks of testing it discovered more than 2,000 zero-day vulnerabilities. Against Mozilla Firefox it produced 181 working JavaScript shell exploits, where the prior-generation Opus 4.6 produced two. The UK AI Security Institute (AISI), which evaluated Mythos through Britain's government vetting regime, concluded that the model 'is at least capable of autonomously attacking small, weakly defended and vulnerable enterprise systems'. Mythos was also the first to solve AISI's 'TLO' cyber range end-to-end, succeeding in 3 of 10 attempts.

Anthropic chose not to release Mythos publicly. Instead it seeded the model to a small consortium, Project Glasswing (Microsoft, Google, Apple, AWS, JPMorgan Chase and Nvidia), to harden critical software. Bloomberg's framing of the administration's motive is blunt: officials want to avoid the political fallout from a devastating AI-enabled cyberattack on U.S. soil. A single capability disclosure, in other words, did what years of safety advocacy could not.