Why This Matters

The AI chip landscape is undergoing a structural shift that challenges the GPU-centric orthodoxy of the past decade. For years, the narrative was simple: GPUs dominated AI training and inference, with Nvidia commanding roughly 86% of the AI GPU market. But the rise of agentic AI (systems that autonomously use tools, browse the web, write code, and chain multi-step reasoning) is changing where compute bottlenecks occur. Research from Georgia Tech and Intel found that CPU-side tool processing accounts for up to 90.6% of total latency in agentic workloads. In those worst cases, the GPU sits idle for most of a request while the CPU handles orchestration, API calls, and data marshaling.

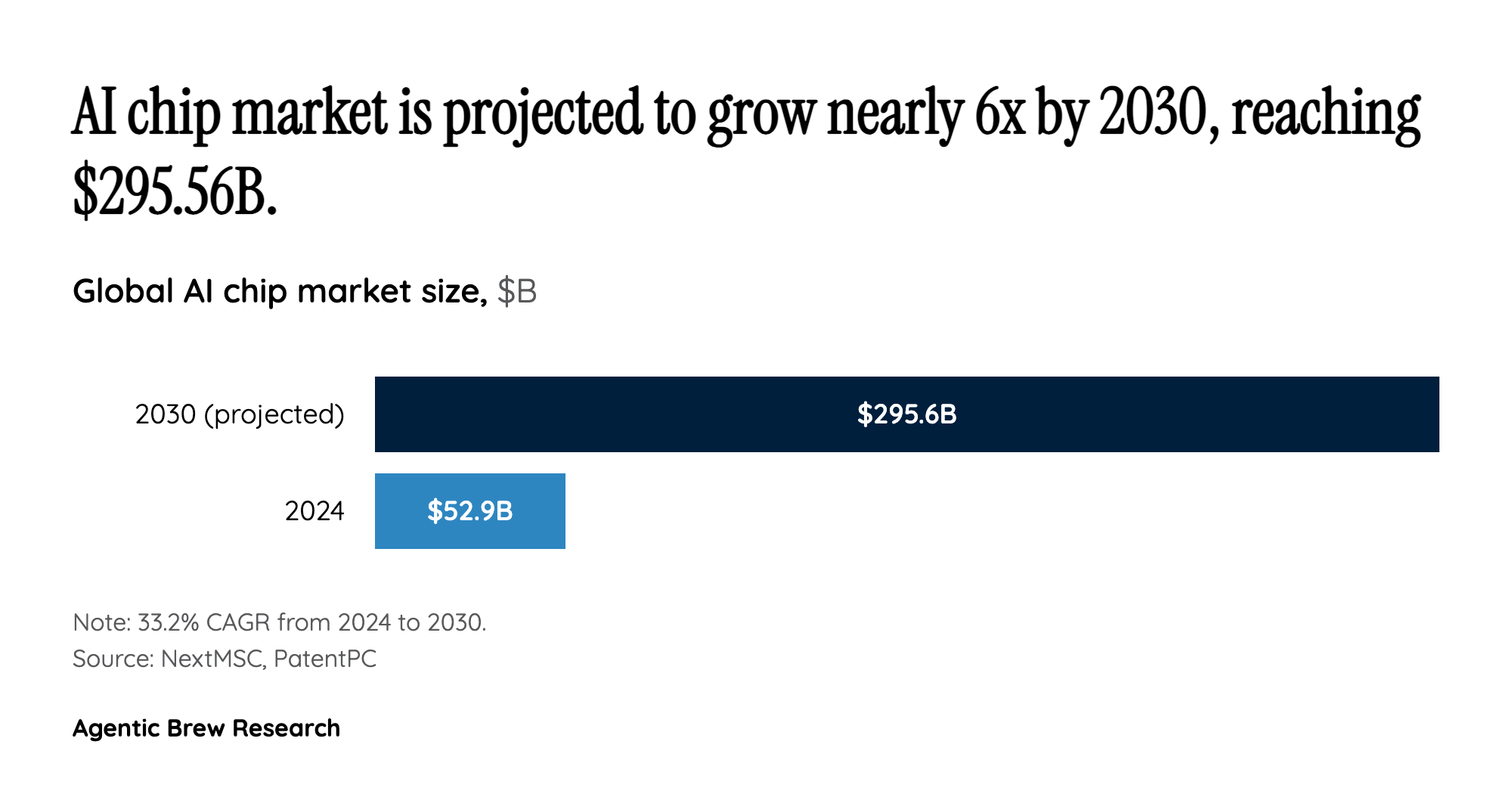
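
To make the latency figure concrete, here is a rough back-of-the-envelope sketch using Amdahl's law. It assumes the worst-case 90.6% CPU share cited above, treats all remaining latency as GPU-side, and assumes the two phases run serially with no overlap. Those simplifications, and the helper name `amdahl_speedup`, are illustrative assumptions rather than anything taken from the cited research.

```python
def amdahl_speedup(accelerated_fraction: float, factor: float) -> float:
    """Overall speedup when only `accelerated_fraction` of total latency
    becomes `factor` times faster (Amdahl's law)."""
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if factor <= 0:
        raise ValueError("factor must be positive")
    return 1.0 / ((1.0 - accelerated_fraction) + accelerated_fraction / factor)


# Worst-case CPU share of end-to-end latency from the figure cited above.
CPU_SHARE = 0.906
# Assumption: everything that is not CPU-side tool processing is GPU time.
GPU_SHARE = 1.0 - CPU_SHARE

# Doubling GPU speed only shrinks the small GPU slice of the request.
gpu_2x = amdahl_speedup(GPU_SHARE, 2.0)

# Upper bound: even an infinitely fast GPU leaves the CPU share untouched.
gpu_limit = 1.0 / CPU_SHARE

# Doubling CPU-side speed attacks the dominant slice instead.
cpu_2x = amdahl_speedup(CPU_SHARE, 2.0)

print(f"2x faster GPU:         {gpu_2x:.2f}x end-to-end")
print(f"Infinitely fast GPU:   {gpu_limit:.2f}x end-to-end")
print(f"2x faster CPU side:    {cpu_2x:.2f}x end-to-end")
```

Under these assumptions, an infinitely fast GPU caps the end-to-end gain at roughly 1.10x, while doubling CPU-side throughput yields about 1.83x. Real agentic pipelines overlap work and vary widely by workload, so this is an intuition aid, not a performance prediction.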

This shift has profound implications for data center economics. Arm projects that CPU-to-GPU ratios in agentic data centers will climb to approximately 7:1, a sharp inversion from the GPU-heavy ratios seen in training-optimized facilities. Both Nvidia and Arm have responded by placing CPUs at the center of their newest platform strategies: Nvidia with the 88-core Vera CPU in the Vera Rubin platform, and Arm with its first silicon product, the 136-core AGI CPU. The $52.92 billion AI chip market (projected to reach $295.56 billion by 2030) is no longer a one-architecture race; it is fragmenting into specialized lanes where CPUs, GPUs, TPUs, NPUs, and LPUs each serve distinct roles.