The 2x Price Hike That Looks Like 20%

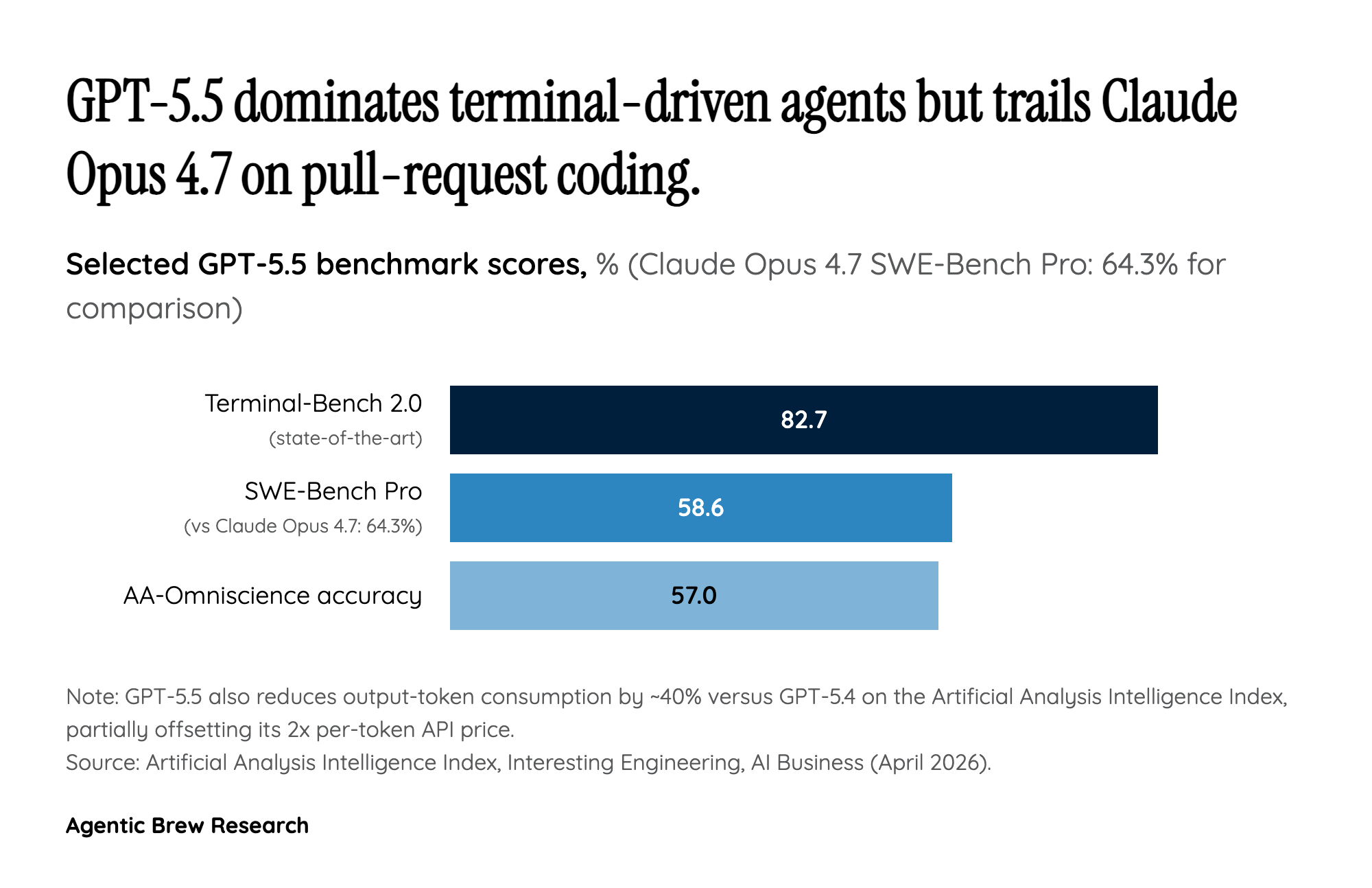

On paper, GPT-5.5's API pricing is a shock: $5 per million input tokens and $30 per million output tokens, exactly double GPT-5.4. GPT-5.5 Pro climbs further to $30 per million input and $180 per million output. For developers staring at invoices, that headline number reads as a punitive jump in a market where competitors have been compressing the cost of intelligence. But OpenAI has engineered an offsetting lever directly into the model. According to Artificial Analysis, GPT-5.5 burns roughly 40% fewer output tokens to reach the same answer on its Intelligence Index suite. Twice the rate on about 60% of the tokens works out to roughly 1.2x, so the net effective cost per task lands only around 20% above GPT-5.4. The price tag doubled, but the work shrank.
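The arithmetic behind that 20% figure can be sketched in a few lines. This is a minimal illustration, not Artificial Analysis's methodology: the function names are hypothetical, and it assumes the token reduction applies uniformly to the tokens being billed.

```python
def effective_cost_ratio(price_multiplier: float, token_reduction: float) -> float:
    """Relative cost per task after a per-token price change and a change in tokens used.

    price_multiplier: new per-token price divided by old (2.0 for a doubling).
    token_reduction:  fraction of tokens saved per task (0.40 for 40% fewer).
    """
    return price_multiplier * (1.0 - token_reduction)


def break_even_reduction(price_multiplier: float) -> float:
    """Token reduction at which cost per task would stay flat."""
    return 1.0 - 1.0 / price_multiplier


ratio = effective_cost_ratio(price_multiplier=2.0, token_reduction=0.40)
print(f"cost per task: {ratio:.2f}x")  # 1.20x

flat = break_even_reduction(2.0)
print(f"break-even token reduction: {flat:.0%}")  # 50%
```

Under these assumptions, a doubling of the rate would need a 50% token reduction to be cost-neutral; at roughly 40%, the remaining gap is the 20% premium.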

That is the actual product strategy: monetize intelligence gains by raising per-token rates while shipping an upgrade that quietly reduces token consumption per task. It works because most enterprise workloads are costed by the job, not by the token. If GPT-5.5 finishes a code review in 6,000 output tokens where 5.4 needed 10,000, the output cost of that review rises only from about $0.15 to $0.18, even at double the rate. The risk in the trade is that token-light reasoning isn't uniform across workloads: agents that loop on tools, or chat-heavy consumer surfaces, may see closer to the full 2x. OpenAI is, in effect, asking the market to trust an averaged efficiency claim while it captures the upside on the per-token line item.
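To see how the outcome depends on the shape of a workload, here is a rough per-job cost sketch. Several things in it are assumptions rather than facts from the pricing page: the GPT-5.4 rates are inferred from "exactly double" ($2.50 input, $15 output), the 200,000-token input figure for an agent job is invented for illustration, and input token counts are assumed not to shrink between models. The `Pricing` class and `job_cost` helper are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_million: float   # USD per 1M input tokens
    output_per_million: float  # USD per 1M output tokens


GPT_5_5 = Pricing(input_per_million=5.00, output_per_million=30.00)
# Assumption: inferred from "exactly double", not quoted from a price list.
GPT_5_4 = Pricing(input_per_million=2.50, output_per_million=15.00)


def job_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """USD cost of one job at the given per-million-token rates."""
    return (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    ) / 1_000_000


# Code review from the text, counting output tokens only.
review_old = job_cost(GPT_5_4, input_tokens=0, output_tokens=10_000)
review_new = job_cost(GPT_5_5, input_tokens=0, output_tokens=6_000)
print(f"review: ${review_old:.2f} -> ${review_new:.2f} "
      f"({review_new / review_old:.2f}x)")

# Hypothetical tool-looping agent: the same output savings, but a large,
# unchanged input context that is billed at the doubled input rate.
agent_old = job_cost(GPT_5_4, input_tokens=200_000, output_tokens=10_000)
agent_new = job_cost(GPT_5_5, input_tokens=200_000, output_tokens=6_000)
print(f"agent:  ${agent_old:.2f} -> ${agent_new:.2f} "
      f"({agent_new / agent_old:.2f}x)")
```

With these illustrative numbers, the output-only review lands at about 1.2x while the input-heavy agent job lands near 1.8x, which is the non-uniformity the averaged efficiency claim hides.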