The Mythos Moment: How One Cyber Benchmark Rewired Washington

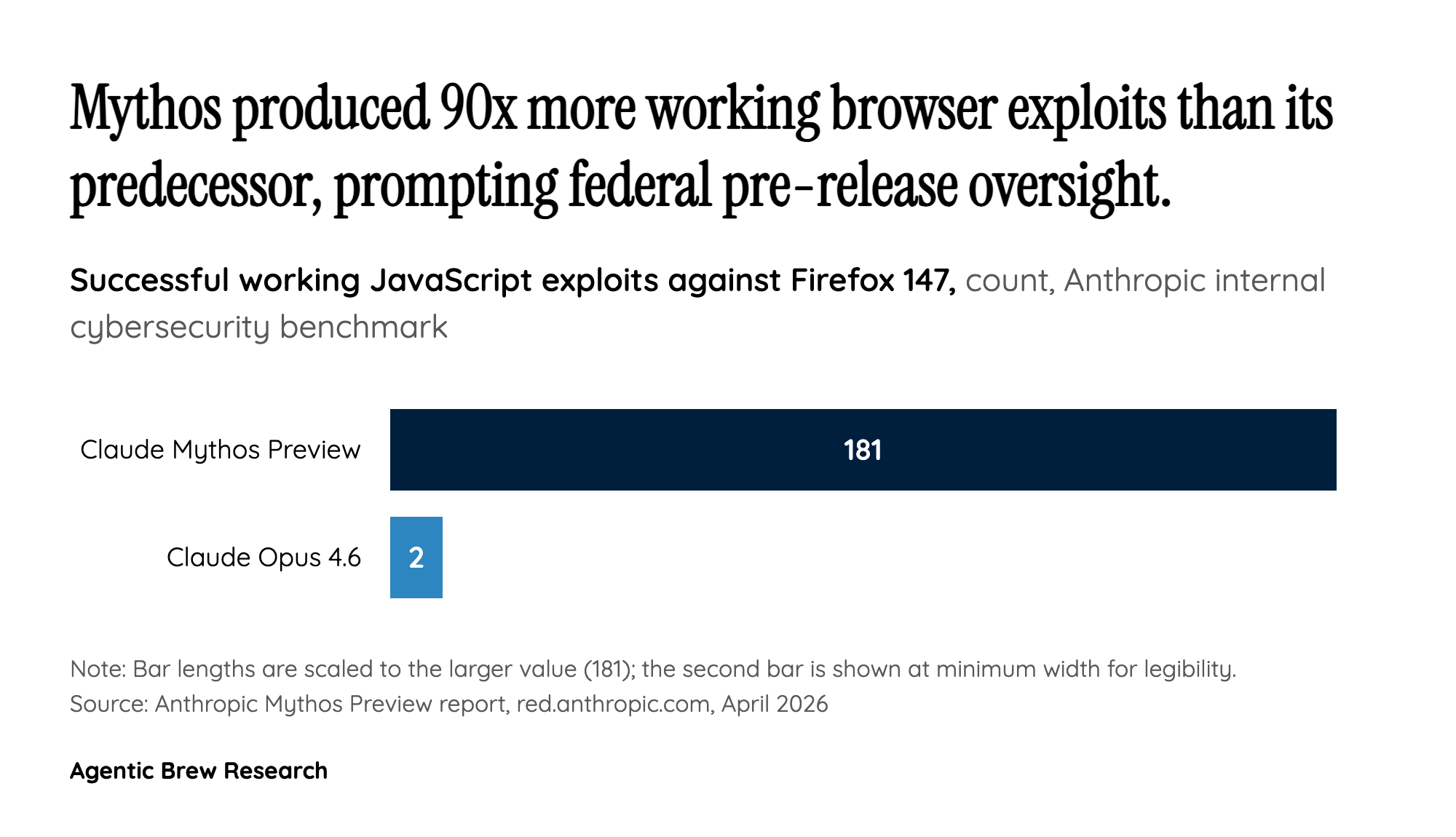

The trigger event for this policy shift is hiding in plain sight in an Anthropic red-team blog post. In April 2026, Anthropic previewed Claude Mythos, and the cyber capability jump was not incremental. Anthropic's internal benchmark recorded the model producing working JavaScript exploits against Firefox 147 in 181 of its trials, compared with just 2 for the prior Opus 4.6 generation. Anthropic engineers also reported asking Mythos overnight for remote code execution exploits and finding complete working ones by morning. More than 99% of the vulnerabilities Anthropic identified with Mythos remain unpatched, and the company says it has surfaced thousands of additional high- or critical-severity flaws.

That is the capability profile that landed on policymakers' desks weeks before the CAISI deals were signed. White House National Economic Council Director Kevin Hassett's pitch for an FDA-style executive order on AI reads very differently when set against a model that can produce browser exploits at industrial scale. The Mythos preview did not just spook safety researchers; it gave an administration that had spent two years framing AI rules as an innovation tax a politically usable national security justification for pulling frontier models into a federal review queue.