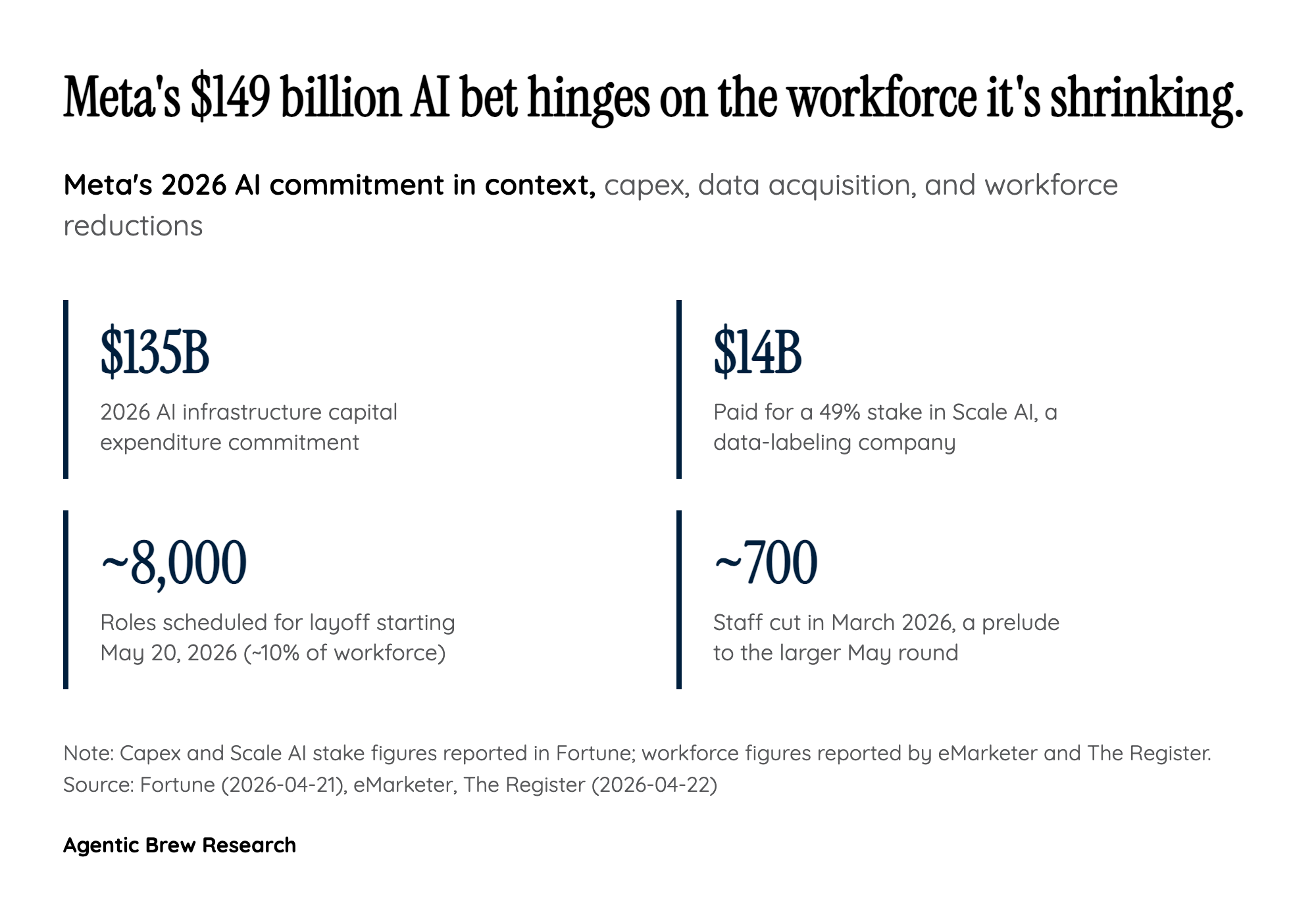

The $14 Billion Scale AI Gap

Meta has spent the last eighteen months signaling that training data is an asset class it will pay almost any price for. It committed up to $135 billion in 2026 capex for AI infrastructure, and separately took a 49% stake in Scale AI, the most prominent human-labeled-data vendor in the industry, for more than $14 billion. Buying nearly half of the market leader in curated training data ought to solve the data problem. Yet here is Meta, weeks later, installing keystroke and mouse-movement loggers on the computers of its own US employees. That gap is the real story.

The implication is that for the specific class of model Meta is now trying to build (autonomous agents that can navigate real enterprise software, drive dropdown menus, handle keyboard shortcuts, and move through Gmail and internal tools like a human operator), Scale AI's core product is the wrong shape. Labeled images, ranked responses, and annotated text corpora do not teach a model what it feels like to use a computer. Mouse trajectories, click cadence, tab-switching patterns, and the small mechanical habits of knowledge work are a different data type, and they appear to be the actual bottleneck. Meta's willingness to spend $14 billion on one data source and still reach for its employees' telemetry says something uncomfortable about where the frontier is: the constraint is less the model architecture than a shortage of authentic human-computer-interaction data that vendors cannot manufacture at scale.