Why This Matters

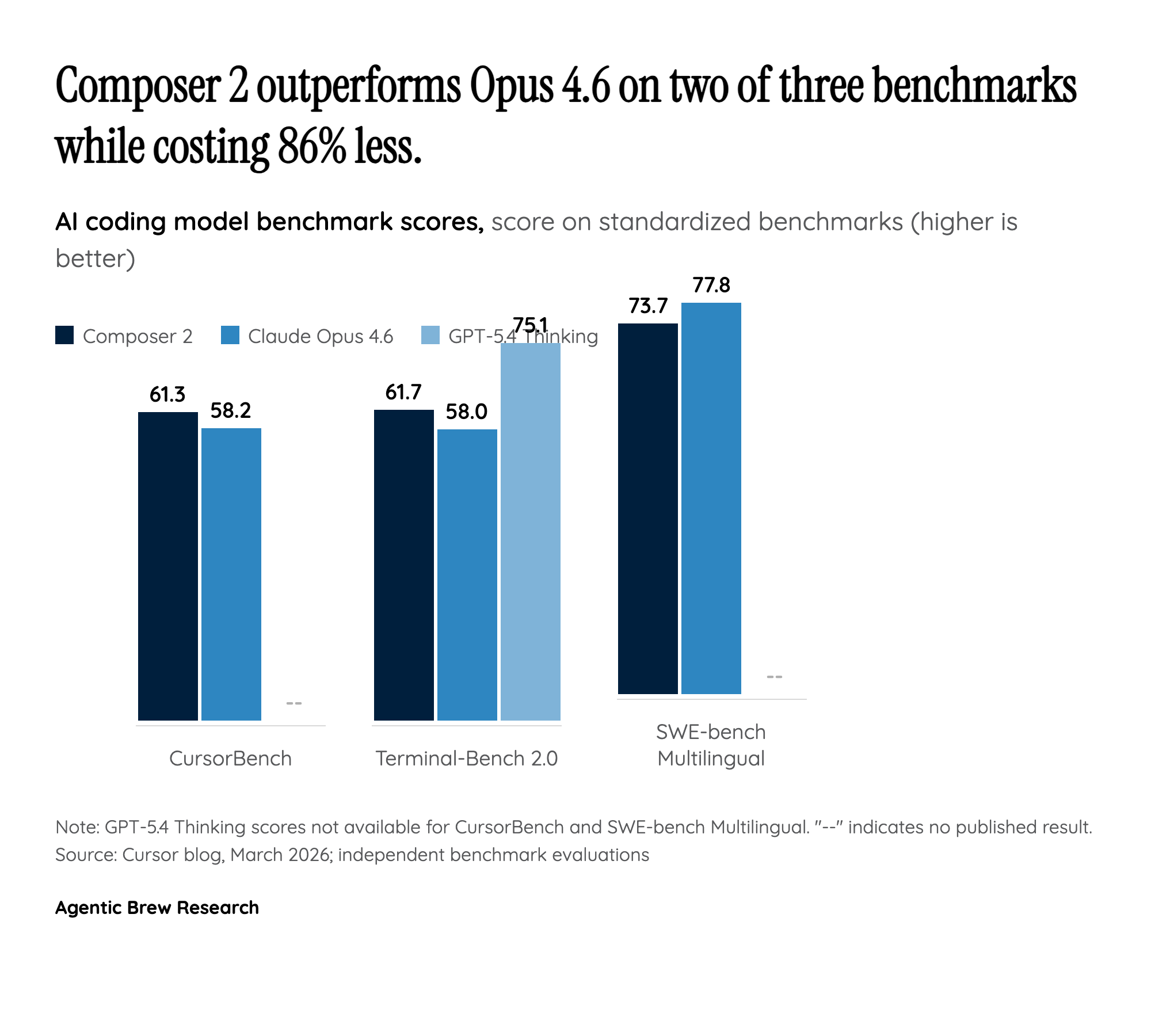

Composer 2 matters for the AI coding tools market because it shows that a vertical application company can build a proprietary model that competes with, and in some cases surpasses, the general-purpose frontier models from Anthropic and OpenAI. This challenges the prevailing assumption that only large foundation model labs can produce best-in-class AI for specialized domains. Cursor took an open-weight base model (Kimi K2.5) and applied domain-specific continued pretraining and reinforcement learning, which points to a viable third path between training a model from scratch and simply wrapping third-party APIs.

The 86% cost reduction is equally significant. At $0.50/$2.50 per million input/output tokens, Composer 2 lists at one tenth of the $5/$25 charged for Claude Opus 4.6, which changes the economics of agentic coding. Agentic workflows, in which a model takes hundreds of sequential actions to complete a complex coding task, consume enormous numbers of tokens. Lower per-token prices make it economically viable to let agents run longer, attempt more approaches, and tackle more ambitious tasks. For enterprise customers processing millions of coding requests daily, the difference could amount to tens of millions of dollars in savings annually.
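
To make the pricing arithmetic concrete, here is a minimal Python sketch that prices a single agentic run at both rates. The per-million-token prices are the ones quoted above, read as input/output prices. The workload figures (`STEPS`, `INPUT_TOKENS_PER_STEP`, `OUTPUT_TOKENS_PER_STEP`) and the names `Pricing` and `run_cost` are hypothetical, chosen only for illustration; they are not measurements of Cursor or Anthropic usage.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """List price in US dollars per one million tokens."""
    input_per_million: float
    output_per_million: float

    def cost(self, input_tokens: int, output_tokens: int) -> float:
        """Return the dollar cost of the given token counts."""
        return (
            input_tokens * self.input_per_million
            + output_tokens * self.output_per_million
        ) / 1_000_000


# Prices quoted in the text (input / output, per million tokens).
COMPOSER_2 = Pricing(input_per_million=0.50, output_per_million=2.50)
CLAUDE_OPUS_4_6 = Pricing(input_per_million=5.00, output_per_million=25.00)

# Hypothetical workload: an agent that takes 200 sequential actions,
# re-reading about 20k tokens of context and emitting about 500 tokens each time.
STEPS = 200
INPUT_TOKENS_PER_STEP = 20_000
OUTPUT_TOKENS_PER_STEP = 500


def run_cost(pricing: Pricing) -> float:
    """Cost of one full agentic run under the assumed workload."""
    return pricing.cost(
        input_tokens=STEPS * INPUT_TOKENS_PER_STEP,
        output_tokens=STEPS * OUTPUT_TOKENS_PER_STEP,
    )


composer_cost = run_cost(COMPOSER_2)
opus_cost = run_cost(CLAUDE_OPUS_4_6)
reduction = 1 - composer_cost / opus_cost

print(f"Composer 2:      ${composer_cost:.2f} per run")
print(f"Claude Opus 4.6: ${opus_cost:.2f} per run")
print(f"Relative saving: {reduction:.0%}")
```

Because the input and output prices differ by the same factor, the relative saving in this sketch does not depend on the assumed token mix; only the absolute dollar amounts change with the workload. The sketch also ignores prompt caching and volume discounts, either of which would shift real-world costs.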