The Reclaim Clause: Musk Becomes His Rival's Informal Regulator

The most consequential line in the Anthropic-SpaceX agreement is not the GPU count or the megawatt figure but a single contractual clause: SpaceX reserves the right to reclaim the compute if Anthropic's AI engages in actions that 'harm humanity.' That sentence converts a vendor relationship into something stranger and more political. Anthropic, a company founded on the premise of careful, safety-first scaling, has just placed its production workloads on hardware whose continued availability is conditioned on the subjective judgment of Elon Musk, the CEO of a direct competitor. Independent researcher Simon Willison flagged this as a 'really bad look,' noting that the harm-determination authority is functionally vested in Musk himself. Gene Munster of Deepwater put 80% odds on the deal surviving two years, with the dissenting 20% pinned explicitly to Musk-specific risk.

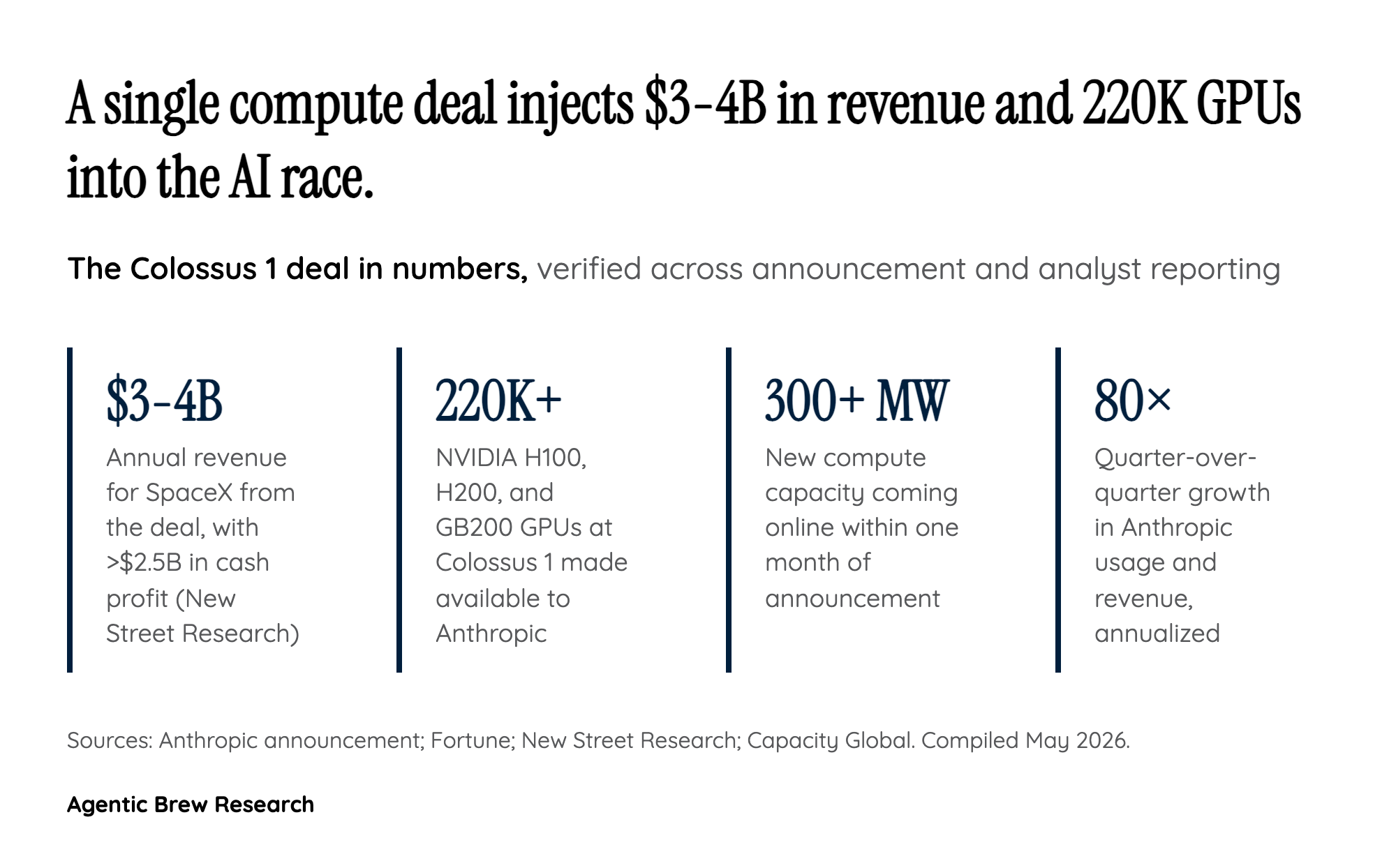

The practical implication is asymmetric leverage. If Anthropic ships a model behavior Musk dislikes, whether a refusal pattern, a political evaluation, or a competitive product move, the contract gives him pretextual grounds to throttle 300+ megawatts of his rival's serving infrastructure. This is the first major case where a frontier AI lab has agreed to compute terms that include a content-and-conduct termination right held by a competitor. Andrew Moore of Lovelace AI captured the structural point bluntly: 'He who controls the data center, really does control the application of artificial intelligence right now.' Anthropic has accepted that constraint in exchange for capacity it apparently could not source elsewhere on the same timeline.