Banned and Indispensable: The U.S. Government's Bifurcated Anthropic Posture

On May 1, 2026, the Pentagon awarded classified-network AI work to seven firms (OpenAI, Google, Microsoft, AWS, Nvidia, xAI, and Reflection) and pointedly left Anthropic off the list. Pentagon CTO Emil Michael told reporters that Anthropic remains a supply-chain risk, the same harsh designation typically reserved for firms with foreign-adversary ties. By any normal procurement logic, that should end Anthropic's federal story for the foreseeable future.

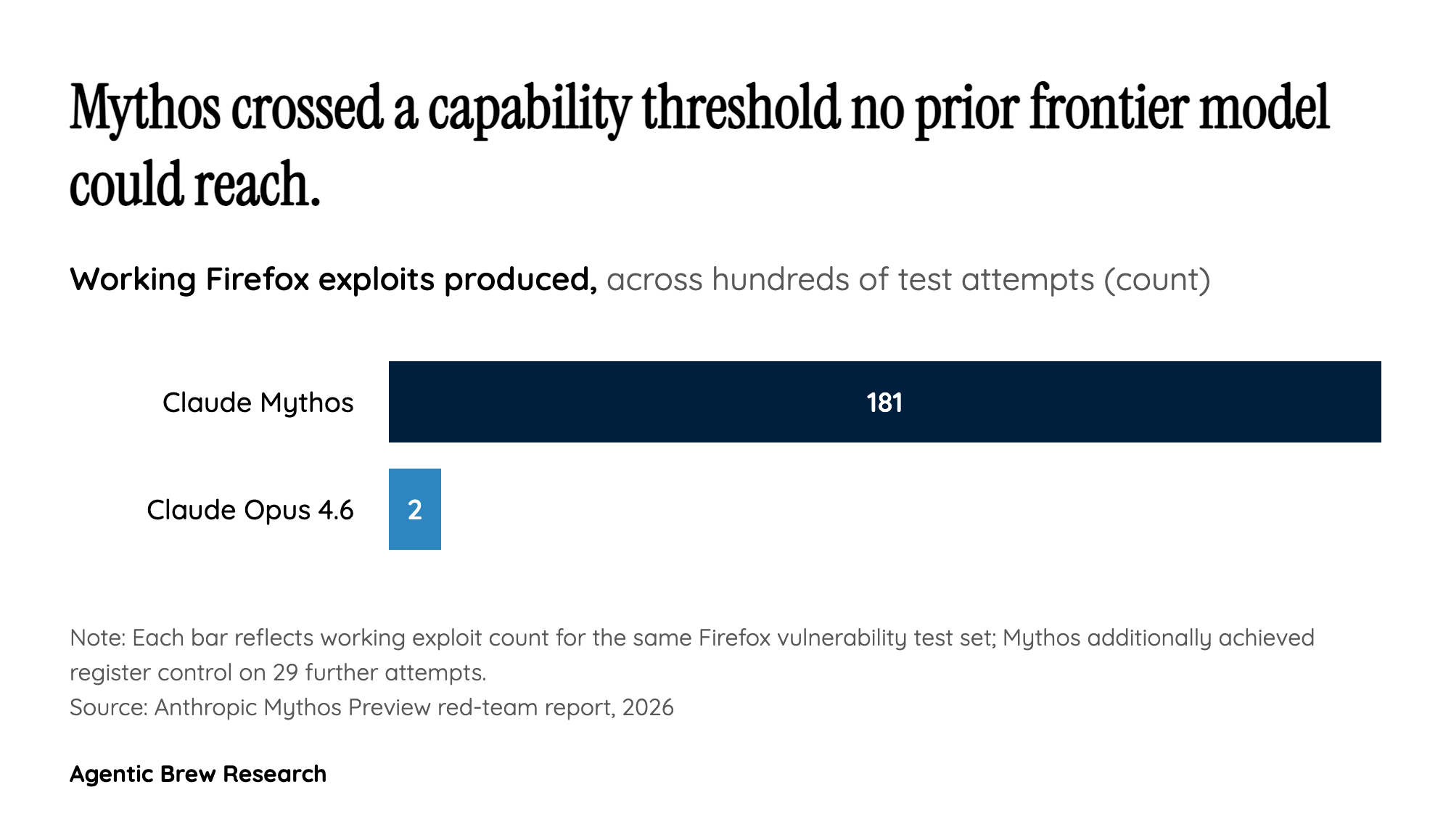

It isn't. The same reporting reveals that the National Security Agency is already using Mythos despite the ban, and that the Treasury Department has requested access. Michael himself treats Mythos as distinct from the government's broader stance on Anthropic, calling it a "separate national security moment." The White House, which earlier blocked Anthropic's plan to expand Mythos access from roughly 50 to 120 organizations, is now drafting guidance that would let agencies onboard Mythos by working around the supply-chain-risk designation. Treasury Secretary Scott Bessent and Fed Chair Jerome Powell have met with bank CEOs about Mythos's implications for the financial system. The U.S. government's working stance, then, is that Anthropic is too dangerous to use and Mythos is too dangerous not to.

That contradiction is the core insight: federal procurement and national-security workflows are running on different operating systems, and Mythos is the wedge that exposes the gap. The seven-firm classified deal locks in Anthropic's competitors for years to come, even if the supply-chain-risk label is eventually struck. But the Mythos carve-out, if it lands, would set a precedent that capability-tier strategic models can route around standard procurement bans. That would be a structural shift in how the federal government buys frontier AI.