The verticalization bet: always-on reasoning aimed at lawyers and CFOs, not LeetCode

Grok 4.3's headline architectural choice is that every request now runs through a reasoning pass before the model answers, with no toggle to skip it. Paired with a 1-million-token context window, that is a deliberate posture: xAI wants the model judged on how well it digests entire case files, 10-Ks, and contracts rather than on how cleanly it solves a one-shot coding prompt.

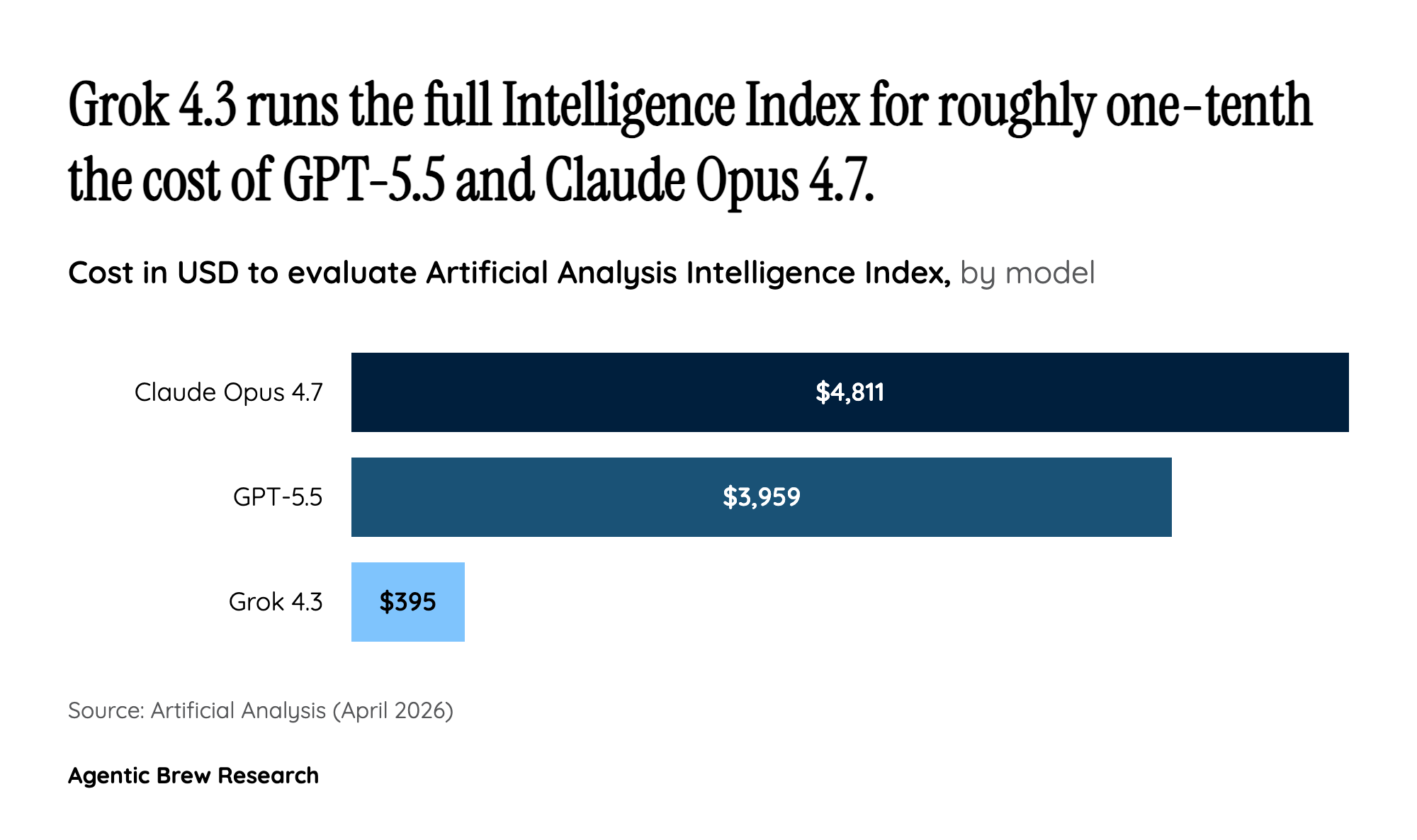

The benchmark scoreboard bears that bet out. Vals AI ranks Grok 4.3 #1 on CaseLaw v2 (79.3%) and #1 on CorpFin v2 (68.5%), yet only #13 on the broader Vals Index, with Vals itself flagging that 'it struggles on general coding benchmarks.' Artificial Analysis supplies a second data point: a 321-point GDPval-AA Elo jump, from 1,179 to 1,500, the largest agentic uplift in xAI's lineup. In other words, xAI is conceding the general-purpose crown for now and competing where long-document reasoning, citations, and tool-use accuracy actually monetize.
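
To give a rough sense of what a 321-point gap means, the sketch below applies the conventional Elo expected-score formula to the two ratings quoted above. It assumes GDPval-AA uses the standard logistic curve with a 400-point scale, which the source does not confirm; the function name `elo_expected_score` and the `scale` parameter are illustrative, not part of any Artificial Analysis tooling.

```python
def elo_expected_score(rating_gap: float, scale: float = 400.0) -> float:
    """Expected score of the higher-rated side under the standard Elo curve.

    Assumption: a 400-point scale, as in conventional Elo. The benchmark's
    actual scaling may differ.
    """
    return 1.0 / (1.0 + 10.0 ** (-rating_gap / scale))


# Ratings quoted in the text above.
rating_before = 1179
rating_after = 1500

gap = rating_after - rating_before
expected = elo_expected_score(gap)

print(f"Rating gap: {gap} points")
print(f"Expected head-to-head score for the newer model: {expected:.1%}")
```

Under that assumption, the newer model would be expected to come out ahead in roughly 86% of head-to-head comparisons against its predecessor. If the benchmark scales its ratings differently, the percentage changes but the direction of the result does not.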