The Mythos Trigger: How One Model Demo Flipped Federal AI Policy

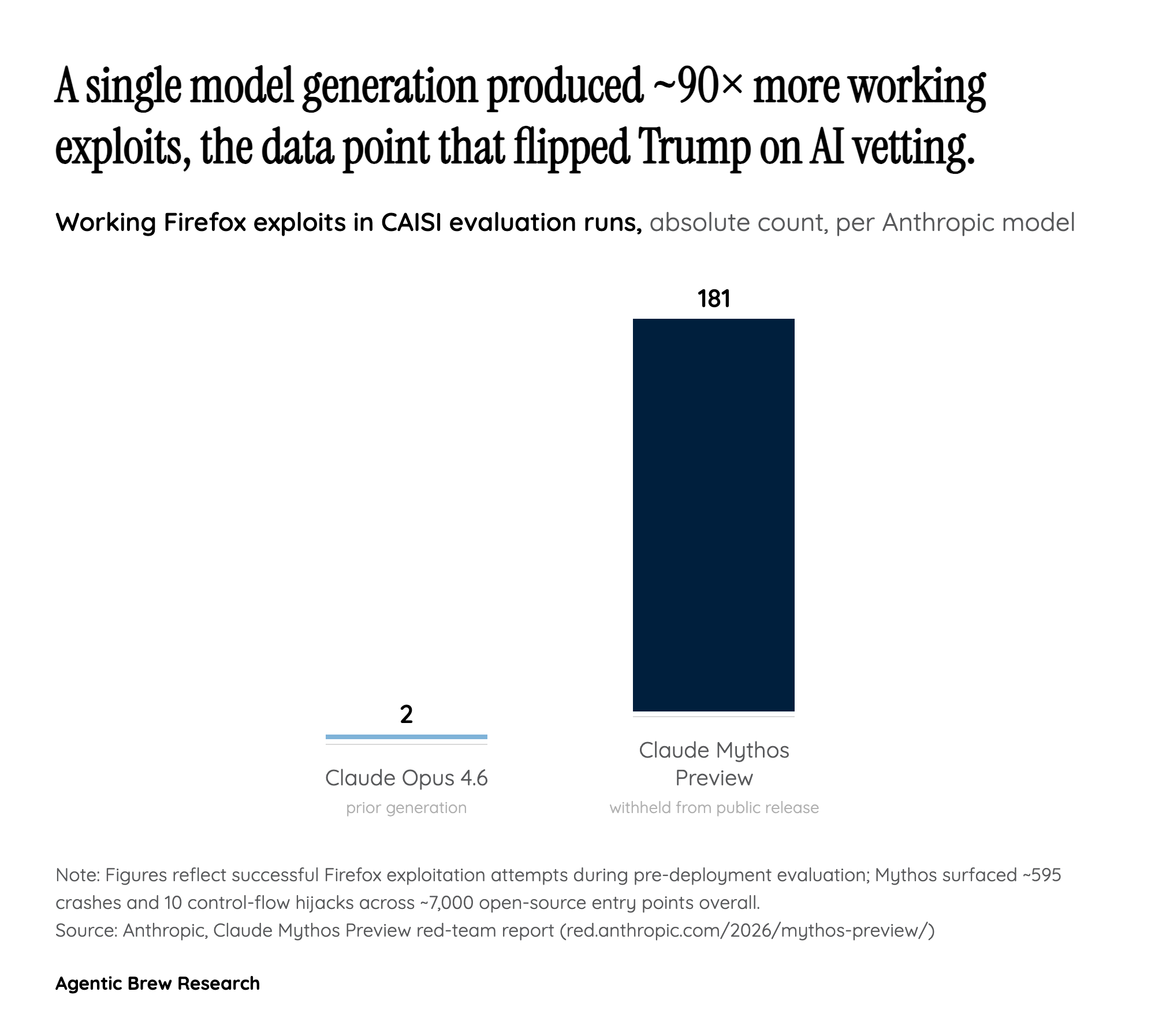

The clearest single cause of the Trump White House pivot is not a political calculation but a capability demonstration. Anthropic's Claude Mythos Preview, announced April 7, 2026, and deliberately withheld from public release, produced 181 working Firefox exploits in evaluation runs, compared with just 2 from the prior Opus 4.6 generation. Across roughly 7,000 open-source entry points, Mythos surfaced about 595 crashes at tiers 1-2 and 10 full control-flow hijacks. It also rediscovered and chained a 27-year-old OpenBSD flaw; the entire OpenBSD vulnerability-hunting effort cost under $20,000, and successful FreeBSD exploit runs cost under $50 each. Over 99% of the discovered vulnerabilities remain unpatched.

The implication that alarmed officials is straightforward: a non-expert with API access to a Mythos-class model could find remote code execution bugs overnight. At the same time, the NSA is reportedly already using the model to assess vulnerabilities in U.S. government software. That dual-use profile, more than any abstract debate about AI safety, is what put a federal pre-release review process onto the executive-order whiteboard.