Why This Matters

The Confer-Meta integration represents one of the most consequential privacy architecture decisions in the history of consumer AI. The fundamental problem Marlinspike identified is structural: every major AI assistant today, including ChatGPT, Gemini, Claude, and Grok, operates as a centralized inference service in which the provider necessarily sees every query in plaintext. Messaging is different. End-to-end encryption could be retrofitted onto existing messaging architecture because the server only relays ciphertext between endpoints. AI inference requires the server to actively compute on the user's data to produce a response, which makes end-to-end encryption technically far harder. Confer's approach, built on trusted execution environments (TEEs), is the first credible attempt to deploy privacy-preserving AI inference at consumer scale. Because Meta distributes its products to billions of users, the deal is either a genuine breakthrough or the highest-stakes privacy theater in tech history.

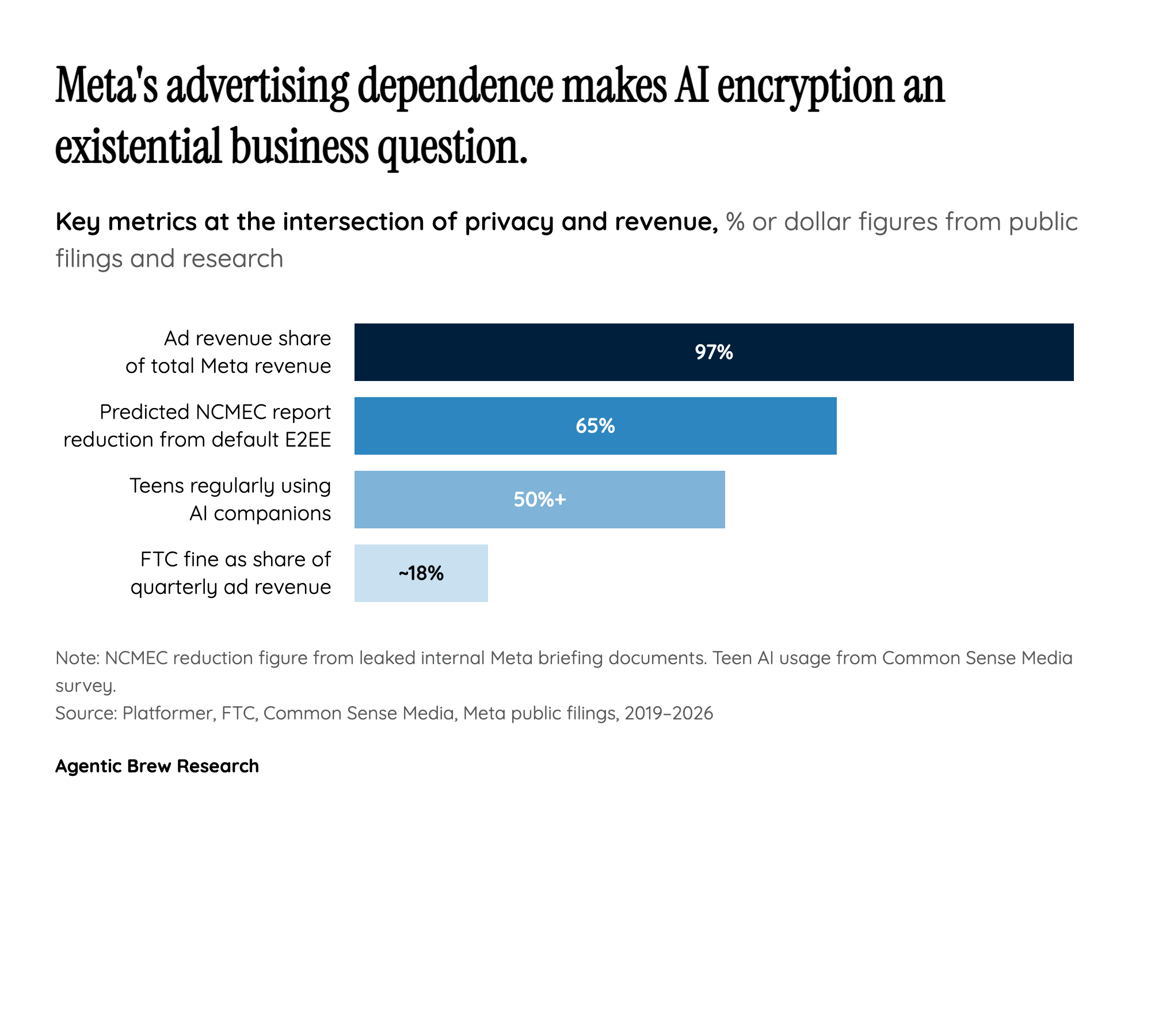

The timing is not coincidental. Meta announced this integration in the same week it was rolling back end-to-end encrypted (E2EE) DMs on Instagram. Casey Newton of Platformer called that move "a worrisome sign for the future of private communications," and it marks the first major platform reversal of E2EE. That juxtaposition forces a difficult question: is the Confer deal a genuine privacy commitment, or is it PR cover for a company retreating from encryption in one product while deploying it in another? The answer matters enormously because Meta AI has access not just to what users type, but to the full social graph, behavioral history, and cross-platform activity that make Meta's data assets uniquely sensitive. Encrypting the AI query while Meta retains behavioral metadata for ad targeting may protect content but not context, and context is increasingly where the value lies.