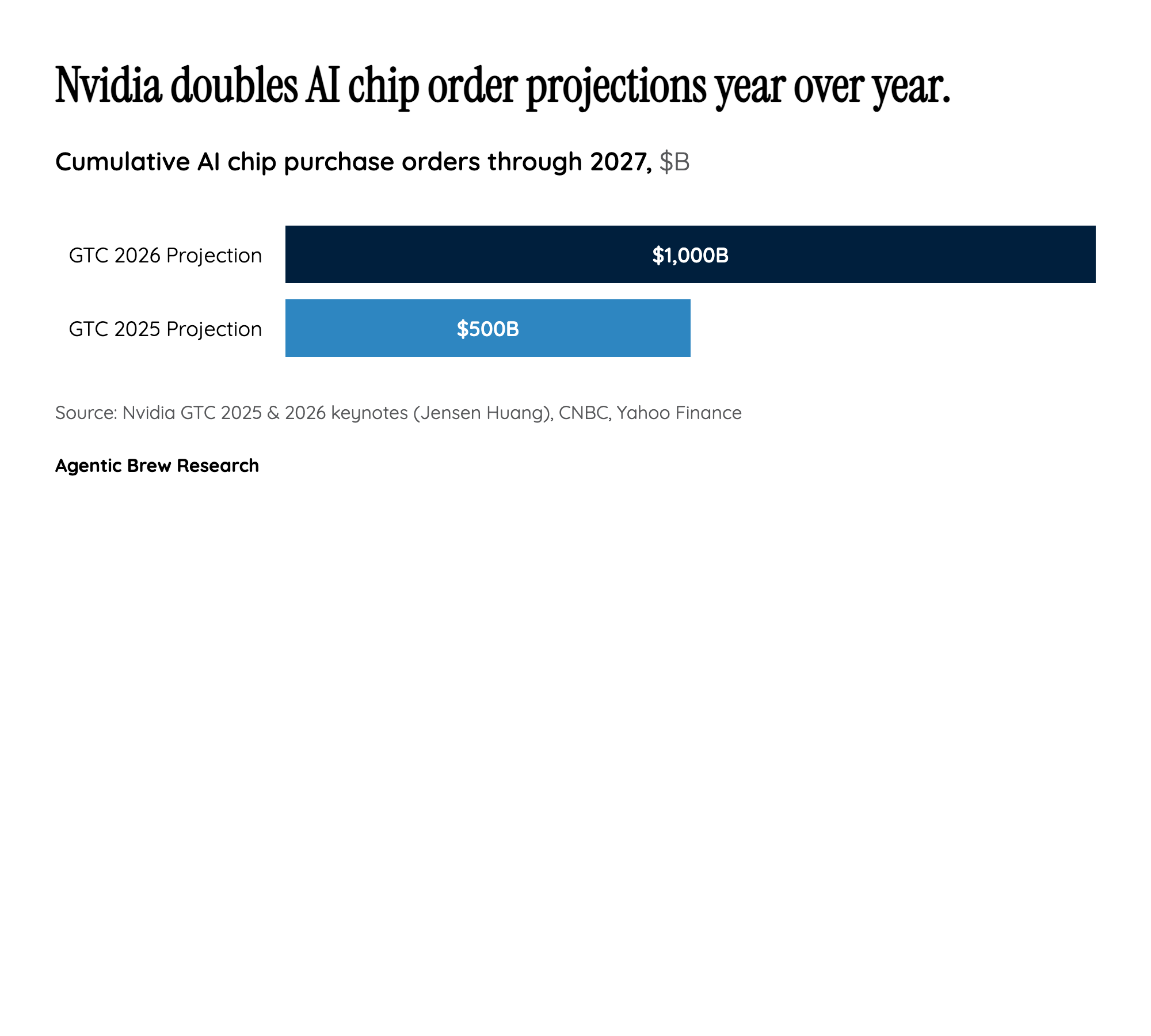

Why This Matters

Nvidia's $1 trillion projection is not merely a forecast; it is a demand signal that reshapes capital allocation across the entire technology industry. When the world's dominant AI chip supplier doubles its cumulative order estimate in a single year, it forces hyperscalers, sovereign AI programs, and enterprise buyers to recalibrate procurement timelines and infrastructure budgets. The projection implies that AI compute demand is not decelerating after the training boom but accelerating, as inference workloads (powering agents, retrieval systems, and real-time applications) begin to dwarf training in total FLOP consumption.

The strategic incentive structure is clear: Nvidia is positioning itself as the indispensable infrastructure layer for an inference-dominated era. By acquiring Groq and integrating its LPU technology, Nvidia preemptively neutralized a potential competitive threat in low-latency inference while adding a differentiated product line. The OpenClaw strategy further deepens lock-in by making Nvidia's platform the default runtime for open-source AI models, creating a flywheel where developer adoption drives hardware demand. For hyperscalers like AWS (1M+ GPUs deployed) and Microsoft Azure, the cost of not being on Nvidia's roadmap now exceeds the cost of dependency.