Why This Matters

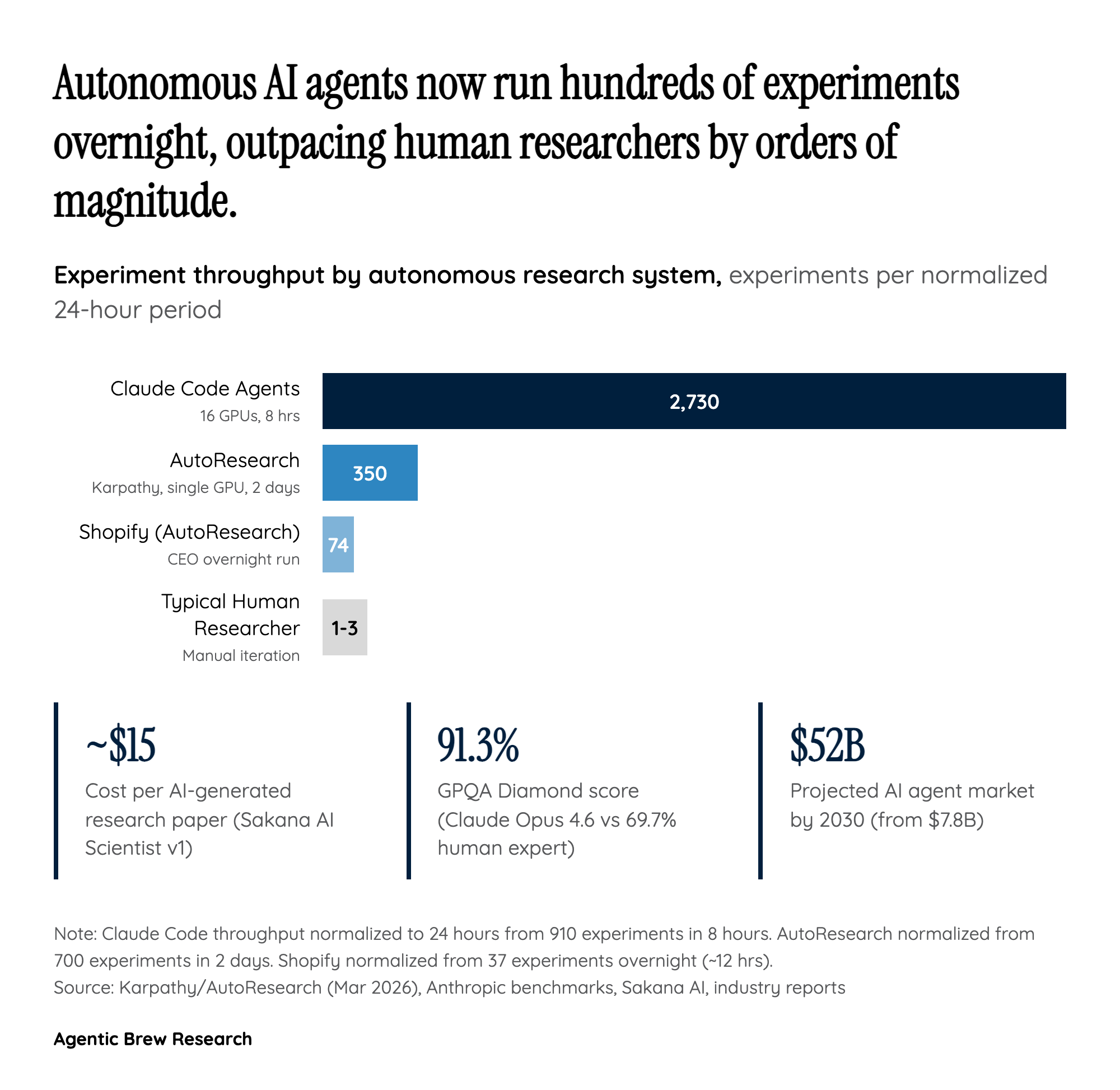

The automation of scientific research is one of the most consequential applications of AI, not because it replaces scientists, but because it changes the economics and speed of discovery. When Karpathy's AutoResearch ran 700 experiments in 2 days on a single GPU, it did more than demonstrate a productivity tool: it suggested that the bottleneck in ML research is no longer compute or ideas, but the human capacity to iterate. A single researcher can now explore a search space that would previously have taken a team months to traverse.

The implications extend to every knowledge-intensive industry. Drug discovery timelines, which typically span 10-15 years and cost over $2 billion per approved compound, could shorten substantially if AI agents can autonomously screen literature, generate hypotheses, design experiments, and interpret results. FutureHouse has already identified a therapeutic candidate for age-related macular degeneration using this approach. Materials science, climate modeling, and fundamental physics could see similar acceleration. OpenAI's decision to make autonomous research its 'North Star', and to project $125B+ in annual revenue around it, signals that the lab sees this not as a niche application but as the primary value proposition of advanced AI.