When Power Users Mutiny

Two stories about Claude Code 4.7 are running on parallel tracks, and they barely touch. On one track, non-coders post videos of an agent building a working mobile app from a paragraph of plain English; the framing is liberation, the screenshot count is high, and the takeaway is that a $350K developer's worth of work just got compressed into minutes. On the other track — the one inhabited by people who have shipped against Claude Code every day for the past year — the reception is openly hostile.

The heavy-use Reddit threads from the past week tell a remarkably consistent story. One top thread argued, with a concrete example, that 4.7 silently ignored a plan document the user had handed it; reverting to 4.6 produced a response that explicitly called out the failure. A widely upvoted review from a long-time heavy user characterized 4.7 as more confident and more wrong: better at instruction following on simple cases, but more likely to give an authoritative-sounding answer that turns out, on inspection, to be incorrect. The most-engaged X post from the same window is a public defection: a user who had been on Claude Code for thirteen months announcing the switch to Codex, citing slowness, bugs, and a belief that the model has been quietly nerfed.

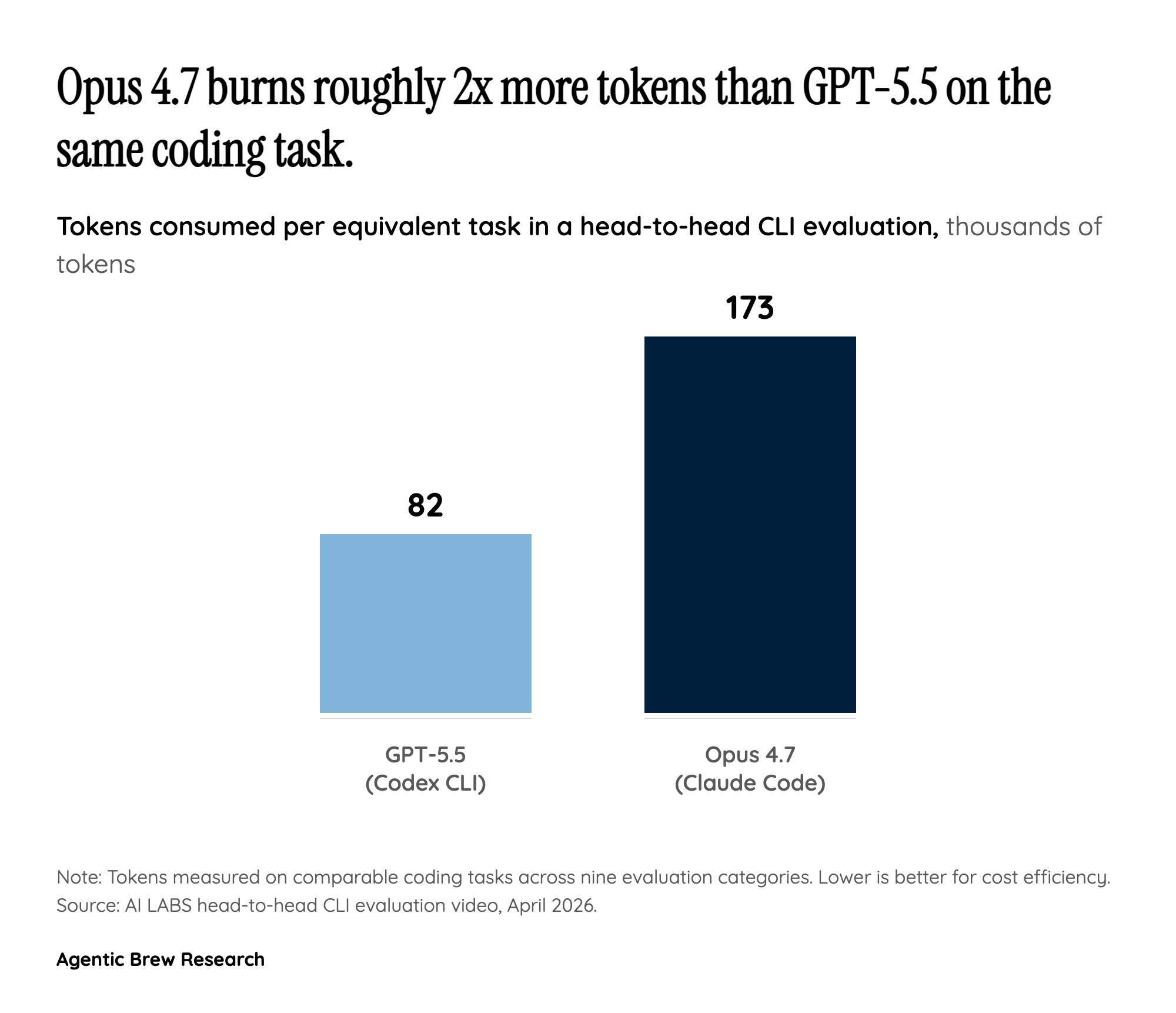

The split isn't random. The non-coder hype runs on first-run wow moments: a one-shot prompt that produces a working artifact. The power-user revolt runs on the long tail: what happens on prompt #40 of a week-long project, when the agent is asked to integrate with an existing plan, respect uncommitted work, and not regress earlier behavior. Anthropic shipped a model tuned hard for the first kind of use, and shipped it during a quarter when its rivals are tuning hard for the second.