Why This Matters

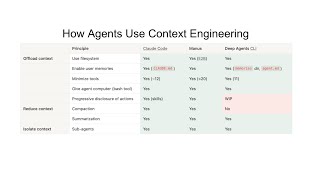

Context engineering represents a shift in how AI agents are built and optimized. Where prompt engineering focuses on crafting better instructions for a model, context engineering covers the entire information environment surrounding the model. As Anthropic defines it, context engineering is "the art and science of curating what will go into the limited context window from that constantly evolving universe of possible information." This shift matters because agent performance no longer depends on model capability alone: LangChain showed this by lifting Terminal Bench scores from 52.8% to 66.5% through harness improvements, without changing the underlying model.

The economic stakes are large. Gartner recorded a 1,445% surge in multi-agent system inquiries from Q1 2024 to Q2 2025, and the AI agent market is projected to grow from $7.8 billion to over $52 billion by 2030. With 57.3% of organizations already running agents in production and 95% of engineers using AI tools weekly, this is less an emerging trend than a structural transformation already underway. Bloomberg reported that AI coding agents such as Claude Code are fueling a "productivity panic" in the tech industry, as engineers who master agent orchestration can produce output that previously required entire teams. Garry Tan's demonstration of averaging 10,000 lines of code and 100 pull requests per week with GStack illustrates the scale of this productivity shift.