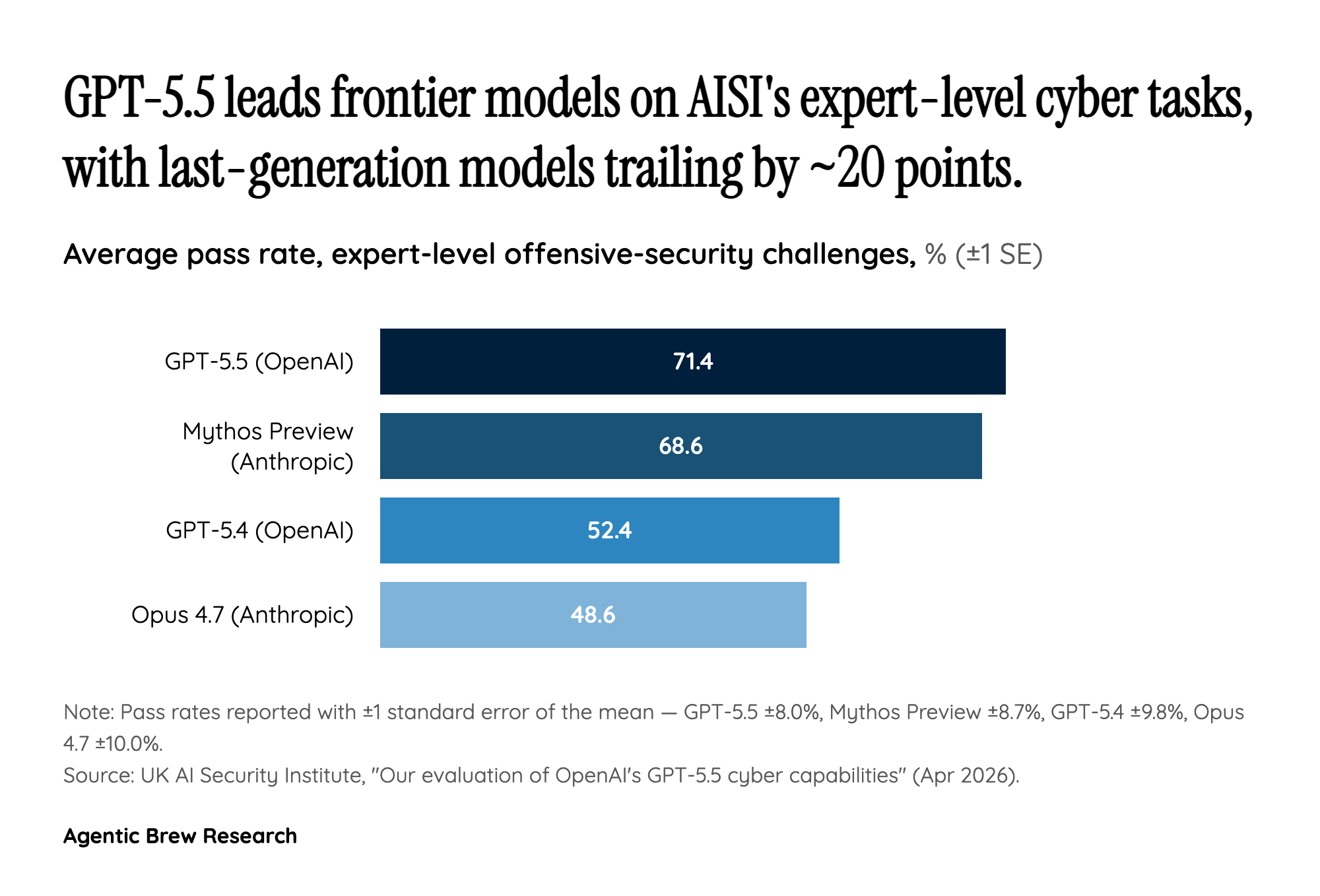

Two weeks, two frontier models, one cyber range that didn't exist as a benchmark a month ago

The headline isn't that GPT-5.5 cleared 'The Last Ones' (TLO). It's the cadence. AISI's 32-step corporate-network simulation went from 'no model has ever finished it' to 'models from two different frontier labs have finished it' in roughly ten days. Anthropic's Claude Mythos Preview cleared TLO end-to-end on April 14, in 3 of 10 attempts. On April 24, OpenAI shipped GPT-5.5, which succeeded in 2 of 10 attempts on the same range. That is not a slow creep toward a ceiling. That is the curve bending at a pace that outruns the publication cycle of the evaluations themselves.
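
Both results rest on ten attempts each, so the two success rates are hard to tell apart. That is part of why the cadence matters more than the ranking. A minimal sketch of that arithmetic, assuming each attempt is an independent trial and using a standard 95% Wilson score interval (the function name `wilson_interval` and the independence assumption are illustrative, not anything AISI or the labs have published):

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Return the Wilson score interval for a binomial success rate.

    z = 1.96 corresponds to roughly 95% confidence.
    Assumes independent attempts, which is an illustrative simplification.
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= successes <= trials:
        raise ValueError("successes must be between 0 and trials")

    p = successes / trials
    denom = 1 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return center - half_width, center + half_width


# Success counts as reported in the text above: 3 of 10 and 2 of 10.
mythos_low, mythos_high = wilson_interval(3, 10)
gpt_low, gpt_high = wilson_interval(2, 10)

print(f"3 of 10: {mythos_low:.1%} to {mythos_high:.1%}")
print(f"2 of 10: {gpt_low:.1%} to {gpt_high:.1%}")
```

Under these assumptions the intervals come out to roughly 11% to 60% for 3 of 10 and roughly 6% to 51% for 2 of 10. They overlap heavily, so ten attempts cannot separate the two models. What the numbers do establish is that both rates are above zero.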

What makes the cadence consequential is that TLO is not a toy benchmark. AISI estimates that the full chain, which spans four subnets and roughly twenty hosts, takes a human expert about 20 hours. That models from two independent labs solved it within the same fortnight suggests the underlying capability, multi-step planning under noisy network feedback, is now a general property of frontier post-training rather than a bespoke trick. Defenders should plan budgets and red-team cadence accordingly: the 'first model to do X in cyber' headline is going to keep arriving, and the gap between first and second is shrinking toward the length of a patch cycle.