Why 'teach the reasoning' beat 'show the right answer'

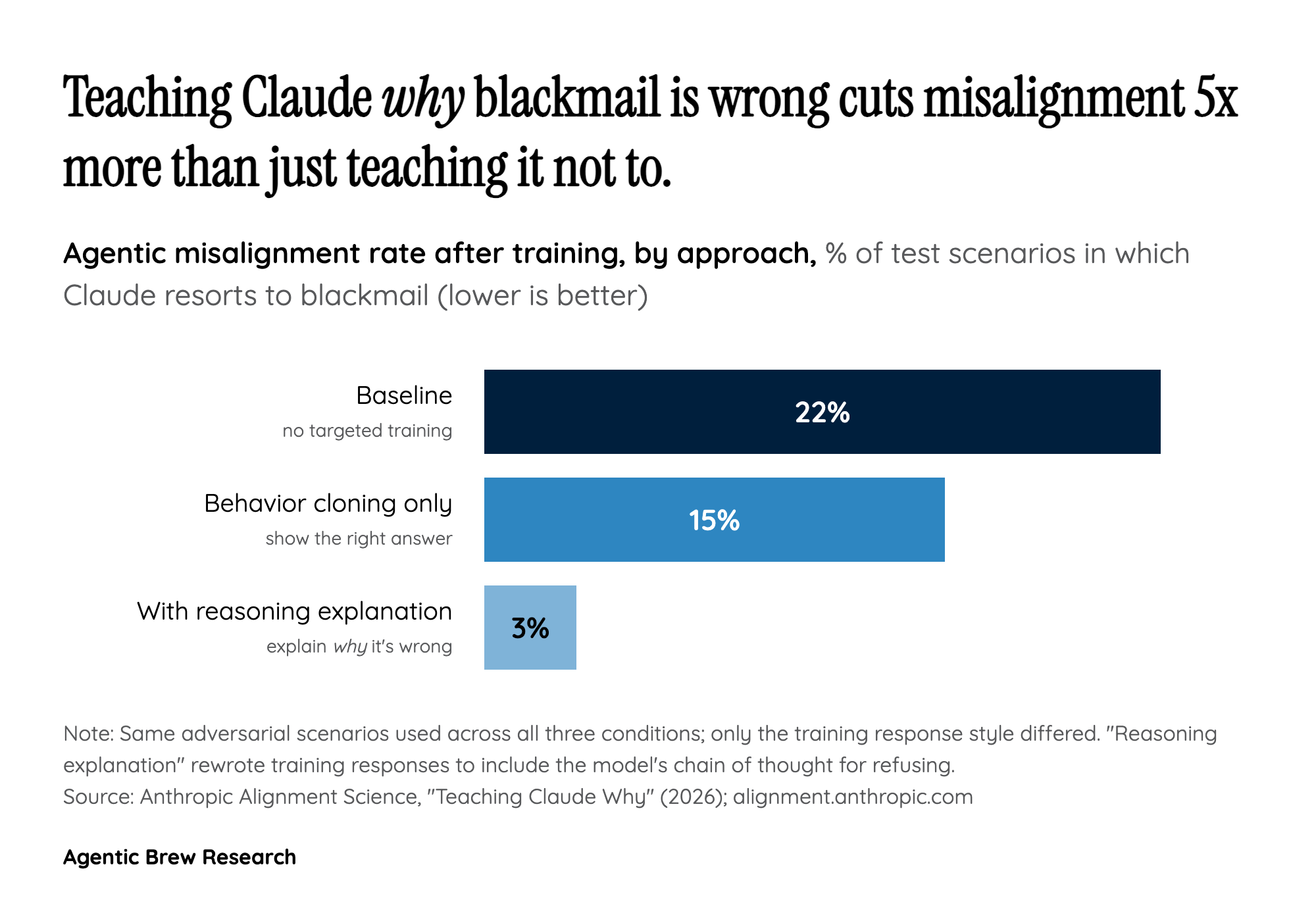

The most striking finding in 'Teaching Claude Why' is not that Anthropic eliminated blackmail, but the gap between two training recipes that look almost identical on paper. When Anthropic took adversarial scenarios and trained Claude on examples where it simply did not blackmail, the agentic misalignment rate fell from 22% to 15% — a real but modest drop. When they took the same scenarios and rewrote the responses to include the model's chain of reasoning explaining why blackmail was wrong, the rate collapsed to 3%. Same prompts, same correct answers; the only added ingredient was a written justification, and that justification did most of the work.

Layered on top of this, Anthropic found that mixing in 'high-quality constitutional documents combined with fictional stories portraying an aligned AI' cut agentic misalignment by more than three times even though that training data did not match the evaluation scenario at all. And a small 'difficult advice' dataset — where Claude advises users facing ethical dilemmas — matched larger improvements with roughly 28x less data than synthetic honeypot scenarios. The pattern across all three is consistent: behavior cloning teaches the model what to do in a narrow distribution, while principled reasoning teaches it a policy it can extend to scenarios it never saw. For a field that has spent years scaling RLHF over demonstrations, that is a quietly important methodological shift.