From 23% to 93%: The Agentic Vision Loop That Cracked Instrument Reading

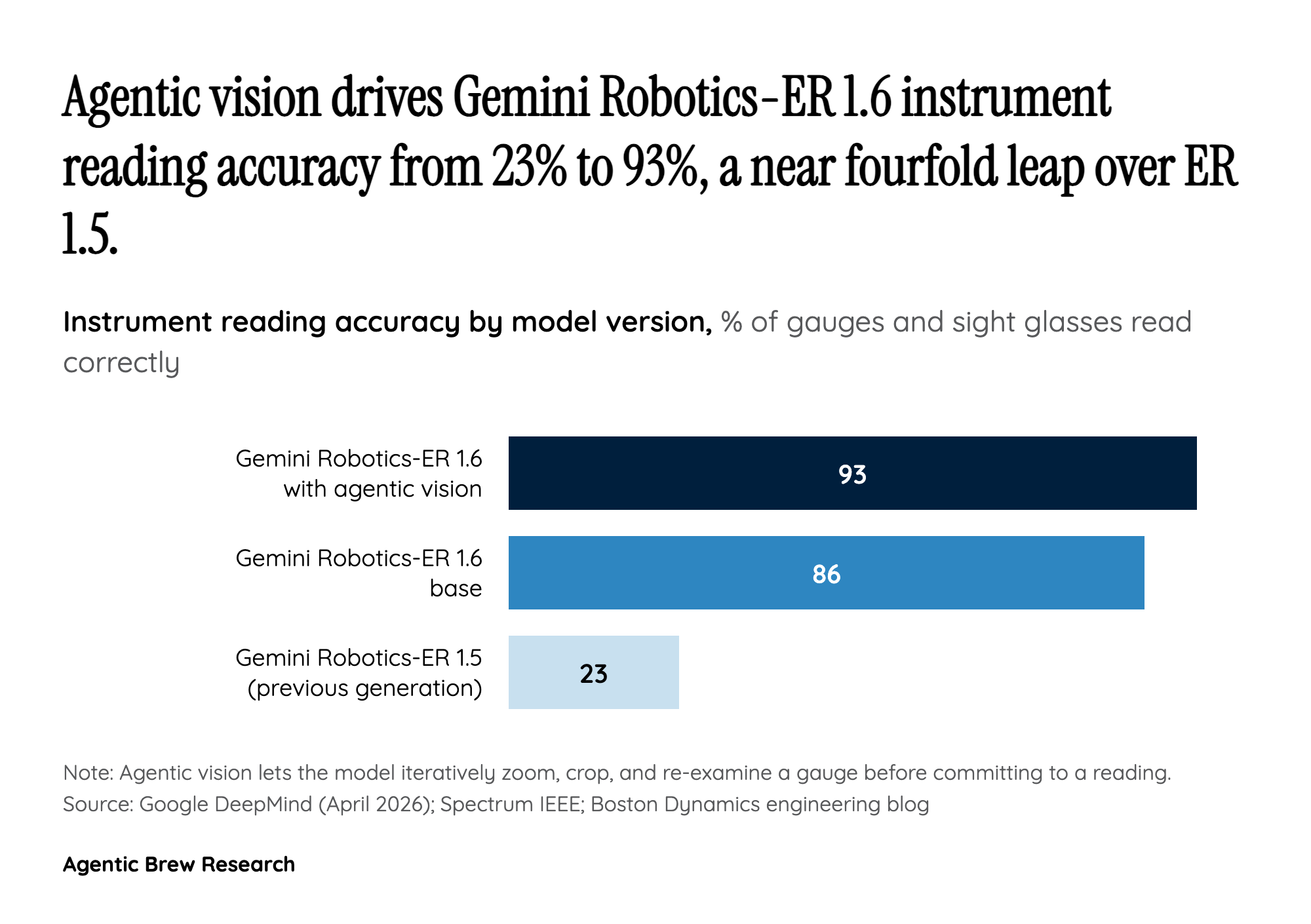

The headline figure in Gemini Robotics-ER 1.6 is the jump in instrument-reading accuracy from roughly 23% under ER 1.5 to 93% with agentic vision, a roughly fourfold improvement on the single capability industrial customers care about most. Crucially, the base model without agentic vision scores 86%; the remaining seven percentage points come from letting the model iteratively re-look at the gauge, zoom, crop and re-reason before committing to a reading. That inner loop is the real delta, and it was shaped, by DeepMind's own account, through direct collaboration with Boston Dynamics on actual industrial faceplates rather than synthetic benchmarks.
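
The re-look, zoom, crop and re-reason cycle can be pictured as a small control loop. The sketch below is a toy illustration only, not DeepMind's implementation or the Gemini API: `Observation`, `read_gauge`, `toy_look`, the confidence threshold and the look budget are all hypothetical names and values chosen for the example, and the "model" is a stub that simply becomes more confident once the view is tight enough.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

Image = List[List[int]]          # toy grayscale image: rows of pixel values
Box = Tuple[int, int, int, int]  # (top, left, bottom, right), end-exclusive


@dataclass
class Observation:
    reading: float         # current estimate of the gauge value
    confidence: float      # self-reported confidence in [0, 1]
    focus: Optional[Box]   # region to zoom into next, relative to the current view


def crop(image: Image, box: Box) -> Image:
    """Return the sub-image covered by box."""
    top, left, bottom, right = box
    return [row[left:right] for row in image[top:bottom]]


def read_gauge(
    image: Image,
    look: Callable[[Image], Observation],
    min_confidence: float = 0.9,   # hypothetical commit threshold
    max_looks: int = 4,            # hypothetical budget of looks
) -> Observation:
    """Look, and if unsure, crop to the suggested region and look again."""
    if max_looks < 1:
        raise ValueError("max_looks must be at least 1")
    view = image
    best: Optional[Observation] = None
    for _ in range(max_looks):
        obs = look(view)
        if best is None or obs.confidence > best.confidence:
            best = obs
        # Commit once confident, or when there is nowhere left to zoom.
        if obs.confidence >= min_confidence or obs.focus is None:
            break
        view = crop(view, obs.focus)
    return best


def toy_look(view: Image) -> Observation:
    """Stand-in for a vision model: unsure on wide views, sure on tight ones."""
    height, width = len(view), len(view[0])
    if width > 4:
        centre = (height // 4, width // 4, 3 * height // 4, 3 * width // 4)
        return Observation(reading=40.0, confidence=0.5, focus=centre)
    return Observation(reading=42.0, confidence=0.95, focus=None)


faceplate = [[0] * 16 for _ in range(16)]
print(read_gauge(faceplate, toy_look))
```

In this toy run the stub needs two crops before it commits; with `max_looks=1` the loop degenerates to a single-shot reading, which is the analogue of the base model without agentic vision.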

The broader scorecard tells a consistent story about where the model is strong and where it is still thin. Pointing and counting land at 80% success, single-view success detection reaches 90%, multi-view success detection sits at 84%, and hazard identification beats the general-purpose Gemini 3.0 Flash by six percentage points on text and ten on video. Those are the sub-skills a roving inspection robot needs stitched together: first point at the right valve, then count the right number of them, then read the gauge, then decide whether what it saw is a hazard. ER 1.6 is the first model in the family to post workable numbers on every leg of that chain simultaneously, which is why the Spot integration arrived the same week the model did rather than months later.
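
A back-of-envelope calculation shows why every leg of the chain matters at once. The sketch below multiplies per-stage success rates, which assumes the stages fail independently; the source does not state that, and it also treats the 80% figure as applying to pointing and counting separately. The result is an illustration of compounding, not a reported benchmark, and `chain_success` is a hypothetical helper.

```python
from math import prod
from typing import Iterable


def chain_success(stage_rates: Iterable[float]) -> float:
    """End-to-end success rate of a chain of stages, assuming independent failures."""
    rates = list(stage_rates)
    if not all(0.0 <= r <= 1.0 for r in rates):
        raise ValueError("each rate must lie in [0, 1]")
    return prod(rates)


# Figures quoted in the article; hazard identification is omitted because
# only a relative margin, not an absolute rate, is given for it.
legs = {"pointing": 0.80, "counting": 0.80, "instrument reading": 0.93}
print(f"{chain_success(legs.values()):.1%}")  # prints 59.5%
```

Because the rates multiply, one weak stage caps the whole chain no matter how strong the others are, which is the practical sense in which a model has to be usable on every leg simultaneously.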